DUC-2005 Summarization Task

A DUC-2005 Summarization Task is an NLP benchmark task that evaluates the performance of topic-focused multi-document text summarization systems.

- Context:

- It is part of the DUC Workshop Series.

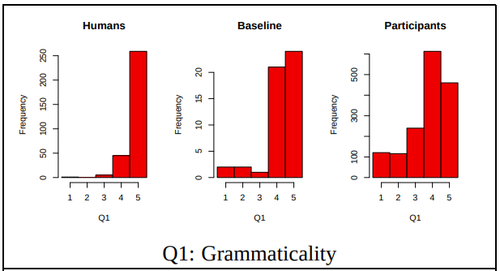

- It evaluated text summarization systems using five linguistic quality questions: Grammaticality (Q1), Non-redundancy (Q2), Referential clarity (Q3), Focus (Q4), and Structure and Coherence (Q5).

- It evaluated the performance of all participating text summarization systems, as reported in Dang (2005).

- Example(s):

- The scores of DUC 2005 text summarization systems on Q1 (Grammaticality).

- Counter-Example(s):

- See: Performance Metric, Text Summarization System, Natural Language Processing System, SQuASH Project, ROUGE.
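The five linguistic quality questions lend themselves to simple per-system averaging. The following is a minimal Python sketch, not the official DUC procedure: it assumes each summary receives an integer rating from 1 to 5 on every question, and the function name `average_quality`, the system id `sys_A`, and the sample ratings are hypothetical.

```python
from statistics import mean
from typing import Dict, List

# The five DUC 2005 linguistic quality questions, as labelled above.
QUESTIONS = {
    "Q1": "Grammaticality",
    "Q2": "Non-redundancy",
    "Q3": "Referential clarity",
    "Q4": "Focus",
    "Q5": "Structure and Coherence",
}

def average_quality(ratings: Dict[str, List[Dict[str, int]]]) -> Dict[str, Dict[str, float]]:
    """Return each system's mean rating per question.

    `ratings` maps a (hypothetical) system id to one dict per summary,
    e.g. {"Q1": 4, "Q2": 5, ...}. The 1-5 scale is an assumption.
    """
    result = {}
    for system, summaries in ratings.items():
        # Reject ratings outside the assumed 1-5 scale.
        for s in summaries:
            for q in QUESTIONS:
                if not 1 <= s[q] <= 5:
                    raise ValueError(f"{system}: rating {s[q]} for {q} outside 1-5")
        result[system] = {q: mean(s[q] for s in summaries) for q in QUESTIONS}
    return result

# Hypothetical ratings for two summaries produced by one system.
example = {
    "sys_A": [
        {"Q1": 4, "Q2": 5, "Q3": 3, "Q4": 4, "Q5": 2},
        {"Q1": 5, "Q2": 4, "Q3": 3, "Q4": 3, "Q5": 3},
    ],
}
print(average_quality(example)["sys_A"])
```

Keeping one mean per question, rather than collapsing Q1 to Q5 into a single number, preserves the distinction between the five qualities.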

References

2020

- (DUC, 2020) ⇒ https://duc.nist.gov/duc2005/tasks.html Retrieved: 2020-10-11.

- QUOTE: The main goals in DUC 2005 and their associated actions are listed below.

- 1) Inclusion of user/task context information for systems and human summarizers

- create DUC topics which explicitly reflect the specific interests of potential user in a task context.

- capture some general user/task preferences in a simple user profile

- 2) Evaluation of content in terms of more basic units of meaning

- develop automatic tools and/or manual procedures to identify basic units of meaning

- develop automatic tools and/or manual procedures to estimate the importance of such units based on agreement among humans

- use the above in evaluating systems;

- evaluate the new evaluation scheme(s).

- 3) Better understanding of normal human variability in a summarization task and how it may affect evaluation of summarization systems

- create as many manual reference summaries as feasible

- examine the relationship between the number of reference summaries, the ways in which they vary, and the effect of the number and variability on system evaluation (absolute scoring, relative system differences, reliability, etc.)

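Goal 2 above calls for weighting basic units of meaning by agreement among human summarizers. One scheme in this spirit is the pyramid method, sketched below in simplified Python form. It assumes the content units have already been identified and matched by hand, represented here as hypothetical string labels. Each unit's weight is the number of reference summaries that contain it, and a peer summary's score is its total weight divided by the maximum total achievable with the same number of units.

```python
from collections import Counter
from typing import Dict, Iterable, List

def unit_weights(reference_units: List[Iterable[str]]) -> Dict[str, int]:
    """Weight each content unit by how many reference summaries express it."""
    weights = Counter()
    for ref in reference_units:
        weights.update(set(ref))  # count a unit at most once per reference
    return dict(weights)

def pyramid_score(peer_units: Iterable[str], weights: Dict[str, int]) -> float:
    """Observed weight of the peer's matched units divided by the best weight
    any summary with the same number of units could reach."""
    matched = [weights[u] for u in set(peer_units) if u in weights]
    if not matched:
        return 0.0
    observed = sum(matched)
    # Ideal case: the same number of units, taken from the heaviest ones.
    ideal = sum(sorted(weights.values(), reverse=True)[: len(matched)])
    return observed / ideal

# Hypothetical unit labels for three reference summaries and one peer.
refs = [["a", "b", "c"], ["a", "b"], ["a", "d"]]
weights = unit_weights(refs)  # a=3, b=2, c=1, d=1
print(pyramid_score(["b", "c", "x"], weights))  # prints 0.6
```

Unit "x" has no counterpart in any reference, so it contributes nothing; the peer's two matched units earn 3 out of a possible 5.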

2005a

- (DUC, 2005) ⇒ http://www-nlpir.nist.gov/projects/duc/duc2005/

- QUOTE: The system task in 2005 will be to synthesize from a set of 25-50 documents a brief, well-organized, fluent answer to a need for information that cannot be met by just stating a name, date, quantity, etc. This task will model real-world complex question answering
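The system task quoted above takes a topic, an information need plus a set of 25 to 50 documents, as its input. A minimal Python sketch of such an input record follows. The class and field names are hypothetical, and the `granularity` field stands in for the simple user profile mentioned in the DUC 2005 goals; its allowed values "general" and "specific" are an assumption of this sketch.

```python
from dataclasses import dataclass, field
from typing import List

@dataclass
class Topic:
    """Hypothetical container for one DUC 2005-style topic."""
    topic_id: str
    question: str     # the complex information need to be answered
    granularity: str  # assumed user-profile value: "general" or "specific"
    documents: List[str] = field(default_factory=list)

    def validate(self) -> None:
        # The task description gives 25-50 documents per topic.
        if not 25 <= len(self.documents) <= 50:
            raise ValueError(
                f"{self.topic_id}: expected 25-50 documents, got {len(self.documents)}"
            )
        if self.granularity not in ("general", "specific"):
            raise ValueError(f"{self.topic_id}: unknown granularity {self.granularity!r}")

# Hypothetical topic with 30 placeholder document ids.
topic = Topic(
    topic_id="hypothetical-001",
    question="What factors are discussed as causes of the event?",
    granularity="specific",
    documents=[f"doc-{i}" for i in range(30)],
)
topic.validate()  # passes: 30 documents, known granularity
```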

2005b

- (Dang, 2005) ⇒ Hoa Trang Dang (2005, October). "Overview of DUC 2005". In: Proceedings of the Document Understanding Conference (Vol. 2005, pp. 1-12).

- QUOTE: The focus of DUC 2005 was on developing new evaluation methods that take into account variation in content in human-authored summaries. Therefore, DUC 2005 had a single user-oriented, question-focused summarization task that allowed the community to put some time and effort into helping with the new evaluation framework. The summarization task was to synthesize from a set of 25-50 documents a well-organized, fluent answer to a complex question. The relatively generous allowance of 250 words for each answer reveals how difficult it is for current summarization systems to produce fluent multi-document summaries.
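The 250-word allowance and the use of several human reference summaries both shape how system answers can be scored automatically. The Python sketch below truncates an answer to 250 words and computes a simplified ROUGE-2-style bigram recall, jackknifed over the reference set (the best score against each leave-one-out subset of references, then averaged). It is an illustration under stated assumptions, not the official ROUGE toolkit: it uses plain lower-cased whitespace tokenization with no stemming or stopword handling, and all function names are hypothetical.

```python
from collections import Counter
from typing import List

WORD_LIMIT = 250  # answer length allowance quoted above

def truncate(text: str, limit: int = WORD_LIMIT) -> List[str]:
    """Lower-case, split on whitespace, and keep at most `limit` tokens."""
    return text.lower().split()[:limit]

def bigrams(tokens: List[str]) -> Counter:
    """Multiset of adjacent token pairs."""
    return Counter(zip(tokens, tokens[1:]))

def rouge2_recall(candidate: str, reference: str) -> float:
    """Clipped bigram overlap divided by the reference's bigram count."""
    cand = bigrams(truncate(candidate))
    ref = bigrams(truncate(reference))
    total = sum(ref.values())
    if total == 0:
        return 0.0
    overlap = sum((cand & ref).values())  # & keeps the minimum count per bigram
    return overlap / total

def jackknife_rouge2(candidate: str, references: List[str]) -> float:
    """Average over leave-one-out reference subsets of the best recall."""
    if not references:
        return 0.0
    if len(references) == 1:
        return rouge2_recall(candidate, references[0])
    scores = []
    for i in range(len(references)):
        rest = references[:i] + references[i + 1:]
        scores.append(max(rouge2_recall(candidate, r) for r in rest))
    return sum(scores) / len(scores)

# Hypothetical candidate answer and two reference summaries.
refs = ["the cat sat on a mat", "a cat sat on the mat"]
print(jackknife_rouge2("the cat sat on the mat", refs))
```

Here the candidate recovers 3 of 5 reference bigrams against the first reference and 4 of 5 against the second, so the jackknifed score is the mean of 0.8 and 0.6.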