Spark Driver Process

A Spark Driver Process is a software process that manages Spark jobs and schedules Spark tasks.

- Context:

- It can (typically) be available the entire time the Spark Application is running.

- It can (typically) listen for and accept incoming connections from its Spark Executors throughout its lifetime.

- Example(s):

- …

- Counter-Example(s):

- a Spark Task.

- a Spark Executor.

- See: Spark Cluster Manager, Spark Job.

References

2016

- http://www.cloudera.com/documentation/enterprise/5-6-x/topics/cdh_ig_spark_apps.html

- QUOTE: Spark application execution involves runtime concepts such as driver, executor, task, job, and stage. Understanding these concepts is vital for writing fast and resource efficient Spark programs.

At runtime, a Spark application maps to a single driver process and a set of executor processes distributed across the hosts in a cluster.

The driver process manages the job flow and schedules tasks and is available the entire time the application is running. Typically, this driver process is the same as the client process used to initiate the job, although when run on YARN, the driver can run in the cluster. In interactive mode, the shell itself is the driver process.

The executors are responsible for executing work, in the form of tasks, as well as for storing any data that you cache. Executor lifetime depends on whether dynamic allocation is enabled. An executor has a number of slots for running tasks, and will run many concurrently throughout its lifetime.

Invoking an action inside a Spark application triggers the launch of a job to fulfill it. Spark examines the dataset on which that action depends and formulates an execution plan. The execution plan assembles the dataset transformations into stages. A stage is a collection of tasks that run the same code, each on a different subset of the data.
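The job, stage, and task relationship described in the quote can be illustrated with a toy model in plain Python. This is only a sketch and does not use Spark or its API: the names `split_into_partitions`, `run_stage`, and `run_job` are hypothetical, a thread pool stands in for an executor's task slots, and each function is treated as its own stage, which ignores how Spark actually decides stage boundaries.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_partitions(data, num_partitions):
    """Split a list into contiguous partitions of roughly equal size."""
    size, extra = divmod(len(data), num_partitions)
    partitions, start = [], 0
    for i in range(num_partitions):
        end = start + size + (1 if i < extra else 0)
        partitions.append(data[start:end])
        start = end
    return partitions


def run_stage(stage_fn, partitions, slots):
    """Run one task per partition; every task runs the same code (stage_fn)."""
    # The pool size plays the role of the executor's task slots (an assumption
    # of this toy model, not Spark's real scheduler).
    with ThreadPoolExecutor(max_workers=slots) as pool:
        return list(pool.map(stage_fn, partitions))


def run_job(data, stages, num_partitions=4, slots=2):
    """Toy 'driver': run the stages in order, feeding each stage's output forward."""
    partitions = split_into_partitions(data, num_partitions)
    for stage_fn in stages:
        partitions = run_stage(stage_fn, partitions, slots)
    return partitions


def double_all(partition):
    return [x * 2 for x in partition]


def keep_multiples_of_four(partition):
    return [x for x in partition if x % 4 == 0]


result = run_job(list(range(10)), [double_all, keep_multiples_of_four])
flat = [x for partition in result for x in partition]
print(flat)
```

Each call to `run_stage` creates as many tasks as there are partitions, and at most `slots` of them run at the same time, which mirrors the quoted description of a stage as tasks that run the same code on different subsets of the data.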

2015

- http://spark.apache.org/docs/latest/cluster-overview.html

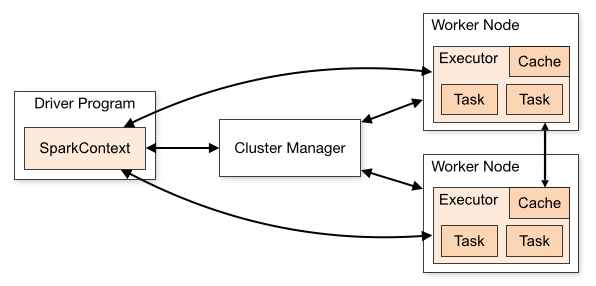

- QUOTE: Spark applications run as independent sets of processes on a cluster, coordinated by the SparkContext object in your main program (called the driver program).

Specifically, to run on a cluster, the SparkContext can connect to several types of cluster managers (either Spark’s own standalone cluster manager, Mesos or YARN), which allocate resources across applications. Once connected, Spark acquires executors on nodes in the cluster, which are processes that run computations and store data for your application. Next, it sends your application code (defined by JAR or Python files passed to SparkContext) to the executors. Finally, SparkContext sends tasks to the executors to run.

There are several useful things to note about this architecture:

- Each application gets its own executor processes, which stay up for the duration of the whole application and run tasks in multiple threads. This has the benefit of isolating applications from each other, on both the scheduling side (each driver schedules its own tasks) and executor side (tasks from different applications run in different JVMs). However, it also means that data cannot be shared across different Spark applications (instances of SparkContext) without writing it to an external storage system.

- Spark is agnostic to the underlying cluster manager. As long as it can acquire executor processes, and these communicate with each other, it is relatively easy to run it even on a cluster manager that also supports other applications (e.g. Mesos/YARN).

- The driver program must listen for and accept incoming connections from its executors throughout its lifetime (e.g., see spark.driver.port and spark.fileserver.port in the network config section). As such, the driver program must be network addressable from the worker nodes.

- Because the driver schedules tasks on the cluster, it should be run close to the worker nodes, preferably on the same local area network. If you’d like to send requests to the cluster remotely, it’s better to open an RPC to the driver and have it submit operations from nearby than to run a driver far away from the worker nodes.
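The requirement that the driver listen for and accept connections from its executors can be pictured with a small socket sketch in plain Python. It is an illustration under stated assumptions, not Spark's actual RPC protocol: `start_driver` and `executor_register` are hypothetical names, the "registration" is a single text line, and everything runs on the loopback interface.

```python
import socket
import threading


def start_driver(expected_executors):
    """Open a listening socket and collect one registration line per executor."""
    server = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    server.bind(("127.0.0.1", 0))  # port 0 lets the OS pick a free port
    server.listen()
    port = server.getsockname()[1]
    registered = []

    def accept_loop():
        # The toy driver keeps accepting until every expected executor has
        # connected; a real driver stays reachable for its whole lifetime.
        for _ in range(expected_executors):
            conn, _addr = server.accept()
            with conn, conn.makefile("r") as reader:
                registered.append(reader.readline().strip())
        server.close()

    thread = threading.Thread(target=accept_loop)
    thread.start()
    return port, thread, registered


def executor_register(driver_port, executor_id):
    """Toy 'executor': connect back to the driver and announce itself."""
    with socket.create_connection(("127.0.0.1", driver_port)) as conn:
        conn.sendall(f"{executor_id}\n".encode())


port, driver_thread, registered = start_driver(expected_executors=3)
for i in range(3):
    executor_register(port, f"executor-{i}")
driver_thread.join(timeout=10)
print(sorted(registered))
```

The executors initiate the connections here, so the sketch only works if the driver's address and port are reachable from where the executors run. This is the same reason the quoted documentation says the driver must be network addressable from the worker nodes.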
