sklearn.linear_model.HuberRegressor


A sklearn.linear_model.HuberRegressor is a Huber Regression System within the sklearn.linear_model module.

- AKA: HuberRegressor, linear_model.HuberRegressor.

- Context

- Usage:

- 1) Import the Huber Regression model from scikit-learn:

from sklearn.linear_model import HuberRegressor

- 2) Create a design matrix X and a response vector Y.

- 3) Create a Huber Regression object:

Hreg = HuberRegressor([epsilon=1.35, max_iter=100, alpha=0.0001, warm_start=False, fit_intercept=True, tol=1e-05])

- 4) Choose method(s):

- Fit the Huber Regression model to the dataset:

Hreg.fit(X, Y[, sample_weight])

- Predict Y using the linear model with estimated coefficients:

Y_pred = Hreg.predict(X)

- Return the coefficient of determination (R^2) of the prediction:

Hreg.score(X, Y[, sample_weight=w])

- Get estimator parameters:

Hreg.get_params([deep])

- Set estimator parameters:

Hreg.set_params(**params)
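The four usage steps can be combined into one runnable sketch. The synthetic data, the random seed, and the `epsilon=1.5` value passed to `set_params` are illustrative assumptions, not part of the scikit-learn documentation; `sample_weight` is an optional argument of `fit` and is simply left out here.

```python
# Step 1: import the Huber Regression model (plus NumPy for toy data)
import numpy as np
from sklearn.linear_model import HuberRegressor

# Step 2: create a design matrix X and a response vector Y
# (synthetic data, assumed purely for illustration)
rng = np.random.default_rng(42)
X = rng.normal(size=(100, 2))
Y = X @ np.array([1.5, -2.0]) + 0.3 + rng.normal(scale=0.1, size=100)

# Step 3: create the Huber Regression object, writing the defaults out explicitly
Hreg = HuberRegressor(epsilon=1.35, max_iter=100, alpha=0.0001,
                      warm_start=False, fit_intercept=True, tol=1e-05)

# Step 4: fit, predict, score, and inspect/modify parameters
Hreg.fit(X, Y)                 # sample_weight is optional and omitted here
Y_pred = Hreg.predict(X)       # predictions from the fitted linear model
r2 = Hreg.score(X, Y)          # coefficient of determination R^2
params = Hreg.get_params()     # dict of constructor parameters
Hreg.set_params(epsilon=1.5)   # hypothetical new value; returns the estimator

print("coef:", Hreg.coef_, "intercept:", Hreg.intercept_, "R^2:", r2)
```

Note that `set_params` only changes the stored hyperparameter; the fitted coefficients stay as they are until `fit` is called again.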


- Example(s):

| Input: | Output: |

#Importing modules

#Calculaton of RMSE and Explained Variances

# Printing Results

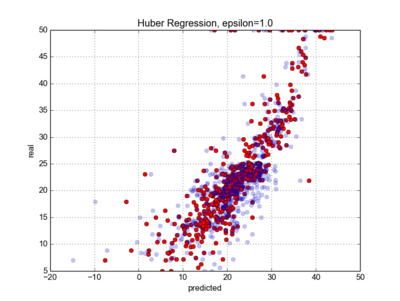

#plotting real vs predicted data

|

|
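The example's steps (importing modules, computing the RMSE and explained variance, printing results) can be sketched as follows. The dataset is a synthetic assumption, since the original example's data is not recoverable here; the plotting step is omitted to keep the sketch dependency-light (a matplotlib scatter of `y` against `y_pred` would be one way to reproduce it).

```python
# Importing modules
import numpy as np
from sklearn.linear_model import HuberRegressor
from sklearn.metrics import mean_squared_error, explained_variance_score

# Synthetic data (an assumption for illustration): y = 3x + 1 + Gaussian noise
rng = np.random.default_rng(0)
X = rng.uniform(0, 10, size=(200, 1))
y = 3.0 * X[:, 0] + 1.0 + rng.normal(scale=1.0, size=200)

# Fit the model and predict on the same data
Hreg = HuberRegressor().fit(X, y)
y_pred = Hreg.predict(X)

# Calculation of RMSE and explained variance
rmse = np.sqrt(mean_squared_error(y, y_pred))
evs = explained_variance_score(y, y_pred)

# Printing results
print(f"Coefficient: {Hreg.coef_[0]:.3f}, intercept: {Hreg.intercept_:.3f}")
print(f"RMSE: {rmse:.3f}")
print(f"Explained variance: {evs:.3f}")
```

The RMSE is computed as the square root of `mean_squared_error` rather than through a keyword option, which keeps the sketch working across scikit-learn versions.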

- Counter-Example(s):

- See: Regression System, Regressor, Cross-Validation Task, Ridge Regression Task, Bayesian Analysis.

References

2017

- http://scikit-learn.org/stable/modules/generated/sklearn.linear_model.HuberRegressor.html

- QUOTE:

class sklearn.linear_model.HuberRegressor(epsilon=1.35, max_iter=100, alpha=0.0001, warm_start=False, fit_intercept=True, tol=1e-05)
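A minimal sketch of the outlier robustness attributed to this class, contrasting it with ordinary least squares (`LinearRegression`). The data, the choice to shift the ten largest-x samples down by 50, and `max_iter=1000` (raised from the default to be safe on unscaled features) are assumptions made for illustration.

```python
import numpy as np
from sklearn.linear_model import HuberRegressor, LinearRegression

# Clean data with true slope 3 and intercept 1 (synthetic, for illustration)
rng = np.random.default_rng(1)
X = rng.uniform(0, 10, size=(100, 1))
y = 3.0 * X[:, 0] + 1.0 + rng.normal(scale=0.5, size=100)

# Corrupt the 10 samples with the largest x by shifting their targets down strongly
idx = np.argsort(X[:, 0])[-10:]
y[idx] -= 50.0

huber = HuberRegressor(max_iter=1000).fit(X, y)
ols = LinearRegression().fit(X, y)

print("true slope:              3.0")
print(f"HuberRegressor slope:    {huber.coef_[0]:.3f}")
print(f"LinearRegression slope:  {ols.coef_[0]:.3f}")
# outliers_ is a boolean mask of samples whose scaled residual exceeds epsilon
print("samples flagged as outliers:", int(huber.outliers_.sum()))
```

The corrupted points pull the least-squares slope far from 3, while each of them contributes only a bounded (absolute-loss) term to the Huber fit. The `outliers_` mask can also flag a few clean points whose noise happens to exceed the threshold.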

- QUOTE:

- Linear regression model that is robust to outliers.

- The Huber Regressor optimizes the squared loss for the samples where $|(y - X'w)/\sigma| < \epsilon$ and the absolute loss for the samples where $|(y - X'w)/\sigma| > \epsilon$, where $w$ and $\sigma$ are parameters to be optimized. The parameter $\sigma$ makes sure that if $y$ is scaled up or down by a certain factor, one does not need to rescale $\epsilon$ to achieve the same robustness. Note that this does not take into account the fact that the different features of X may be of different scales.

- This makes sure that the loss function is not heavily influenced by the outliers while not completely ignoring their effect.
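The piecewise loss described in the quote can be written as a single objective. The form below follows the one given in the scikit-learn user guide as best understood here (treat the exact notation as an assumption); $z_i$ uses this page's $(y - X'w)/\sigma$ notation, which is equivalent because $H_\epsilon$ is symmetric, and an intercept, when fitted, is left out for brevity.

```latex
\min_{w,\,\sigma}\; \sum_{i=1}^{n}\Bigl(\sigma + H_{\epsilon}(z_i)\,\sigma\Bigr) + \alpha\,\lVert w\rVert_2^2,
\qquad z_i = \frac{y_i - X_i'w}{\sigma},
\qquad H_{\epsilon}(z) =
\begin{cases}
z^2, & |z| < \epsilon,\\
2\epsilon|z| - \epsilon^2, & \text{otherwise.}
\end{cases}
```

Replacing $y$ by $cy$, $w$ by $cw$ and $\sigma$ by $c\sigma$ for some $c > 0$ leaves every $z_i$ unchanged and multiplies the first sum by $c$, which is why $\epsilon$ does not need rescaling; the penalty $\alpha\lVert w\rVert_2^2$ grows by $c^2$ instead, so this invariance is exact only when $\alpha = 0$. The two branches of $H_\epsilon$ agree in value ($\epsilon^2$) and slope ($2\epsilon$) at $|z| = \epsilon$, so the loss is continuously differentiable.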