2016 DeepResidualLearningforImageRec

- (He et al., 2016) ⇒ Kaiming He, Xiangyu Zhang, Shaoqing Ren, and Jian Sun. (2016). “Deep Residual Learning for Image Recognition.” In: Proceedings 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR 2016).

Subject Headings: Deep Residual Neural Network, Residual Neural Network (ResNet), He-Zhang-Ren-Sun Deep Residual Network.

Notes

- Version(s):

Cited By

- Google Scholar: ~ 67,762 Citations, Retrieved: 2021-01-24.

Quotes

Abstract

Deeper neural networks are more difficult to train. We present a residual learning framework to ease the training of networks that are substantially deeper than those used previously. We explicitly reformulate the layers as learning residual functions with reference to the layer inputs, instead of learning unreferenced functions. We provide comprehensive empirical evidence showing that these residual networks are easier to optimize, and can gain accuracy from considerably increased depth. On the ImageNet dataset we evaluate residual nets with a depth of up to 152 layers --- 8x deeper than VGG nets but still having lower complexity. An ensemble of these residual nets achieves 3.57% error on the ImageNet test set. This result won the 1st place on the ILSVRC 2015 classification task. We also present analysis on CIFAR-10 with 100 and 1000 layers. The depth of representations is of central importance for many visual recognition tasks. Solely due to our extremely deep representations, we obtain a 28% relative improvement on the COCO object detection dataset. Deep residual nets are foundations of our submissions to ILSVRC & COCO 2015 competitions, where we also won the 1st places on the tasks of ImageNet detection, ImageNet localization, COCO detection, and COCO segmentation.

1. Introduction

(...)

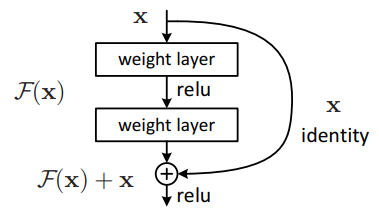

The formulation of $F(x) + x$ can be realized by feedforward neural networks with “shortcut connections” (Fig. 2). Shortcut connections (Bishop, 1995; Ripley, 1996; Venables & Ripley, 1999) are those skipping one or more layers. In our case, the shortcut connections simply perform identity mapping, and their outputs are added to the outputs of the stacked layers (Fig. 2). Identity shortcut connections add neither extra parameter nor computational complexity. The entire network can still be trained end-to-end by SGD with backpropagation, and can be easily implemented using common libraries (e.g., Caffe (Jia et al., 2014)) without modifying the solvers.
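As an illustration of the quoted formulation (not the authors' code), the following minimal sketch computes $F(x) + x$ with an identity shortcut in plain Python. The choice of $F$ as two linear maps with a ReLU in between, and the names `relu`, `linear`, and `residual_block`, are assumptions made for this example; any nonlinearity applied after the addition is left out.

```python
def relu(v):
    """Element-wise max(0, a)."""
    return [max(0.0, a) for a in v]


def linear(W, v):
    """Matrix-vector product; W is a list of rows."""
    return [sum(w * a for w, a in zip(row, v)) for row in W]


def residual_block(x, W1, W2):
    """Return F(x) + x, with the assumed F(x) = W2 * relu(W1 * x).

    The shortcut is the identity: it has no weights of its own,
    and costs only one element-wise addition.
    """
    f = linear(W2, relu(linear(W1, x)))
    return [fi + xi for fi, xi in zip(f, x)]


x = [1.0, -2.0, 3.0]
zeros = [[0.0] * 3 for _ in range(3)]
identity = [[1.0 if i == j else 0.0 for j in range(3)] for i in range(3)]

# With all-zero weights F(x) = 0, so the block reduces to the identity mapping.
print(residual_block(x, zeros, zeros))
# With identity weights F(x) = relu(x), which is added on top of x.
print(residual_block(x, identity, identity))
```

Because the shortcut carries $x$ through unchanged, the stacked layers only need to learn the residual $F(x)$; driving their weights toward zero is enough to recover an identity mapping.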


(...)

2. Related Work

3. Deep Residual Learning

3.1. Residual Learning

3.2. Identity Mapping by Shortcuts

3.3. Network Architectures

(...)

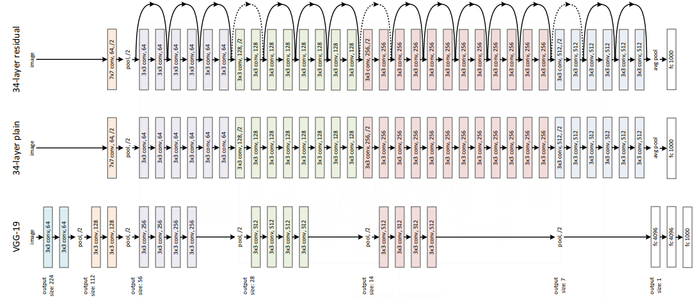

Residual Network. Based on the above plain network, we insert shortcut connections (Fig. 3, right) which turn the network into its counterpart residual version. The identity shortcuts (Eqn.(1)) can be directly used when the input and output are of the same dimensions (solid line shortcuts in Fig. 3). When the dimensions increase (dotted line shortcuts in Fig. 3), we consider two options: (A) The shortcut still performs identity mapping, with extra zero entries padded for increasing dimensions. This option introduces no extra parameter; (B) The projection shortcut in Eqn.(2) is used to match dimensions (done by 1×1 convolutions). For both options, when the shortcuts go across feature maps of two sizes, they are performed with a stride of 2.
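The two shortcut options quoted above can be sketched in plain Python as follows. This is an illustrative reading, not the authors' implementation: the feature-map layout (`fmap[row][col]` holding a list of channel values), the function names `shortcut_a` and `shortcut_b`, and realizing "a stride of 2" by keeping every second row and column are all assumptions of this example.

```python
def shortcut_a(fmap, out_channels, stride=2):
    """Option A: strided identity with zero-padded channels (no parameters)."""
    out = []
    for row in fmap[::stride]:
        out.append([px + [0.0] * (out_channels - len(px)) for px in row[::stride]])
    return out


def shortcut_b(fmap, W, stride=2):
    """Option B: 1x1 convolution with stride.

    A 1x1 convolution is a per-position linear map over channels,
    so W has shape [out_channels][in_channels].
    """
    out = []
    for row in fmap[::stride]:
        out.append([
            [sum(w * a for w, a in zip(w_row, px)) for w_row in W]
            for px in row[::stride]
        ])
    return out


# A 4x4 feature map with 2 channels; the pixel at (i, j) holds [i, j].
fmap = [[[float(i), float(j)] for j in range(4)] for i in range(4)]

# Hypothetical projection weights mapping 2 channels to 4.
W = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [1.0, -1.0]]

a = shortcut_a(fmap, out_channels=4)
b = shortcut_b(fmap, W)

print(len(a), len(a[0]), len(a[0][0]))  # spatial size halves, channels double
print(a[1][1])  # original pixel (2, 2) padded with zeros
print(b[1][1])  # original pixel (2, 2) projected by W
```

Both outputs have the same shape, so either can be added to the output of the stacked layers; option A does so without adding weights, while option B introduces the projection matrix `W`.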


3.4. Implementation

4. Experiments

References

2014

- (Jia et al., 2014) ⇒ Y. Jia, E. Shelhamer, J. Donahue, S. Karayev, J. Long, R. Girshick, S. Guadarrama, and T. Darrell (2014). “Caffe: Convolutional Architecture for Fast Feature Embedding.” In: arXiv:1408.5093.

1999

- (Venables & Ripley, 1999) ⇒ W. Venables, and B. Ripley (1999). “Modern Applied Statistics with S-PLUS.”

1996

- (Ripley, 1996) ⇒ B. D. Ripley (1996). “Pattern Recognition and Neural Networks.” Cambridge University Press.

1995

- (Bishop, 1995) ⇒ C. M. Bishop (1995). “Neural Networks for Pattern Recognition.” Oxford University Press.

BibTeX

@inproceedings{2016_DeepResidualLearningforImageRec,

author = {Kaiming He and

Xiangyu Zhang and

Shaoqing Ren and

Jian Sun},

title = {Deep Residual Learning for Image Recognition},

booktitle = {Proceedings 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR 2016)},

pages = {770--778},

publisher = {IEEE Computer Society},

year = {2016},

url = {https://doi.org/10.1109/CVPR.2016.90},

doi = {10.1109/CVPR.2016.90},

timestamp = {Wed, 16 Oct 2019 14:14:50 +0200},

}

| | Author | volume | Date Value | title | type | journal | titleUrl | doi | note | year |
|---|---|---|---|---|---|---|---|---|---|---|
| 2016 DeepResidualLearningforImageRec | Kaiming He, Xiangyu Zhang, Shaoqing Ren, Jian Sun | | | Deep Residual Learning for Image Recognition | | | | | | 2016 |