Machine Reading System

A Machine Reading System is a text reading system that implements a machine reading algorithm to solve a machine reading task.

- Example(s):

- a Deep LSTM Reading System such as:

- …

- Counter-Example(s):

- See: NELL System, Deep Neural Network, Long Short Term Memory, Attention Mechanism, Artificial Neural Network.

References

2015

- (Hermann et al., 2015) ⇒ Karl Moritz Hermann, Tomas Kocisky, Edward Grefenstette, Lasse Espeholt, Will Kay, Mustafa Suleyman, and Phil Blunsom. (2015). “Teaching Machines to Read and Comprehend.” In: Proceedings of the 28th International Conference on Neural Information Processing Systems (NIPS'15). arXiv:1506.03340v3

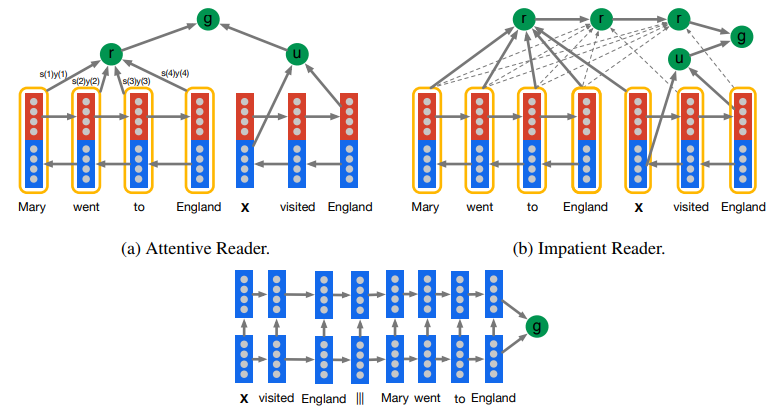

- QUOTE: The Deep LSTM Reader: Long short-term memory (LSTM, [18]) networks have recently seen considerable success in tasks such as machine translation and language modelling [17]. When used for translation, Deep LSTMs [19] have shown a remarkable ability to embed long sequences into a vector representation which contains enough information to generate a full translation in another language. Our first neural model for reading comprehension tests the ability of Deep LSTM encoders to handle significantly longer sequences. We feed our documents one word at a time into a Deep LSTM encoder, after a delimiter we then also feed the query into the encoder. Alternatively we also experiment with processing the query then the document. The result is that this model processes each document query pair as a single long sequence. Given the embedded document and query the network predicts which token in the document answers the query.
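
The pipeline described in the quote above (document tokens, a delimiter, then the query, encoded as one long sequence, followed by a prediction over document tokens) can be illustrated with a minimal pure-Python sketch. This is not the authors' implementation. It makes several assumptions: the delimiter string `|||`, the function names `build_sequence`, `encode` and `predict`, the two-layer depth, the 8-dimensional embeddings, and the bias-free LSTM cells are all hypothetical choices for illustration. The weights are random and untrained, so the output probabilities are meaningless; only the data flow is of interest.

```python
import math
import random

DELIM = "|||"  # hypothetical delimiter token between document and query


def build_sequence(document, query, query_first=False):
    """Join document and query tokens into one long sequence around a delimiter."""
    if query_first:
        return query + [DELIM] + document
    return document + [DELIM] + query


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def rand_matrix(rng, rows, cols):
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]


def matvec(m, v):
    return [sum(w * x for w, x in zip(row, v)) for row in m]


class LSTMLayer:
    """One LSTM layer with random, untrained weights and no biases (assumption)."""

    def __init__(self, rng, in_dim, hid_dim):
        self.hid_dim = hid_dim
        # One matrix per gate (input, forget, output, candidate), each acting
        # on the concatenation [input; previous hidden state].
        self.w = {g: rand_matrix(rng, hid_dim, in_dim + hid_dim) for g in "ifoc"}

    def step(self, x, h, c):
        z = x + h  # list concatenation
        i = [sigmoid(v) for v in matvec(self.w["i"], z)]
        f = [sigmoid(v) for v in matvec(self.w["f"], z)]
        o = [sigmoid(v) for v in matvec(self.w["o"], z)]
        g = [math.tanh(v) for v in matvec(self.w["c"], z)]
        c_new = [fv * cv + iv * gv for fv, cv, iv, gv in zip(f, c, i, g)]
        h_new = [ov * math.tanh(cv) for ov, cv in zip(o, c_new)]
        return h_new, c_new


def encode(tokens, embed, layers):
    """Feed tokens one at a time through the stacked layers; return the final top-layer state."""
    states = [([0.0] * l.hid_dim, [0.0] * l.hid_dim) for l in layers]
    for tok in tokens:
        x = embed[tok]
        for k, layer in enumerate(layers):
            h, c = layer.step(x, *states[k])
            states[k] = (h, c)
            x = h  # output of this layer is the input of the next
    return states[-1][0]


def predict(document, query, dim=8, seed=0, query_first=False):
    """Return a probability for each distinct document token (untrained, illustrative)."""
    rng = random.Random(seed)
    seq = build_sequence(document, query, query_first)
    embed = {t: [rng.uniform(-0.1, 0.1) for _ in range(dim)] for t in sorted(set(seq))}
    layers = [LSTMLayer(rng, dim, dim), LSTMLayer(rng, dim, dim)]
    g = encode(seq, embed, layers)
    # Score only tokens that occur in the document, then apply a softmax.
    candidates = sorted(set(document))
    out = {t: [rng.uniform(-0.1, 0.1) for _ in range(dim)] for t in candidates}
    scores = {t: sum(a * b for a, b in zip(out[t], g)) for t in candidates}
    top = max(scores.values())
    exps = {t: math.exp(s - top) for t, s in scores.items()}
    total = sum(exps.values())
    return {t: e / total for t, e in exps.items()}


doc = "ent1 visited ent2 on monday".split()
qry = "X visited ent2".split()
probs = predict(doc, qry)
print(max(probs, key=probs.get))
```

Passing `query_first=True` switches to the alternative ordering mentioned in the quote, in which the query is processed before the document. A trained system would learn the embedding, LSTM, and output weights from document-query-answer triples rather than sampling them at random.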