Neural Network Output Layer

A Neural Network Output Layer is the final layer of a neural network, whose neurons produce the network's output values.

- Example(s):

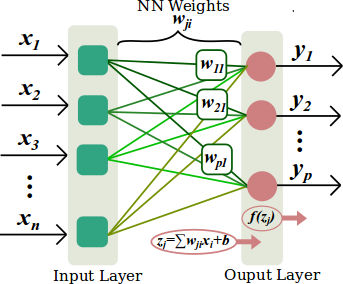

- the last neural network layer in the following Single Layer Neural Network with $n$ neuron inputs and $p$ neurons:

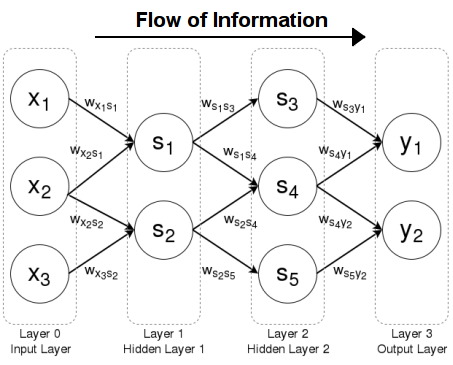

- the last neural network layer in the following Feed-Forward Neural Network:

- Counter-Example(s):

- See: Neural Network Topology, Artificial Neural Network, Neural Network Activation Function, Neural Network Transfer Function.

References

2017a

- (CS231n, 2017) ⇒ http://cs231n.github.io/neural-networks-1/#layers Retrieved: 2017-12-31

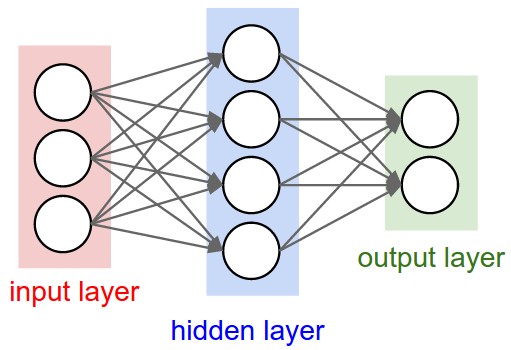

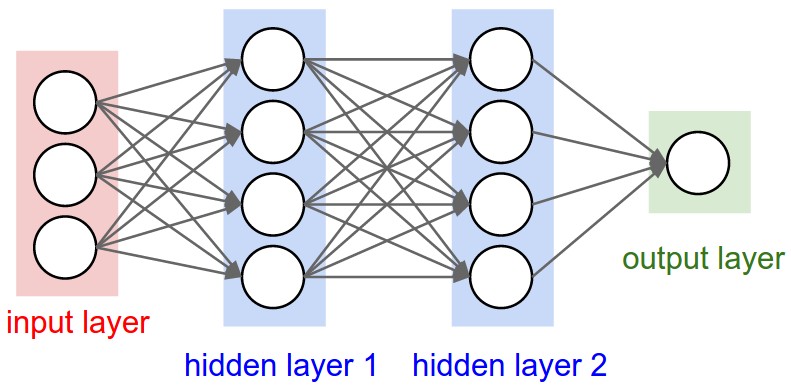

- Neural Networks as neurons in graphs. Neural Networks are modeled as collections of neurons that are connected in an acyclic graph. In other words, the outputs of some neurons can become inputs to other neurons. Cycles are not allowed since that would imply an infinite loop in the forward pass of a network. Instead of an amorphous blobs of connected neurons, Neural Network models are often organized into distinct layers of neurons. For regular neural networks, the most common layer type is the fully-connected layer in which neurons between two adjacent layers are fully pairwise connected, but neurons within a single layer share no connections. Below are two example Neural Network topologies that use a stack of fully-connected layers:

Left: A 2-layer Neural Network (one hidden layer of 4 neurons (or units) and one output layer with 2 neurons), and three inputs. Right: A 3-layer neural network with three inputs, two hidden layers of 4 neurons each and one output layer. Notice that in both cases there are connections (synapses) between neurons across layers, but not within a layer.

- Naming conventions. Notice that when we say N-layer neural network, we do not count the input layer. Therefore, a single-layer neural network describes a network with no hidden layers (input directly mapped to output). In that sense, you can sometimes hear people say that logistic regression or SVMs are simply a special case of single-layer Neural Networks. You may also hear these networks interchangeably referred to as “Artificial Neural Networks” (ANN) or “Multi-Layer Perceptrons” (MLP). Many people do not like the analogies between Neural Networks and real brains and prefer to refer to neurons as units.
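The counting convention above can be checked with a small sketch. The helper `describe_network` is hypothetical (it does not come from the quoted source). It assumes fully-connected layers and one bias per neuron, and it takes the right-hand network's output layer to have a single neuron, which the caption does not state.

```python
def describe_network(layer_sizes):
    """Summarise a fully-connected network given [inputs, hidden..., outputs].

    Hypothetical helper for illustration; assumes one bias per neuron.
    """
    if len(layer_sizes) < 2:
        raise ValueError("need at least an input size and an output size")
    n_layers = len(layer_sizes) - 1      # the input layer is not counted
    n_hidden = n_layers - 1              # layers between input and output
    neurons = sum(layer_sizes[1:])       # input values are not neurons
    # Every neuron connects to every unit in the previous layer.
    weights = sum(a * b for a, b in zip(layer_sizes, layer_sizes[1:]))
    biases = neurons
    return {
        "layers": n_layers,
        "hidden_layers": n_hidden,
        "neurons": neurons,
        "weights": weights,
        "biases": biases,
        "parameters": weights + biases,
    }


left = describe_network([3, 4, 2])       # the "2-layer" network
right = describe_network([3, 4, 4, 1])   # the "3-layer" network (1 output assumed)
single = describe_network([3, 2])        # no hidden layer: a single-layer network

for name, summary in (("left", left), ("right", right), ("single", single)):
    print(name, summary)
```

Under these assumptions, a size list with only an input and an output entry is a single-layer network, which is the case the quote compares to logistic regression.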

- Output layer. Unlike all layers in a Neural Network, the output layer neurons most commonly do not have an activation function (or you can think of them as having a linear identity activation function). This is because the last output layer is usually taken to represent the class scores (e.g. in classification), which are arbitrary real-valued numbers, or some kind of real-valued target (e.g. in regression).
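A minimal sketch of this idea, assuming a network with three inputs, one hidden layer of four ReLU units, and two output neurons. The weights, the choice of ReLU, and the function names are illustrative assumptions, not values from the quoted source. The hidden layer applies a non-linearity, while the output layer returns the affine result unchanged.

```python
def affine(weights, bias, inputs):
    """Compute W @ x + b for a weight matrix given as a list of rows."""
    return [
        sum(w * x for w, x in zip(row, inputs)) + b
        for row, b in zip(weights, bias)
    ]


def relu(values):
    """Hidden-layer non-linearity (ReLU chosen here only for illustration)."""
    return [max(0.0, v) for v in values]


def forward(x, W1, b1, W2, b2):
    hidden = relu(affine(W1, b1, x))   # hidden layer: affine map + activation
    scores = affine(W2, b2, hidden)    # output layer: identity activation
    return scores


# Hypothetical parameters: 3 inputs -> 4 hidden units -> 2 output scores.
W1 = [[0.5, -0.2, 0.1],
      [-0.3, 0.8, 0.0],
      [0.2, 0.2, -0.5],
      [0.0, -0.1, 0.4]]
b1 = [0.0, 0.1, -0.1, 0.0]
W2 = [[1.0, -1.0, 0.5, 0.0],
      [-0.5, 0.3, 0.0, 2.0]]
b2 = [0.0, -1.0]

scores = forward([1.0, 2.0, 3.0], W1, b1, W2, b2)
print(scores)  # one unbounded real-valued score per output neuron
```

Because no squashing function is applied at the end, the scores can be negative or greater than one. A classifier would typically pass them to a loss function or a softmax afterwards.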

2017b

- (Santos, 2017) ⇒ https://leonardoaraujosantos.gitbooks.io/artificial-inteligence/content/neural_networks.html Retrieved: 2018-01-07

- QUOTE: Neural networks are examples of Non-Linear hypothesis, where the model can learn to classify much more complex relations. Also it scale better than Logistic Regression for large number of features.

It's formed by artificial neurons, where those neurons are organised in layers. We have 3 types of layers:

- We classify the neural networks from their number of hidden layers and how they connect, for instance the network above have 2 hidden layers. Also if the neural network has/or not loops we can classify them as Recurrent or Feed-forward neural networks.

Neural networks from more than 2 hidden layers can be considered a deep neural network.
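The two classification criteria in this quote can be sketched as follows. Units are assumed to be numbered from 0 and connections given as directed `(source, target)` pairs. The function names and the label "shallow" are illustrative assumptions. The threshold of more than 2 hidden layers follows the quoted text, not a universal definition.

```python
def has_cycle(num_units, edges):
    """Return True if the directed connection graph contains a loop."""
    successors = {u: [] for u in range(num_units)}
    for src, dst in edges:
        successors[src].append(dst)

    WHITE, GREY, BLACK = 0, 1, 2        # unvisited, on current path, finished
    state = [WHITE] * num_units

    def visit(u):
        state[u] = GREY
        for v in successors[u]:
            if state[v] == GREY:        # back edge: we returned to the path
                return True
            if state[v] == WHITE and visit(v):
                return True
        state[u] = BLACK
        return False

    return any(state[u] == WHITE and visit(u) for u in range(num_units))


def classify(num_units, edges, num_hidden_layers):
    kind = "recurrent" if has_cycle(num_units, edges) else "feed-forward"
    depth = "deep" if num_hidden_layers > 2 else "shallow"
    return kind, depth


chain = [(0, 1), (1, 2), (2, 3)]             # input -> hidden -> hidden -> output
print(classify(4, chain, 2))                 # no loop, 2 hidden layers
print(classify(4, chain + [(3, 1)], 2))      # output feeds back into a hidden unit
print(classify(5, [(0, 1), (1, 2), (2, 3), (3, 4)], 3))
```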

2003

- (Aryal & Wang, 2003) ⇒ Aryal, D. R., & Wang, Y. W. (2003). Neural network forecasting of the production level of Chinese construction industry. Journal of Comparative International Management, 6(2).

- QUOTE: Networks layers : The most common type of artificial neural network consists of three groups, or layers, of units: a layer of “input” units is connected to a layer of “hidden” units, which is connected to a layer of “output” units. The activity of the input units represents the raw information that is fed into the network. The activity of each hidden unit is determined by the activities of the input units and the weights on the connections between the input and the hidden units. The behavior of the output units depends on the activity of the hidden units and the weights between the hidden and output units.
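One way to write the dependence described in this quote as equations is sketched below. The notation is assumed and does not come from the cited paper: $x_i$ are the input activities, $w_{ji}$ the input-to-hidden weights, $v_{kj}$ the hidden-to-output weights, and $f$ and $g$ the hidden and output activation functions. Bias terms are omitted because the quote does not mention them.

```latex
h_j = f\Big(\sum_{i} w_{ji}\, x_i\Big), \qquad
y_k = g\Big(\sum_{j} v_{kj}\, h_j\Big)
```

When the output layer has no activation function, $g$ is the identity and each output $y_k$ is simply a weighted sum of the hidden activities.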