2017 MemoryEfficientImplementationof

- (Pleiss et al., 2017) ⇒ Geoff Pleiss, Danlu Chen, Gao Huang, Tongcheng Li, Laurens van der Maaten, and Kilian Q. Weinberger. (2017). “Memory-Efficient Implementation of DenseNets.” eprint arXiv:1707.06990.

Subject Headings: DenseNet; Dense Block; Deep Convolutional Neural Network

Notes

Cited By

Quotes

Author Keywords

Abstract

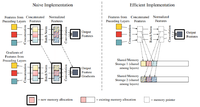

The DenseNet architecture is highly computationally efficient as a result of feature reuse. However, a naive DenseNet implementation can require a significant amount of GPU memory: If not properly managed, pre-activation batch normalization and contiguous convolution operations can produce feature maps that grow quadratically with network depth. In this technical report, we introduce strategies to reduce the memory consumption of DenseNets during training. By strategically using shared memory allocations, we reduce the memory cost for storing feature maps from quadratic to linear. Without the GPU memory bottleneck, it is now possible to train extremely deep DenseNets. Networks with 14M parameters can be trained on a single GPU, up from 4M. A 264-layer DenseNet (73M parameters), which previously would have been infeasible to train, can now be trained on a single workstation with 8 NVIDIA Tesla M40 GPUs. On the ImageNet ILSVRC classification dataset, this large DenseNet obtains a state-of-the-art single-crop top-1 error of 20.26%.
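The quadratic-versus-linear claim in the abstract can be illustrated with a rough counting sketch. This is a simplified model and not the authors' implementation: the function names, the single shared buffer, and the choice to count channels rather than bytes are all assumptions made for illustration. In a dense block, layer `l` receives the concatenation of all earlier feature maps, so giving every layer its own copy of that concatenation makes storage grow quadratically with depth, while reusing one shared buffer keeps it linear.

```python
def naive_stored_channels(num_layers, growth_rate, init_channels):
    """Channels kept in memory when every layer stores its own
    concatenated input copy (hypothetical counting model)."""
    total = 0
    for layer in range(num_layers):
        # Input to this layer: block input plus all earlier layer outputs.
        in_channels = init_channels + layer * growth_rate
        # Per-layer concatenated copy plus the layer's new output features.
        total += in_channels + growth_rate
    return total


def shared_stored_channels(num_layers, growth_rate, init_channels):
    """Channels kept in memory when the concatenated input lives in one
    shared buffer that each layer overwrites (hypothetical counting model)."""
    # Each feature map is stored exactly once.
    outputs = init_channels + num_layers * growth_rate
    # One buffer sized for the largest concatenated input (last layer).
    shared_buffer = init_channels + (num_layers - 1) * growth_rate
    return outputs + shared_buffer


if __name__ == "__main__":
    for depth in (4, 8, 16, 32):
        print(depth,
              naive_stored_channels(depth, 12, 24),
              shared_stored_channels(depth, 12, 24))
```

Under these assumptions, doubling the depth roughly quadruples the naive count for deep blocks but only about doubles the shared count. The sketch ignores the cost of recomputing the overwritten intermediate results during the backward pass, which is the price a shared-buffer scheme pays for the memory saving.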

Figures

References


| | Author | volume | Date Value | title | type | journal | titleUrl | doi | note | year |
|---|---|---|---|---|---|---|---|---|---|---|
| 2017 MemoryEfficientImplementationof | Gao Huang, Kilian Q. Weinberger, Laurens van der Maaten, Geoff Pleiss, Danlu Chen, Tongcheng Li | | | Memory-Efficient Implementation of DenseNets | | | | | | 2017 |