Perceptron Function

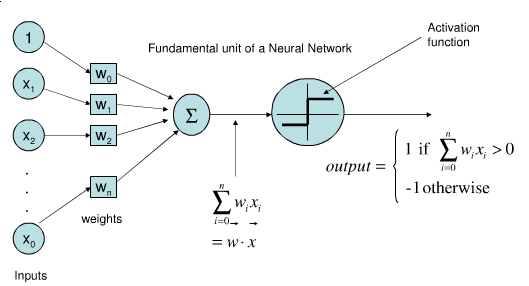

A Perceptron Function is a linear classification function composed of a parameterized weighted sum and an activation function.
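The definition above can be illustrated with a minimal sketch, assuming a binary output and a step activation that fires when the weighted sum plus a bias term is positive. The function name `perceptron` and the AND parameters are hypothetical choices made for illustration, not part of any cited source.

```python
from typing import Sequence


def perceptron(weights: Sequence[float], bias: float, x: Sequence[float]) -> int:
    """Return 1 if the weighted sum of the inputs plus the bias is positive, else 0."""
    # Parameterized weighted sum (a linear predictor function).
    weighted_sum = sum(w * xi for w, xi in zip(weights, x)) + bias
    # Step activation: threshold the sum at zero.
    return 1 if weighted_sum > 0 else 0


# Hypothetical fixed parameters that make the unit behave like logical AND.
and_weights, and_bias = [1.0, 1.0], -1.5
inputs = [(0, 0), (0, 1), (1, 0), (1, 1)]
outputs = [perceptron(and_weights, and_bias, x) for x in inputs]
print(outputs)
```

With the parameters held fixed, this corresponds to a Fixed-Parameter Perceptron Function; treating `weights` and `bias` as values to be learned gives the Free-Parameter case.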

- Context:

- It can be a component of a Feed-Forward Neural Network Function.

- It can range from being an Abstract Perceptron Function to being a Software Perceptron Function.

- It can range from being a Fixed-Parameter Perceptron Function to being a Free-Parameter Perceptron Function.

- It can range from (typically) being a Binary Perceptron Classifier to being a Multiclass Perceptron Classifier.

- It can be trained by a Perceptron Training System (that implements a perceptron algorithm to solve a perceptron training task).

- Example(s):

- Counter-Example(s):

- a Linear Network.

- a Multi-layer Neural Network, such as a Multi-Layer Perceptron.

- See: Single-layer Neural Network, Linear Classifier Model, Linear Predictor Function, Feature Vector, Online Algorithm, Artificial Neural Network.

References

2014

- (Wikipedia, 2014) ⇒ http://en.wikipedia.org/wiki/perceptron Retrieved:2014-8-20.

- In machine learning, the perceptron is an algorithm for supervised classification of an input into one of several possible non-binary outputs. It is a type of linear classifier, i.e. a classification algorithm that makes its predictions based on a linear predictor function combining a set of weights with the feature vector. The algorithm allows for online learning, in that it processes elements in the training set one at a time.

The perceptron algorithm dates back to the late 1950s; its first implementation, in custom hardware, was one of the first artificial neural networks to be produced.
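The online character of the algorithm described above can be sketched as follows. This is a minimal illustration of the classic mistake-driven update rule, assuming binary labels in {0, 1}, zero-initialized weights, and a hypothetical `learning_rate` parameter; it is not taken from the quoted source.

```python
from typing import List, Sequence, Tuple

Example = Tuple[Sequence[float], int]


def predict(weights: Sequence[float], bias: float, x: Sequence[float]) -> int:
    """Linear predictor followed by a step threshold at zero."""
    return 1 if sum(w * xi for w, xi in zip(weights, x)) + bias > 0 else 0


def train_perceptron(examples: List[Example], epochs: int = 10,
                     learning_rate: float = 1.0) -> Tuple[List[float], float]:
    """Process training examples one at a time, updating only on mistakes."""
    weights = [0.0] * len(examples[0][0])
    bias = 0.0
    for _ in range(epochs):
        mistakes = 0
        for x, label in examples:
            error = label - predict(weights, bias, x)  # -1, 0, or +1
            if error != 0:
                # Move the decision boundary toward the misclassified example.
                weights = [w + learning_rate * error * xi for w, xi in zip(weights, x)]
                bias += learning_rate * error
                mistakes += 1
        if mistakes == 0:  # a full pass without errors: stop early
            break
    return weights, bias


# Logical OR is linearly separable, so the updates settle on a separating boundary.
or_data = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]
w, b = train_perceptron(or_data)
print(w, b, [predict(w, b, x) for x, _ in or_data])
```

Each update uses only the current example, which is why the procedure can run in an online setting.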

2003

- (Kanal, 2003) ⇒ Laveen N. Kanal. (2003). “Perceptron.” In: “Encyclopedia of Computer Science, 4th edition.” John Wiley and Sons. ISBN:0-470-86412-5 http://portal.acm.org/citation.cfm?id=1074686

- QUOTE: In 1957 the psychologist Frank Rosenblatt proposed "The Perceptron: a perceiving and recognizing automaton" as a class of artificial nerve nets, embodying aspects of the brain and receptors of biological systems. Fig. 1 shows the network of the Mark 1 Perceptron. Later, Rosenblatt protested that the term perceptron, originally intended as a generic name for a variety of theoretical nerve nets, was actually associated with a very specific piece of hardware (Rosenblatt, 1962). The basic building block of a perceptron is an element that accepts a number of inputs xi, i = 1,..., N, and computes a weighted sum of these inputs where, for each input, its fixed weight ω can be only + 1 or - 1. The sum is then compared with a threshold θ, and an output y is produced that is either 0 or 1, depending on whether or not the sum exceeds the threshold. In other words …

… A perceptron is a signal transmission network consisting of sensory units (S units), association units (A units), and output or response units (R units). The receptor of the perceptron is analogous to the retina of the eye and is made of an array of sensory elements (photocells). Depending on whether or not an S-unit is excited, it produces a binary output. A randomly selected set of retinal cells is connected to the next level of the network, the A units. Each A unit behaves like the basic building block discussed above, where the + 1, - 1 weights for the inputs to each A unit are randomly assigned. The threshold for all A units is the same.

1990

- (Gallant, 1990) ⇒ S. I. Gallant. (1990). "Perceptron-based Learning Algorithms". In: IEEE Transactions on Neural Networks, 1(2). DOI: 10.1109/72.80230

- ABSTRACT: A key task for connectionist research is the development and analysis of learning algorithms. An examination is made of several supervised learning algorithms for single-cell and network models. The heart of these algorithms is the pocket algorithm, a modification of perceptron learning that makes perceptron learning well-behaved with nonseparable training data, even if the data are noisy and contradictory. Features of these algorithms include speed, i.e. algorithms fast enough to handle large sets of training data; network scaling properties, i.e. network methods scale up almost as well as single-cell models when the number of inputs is increased; analytic tractability, i.e. upper bounds on classification error are derivable; online learning, i.e. some variants can learn continually, without referring to previous data; and winner-take-all groups or choice groups, i.e. algorithms can be adapted to select one out of a number of possible classifications. These learning algorithms are suitable for applications in machine learning, pattern recognition, and connectionist expert systems.
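The idea behind the pocket algorithm mentioned in the abstract can be sketched roughly as below. This is a simplified illustration rather than Gallant's exact procedure: it assumes the "pocket" is replaced whenever the current weights make fewer errors on the whole training set, and the names `pocket_train`, `count_errors`, `iterations`, and `seed` are hypothetical.

```python
import random
from typing import List, Sequence, Tuple

Example = Tuple[Sequence[float], int]


def predict(weights: Sequence[float], x: Sequence[float]) -> int:
    """Step-threshold unit; the bias is folded in as a constant 1.0 input."""
    return 1 if sum(w * xi for w, xi in zip(weights, x)) > 0 else 0


def count_errors(weights: Sequence[float], examples: List[Example]) -> int:
    """Number of training examples the given weights misclassify."""
    return sum(1 for x, y in examples if predict(weights, x) != y)


def pocket_train(examples: List[Example], iterations: int = 200,
                 seed: int = 0) -> Tuple[List[float], int]:
    """Run perceptron updates but keep the best weights seen so far 'in the pocket'."""
    rng = random.Random(seed)
    weights = [0.0] * len(examples[0][0])
    pocket, pocket_errors = list(weights), count_errors(weights, examples)
    for _ in range(iterations):
        x, y = rng.choice(examples)
        error = y - predict(weights, x)
        if error != 0:
            # Ordinary perceptron update on the misclassified example.
            weights = [w + error * xi for w, xi in zip(weights, x)]
            errors = count_errors(weights, examples)
            if errors < pocket_errors:
                # Simplifying assumption: replace the pocket only on strict improvement.
                pocket, pocket_errors = list(weights), errors
    return pocket, pocket_errors


# XOR is not linearly separable, so plain perceptron updates never settle;
# the pocket still returns the best weight vector encountered.
xor_data = [((0, 0, 1.0), 0), ((0, 1, 1.0), 1), ((1, 0, 1.0), 1), ((1, 1, 1.0), 0)]
initial_errors = count_errors([0.0, 0.0, 0.0], xor_data)
best_weights, best_errors = pocket_train(xor_data)
print(best_weights, best_errors)
```

Because the pocket weights are only replaced on improvement, the returned error count can never exceed that of the starting weights, even when the data are nonseparable.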

1969

- (Minsky & Papert, 1969) ⇒ Marvin L. Minsky, and S. A. Papert. (1969). “Perceptrons.” MIT Press.

1962

- (Novikoff, 1962) ⇒ A. B. Novikoff. (1962). “On Convergence Proofs on Perceptrons.” In: Symposium on the Mathematical Theory of Automata, 12.

1958

- (Rosenblatt, 1958) ⇒ Frank Rosenblatt. (1958). “The Perceptron: A Probabilistic Model for Information Storage and Organization in the Brain.” Psychological Review, 65(6).