OpenAI Codex LLM Model

An OpenAI Codex LLM Model is a text-to-code model.

- Example(s):

- Counter-Example(s):

- See: OpenAI, GitHub Copilot, Autocompletion, Fine-Tuned DNN Model.

References

2023

- https://platform.openai.com/docs/models/codex

- QUOTE: The Codex models are now deprecated. They were descendants of our GPT-3 models that would understand and generate code. Their training data contains both natural language and billions of lines of public code from GitHub.

2023

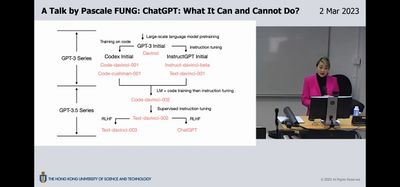

- Pascale Fung. (2023). “ChatGPT: What It Can and Cannot Do?” Presentation.

2023

- (Wikipedia, 2023) ⇒ https://en.wikipedia.org/wiki/OpenAI_Codex Retrieved:2023-2-16.

- OpenAI Codex is an artificial intelligence model developed by OpenAI. It parses natural language and generates code in response. It is used to power GitHub Copilot, a programming autocompletion tool developed for select IDEs, like Visual Studio Code and Neovim. Codex is a descendant of OpenAI's GPT-3 model, fine-tuned for use in programming applications.

OpenAI has released an API for Codex in closed beta.

2022

- https://platform.openai.com/docs/models/codex

- QUOTE: The Codex models are descendants of our GPT-3 models that can understand and generate code. Their training data contains both natural language and billions of lines of public code from GitHub.

They’re most capable in Python and proficient in over a dozen languages including JavaScript, Go, Perl, PHP, Ruby, Swift, TypeScript, SQL, and even Shell.

- We currently offer two Codex models:

| Latest model | Description | Max request | Training data |
| --- | --- | --- | --- |
| code-davinci-002 | Most capable Codex model. Particularly good at translating natural language to code. In addition to completing code, also supports inserting completions within code. | 8,000 tokens | Up to Jun 2021 |
| code-cushman-001 | Almost as capable as Davinci Codex, but slightly faster. This speed advantage may make it preferable for real-time applications. | | |


2022

- (OpenAI Blog, 2022) ⇒ "Powering Next Generation Applications with OpenAI Codex." 2022-May-24

- QUOTE: Codex is now powering 70 different applications across a variety of use cases through the OpenAI API.

- OpenAI Codex, a natural language-to-code system based on GPT-3, helps turn simple English instructions into over a dozen popular coding languages. Codex was released last August through our API and is the principal building block of GitHub Copilot.

2022

- (Finnie-Ansley et al., 2022) ⇒ James Finnie-Ansley, Paul Denny, Brett A. Becker, Andrew Luxton-Reilly, and James Prather. (2022). “The Robots Are Coming: Exploring the Implications of OpenAI Codex on Introductory Programming.” In: Australasian Computing Education Conference, pp. 10-19.

- ABSTRACT: Recent advances in artificial intelligence have been driven by an exponential growth in digitised data. Natural language processing, in particular, has been transformed by machine learning models such as OpenAI’s GPT-3 which generates human-like text so realistic that its developers have warned of the dangers of its misuse. In recent months OpenAI released Codex, a new deep learning model trained on Python code from more than 50 million GitHub repositories. Provided with a natural language description of a programming problem as input, Codex generates solution code as output. It can also explain (in English) input code, translate code between programming languages, and more. In this work, we explore how Codex performs on typical introductory programming problems. We report its performance on real questions taken from introductory programming exams and compare it to results from students who took these same exams under normal conditions, demonstrating that Codex outscores most students. We then explore how Codex handles subtle variations in problem wording using several published variants of the well-known “Rainfall Problem” along with one unpublished variant we have used in our teaching. We find the model passes many test cases for all variants. We also explore how much variation there is in the Codex generated solutions, observing that an identical input prompt frequently leads to very different solutions in terms of algorithmic approach and code length. Finally, we discuss the implications that such technology will have for computing education as it continues to evolve, including both challenges and opportunities.

- KEYWORDS: academic integrity; AI; artificial intelligence; code generation; code writing; Codex; copilot; CS1; deep learning; introductory programming; GitHub; GPT-3; machine learning; neural networks; novice programming; OpenAI

- QUOTES:

- ... In 2021, OpenAI released Codex, a descendent of GPT-3 that was trained on an additional 159GB of Python code from >50M GitHub repositories [5]. Codex is “proficient” in over a dozen programming languages including JavaScript, Go, Perl, PHP, Ruby, Swift, TypeScript, and Shell, but is “most capable” in Python [42] ...
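For readers unfamiliar with the “Rainfall Problem” mentioned in the abstract above, the following is a minimal sketch of one common formulation: average the non-negative readings that appear before a sentinel value. The sentinel `99999`, the function name, and the choice to return `0.0` when there are no valid readings are assumptions made for illustration; the published variants differ in these details, and this is not code from the paper or from Codex.

```python
def rainfall_average(readings, sentinel=99999):
    """Average the non-negative readings that occur before the sentinel.

    Assumed formulation (illustrative only): negative readings are
    ignored, input after the sentinel is ignored, and an input with no
    valid readings yields 0.0.
    """
    total = 0
    count = 0
    for value in readings:
        if value == sentinel:
            break          # stop at the end-of-data marker
        if value < 0:
            continue       # skip invalid (negative) readings
        total += value
        count += 1
    return total / count if count else 0.0


if __name__ == "__main__":
    print(rainfall_average([1, 2, -5, 3, 99999, 100]))
```

Small changes in wording (for example, whether negatives are rejected or whether an empty input is an error) change the expected behaviour, which is why the problem is useful for probing sensitivity to prompt variations.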

2021

- (Chen et al., 2021) ⇒ Mark Chen, Jerry Tworek, Heewoo Jun, Qiming Yuan, Henrique Ponde de Oliveira Pinto, Jared Kaplan, Harri Edwards, Yuri Burda, Nicholas Joseph, Greg Brockman, Alex Ray, Raul Puri, Gretchen Krueger, Michael Petrov, Heidy Khlaaf, Girish Sastry, Pamela Mishkin, Brooke Chan, Scott Gray, Nick Ryder, Mikhail Pavlov, Alethea Power, Lukasz Kaiser, Mohammad Bavarian, Clemens Winter, Philippe Tillet, Felipe Petroski Such, Dave Cummings, Matthias Plappert, Fotios Chantzis, Elizabeth Barnes, Ariel Herbert-Voss, William Hebgen Guss, Alex Nichol, Alex Paino, Nikolas Tezak, Jie Tang, Igor Babuschkin, Suchir Balaji, Shantanu Jain, William Saunders, Christopher Hesse, Andrew N. Carr, Jan Leike, Josh Achiam, Vedant Misra, Evan Morikawa, Alec Radford, Matthew Knight, Miles Brundage, Mira Murati, Katie Mayer, Peter Welinder, Bob McGrew, Dario Amodei, Sam McCandlish, Ilya Sutskever, and Wojciech Zaremba. (2021). “Evaluating Large Language Models Trained on Code.” arXiv preprint arXiv:2107.03374. doi:10.48550/arXiv.2107.03374.

- ABSTRACT: We introduce Codex, a GPT language model fine-tuned on publicly available code from GitHub, and study its Python code-writing capabilities. A distinct production version of Codex powers GitHub Copilot. On HumanEval, a new evaluation set we release to measure functional correctness for synthesizing programs from docstrings, our model solves 28.8% of the problems, while GPT-3 solves 0% and GPT-J solves 11.4%. Furthermore, we find that repeated sampling from the model is a surprisingly effective strategy for producing working solutions to difficult prompts. Using this method, we solve 70.2% of our problems with 100 samples per problem. Careful investigation of our model reveals its limitations, including difficulty with docstrings describing long chains of operations and with binding operations to variables. Finally, we discuss the potential broader impacts of deploying powerful code generation technologies, covering safety, security, and economics.
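The single-sample and 100-sample solve rates quoted in the abstract are instances of the pass@k metric. The sketch below shows the unbiased per-problem estimator defined in Chen et al. (2021), 1 − C(n−c, k) / C(n, k), assuming `n` samples were generated for a problem and `c` of them passed its unit tests. The function names and the sample counts are illustrative assumptions, not the authors' released code.

```python
from math import comb


def pass_at_k(n, c, k):
    """Estimate the probability that at least one of k samples is correct.

    n: total samples generated for the problem
    c: number of those samples that passed the unit tests
    k: sample budget being evaluated (k <= n)
    """
    if k > n:
        raise ValueError("k must not exceed n")
    if n - c < k:
        # Fewer than k incorrect samples: every size-k subset contains a pass.
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)


def mean_pass_at_k(results, k):
    """Average pass@k over problems; results is a list of (n, c) pairs."""
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)


if __name__ == "__main__":
    # Hypothetical counts for three problems, 10 samples each.
    results = [(10, 5, ), (10, 0), (10, 10)]
    print(mean_pass_at_k(results, k=1))
```

Averaging the per-problem estimates over a benchmark gives the reported score; larger `k` rewards models whose correct solutions are rare but present among many samples.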