2018 RecurrentNeuralNetworkAttention

- (Brown et al., 2018) ⇒ Andy Brown, Aaron Tuor, Brian Hutchinson, and Nicole Nichols. (2018). “Recurrent Neural Network Attention Mechanisms for Interpretable System Log Anomaly Detection.” In: Proceedings of the First Workshop on Machine Learning for Computing Systems (MLCS'18). ISBN:978-1-4503-5865-1 doi:10.1145/3217871.3217872

Subject Headings: Computing Methodologies ⇒ Anomaly Detection; Online Learning Settings; Feature Selection; Unsupervised Learning; Neural Networks; Machine Learning Algorithms;

Notes

Cited By

- http://scholar.google.com/scholar?q=%222018%22++Recurrent+Neural+Network+Attention+Mechanisms+for+Interpretable+System+Log+Anomaly+Detection

- http://dl.acm.org/citation.cfm?id=3217871.3217872&preflayout=flat#citedby

Quotes

Author Keywords

- Anomaly Detection; Attention; Recurrent Neural Networks; Interpretable Machine Learning; Online Training; System Log Analysis

Abstract

Deep learning has recently demonstrated state-of-the-art performance on key tasks related to the maintenance of computer systems, such as intrusion detection, denial of service attack detection, hardware and software system failures, and malware detection. In these contexts, model interpretability is vital for administrators and analysts to trust and act on the automated analysis of machine learning models. Deep learning methods have been criticized as black box oracles which allow limited insight into decision factors. In this work we seek to bridge the gap between the impressive performance of deep learning models and the need for interpretable model introspection. To this end we present recurrent neural network (RNN) language models augmented with attention for anomaly detection in system logs. Our methods are generally applicable to any computer system and logging source. By incorporating attention variants into our RNN language models we create opportunities for model introspection and analysis without sacrificing state-of-the-art performance. We demonstrate model performance and illustrate model interpretability on an intrusion detection task using the Los Alamos National Laboratory (LANL) cyber security dataset, reporting upward of 0.99 area under the receiver operator characteristic curve despite being trained only on a single day's worth of data.

1. Introduction

2. Related Work

...

In recent work [4, 16, 22], researchers have augmented LSTM language models with attention mechanisms in order to add capacity for modeling long term syntactic dependencies. Yogatama et al. [22] characterize attention as a differentiable random access memory. They compare attention language models with differentiable stack based memory [6] (which provides a bias for hierarchical structure), demonstrating the superiority of stack based memory on a verb agreement task with multiple attractors. Daniluk et al. [4] explore three additive attention mechanisms [2] with successive partitioning of the output of the LSTM; splitting the output into separate key, value, and prediction vectors performed best, likely due to removing the need for a single vector to encode information for multiple steps in the computation. In contrast we augment our language models with dot product attention [11, 18], but also use separate vectors for the components of our attention mechanisms.

…

3. Methods

3.1 Preliminaries

3.2 Cyber Anomaly Language Models

3.3 Attention

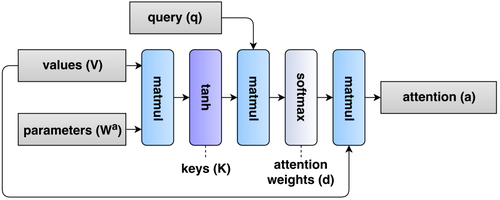

In this work we use dot product attention (Figure 3), wherein an “attention vector” $\mathbf{a}$ is generated from three values: 1) a key matrix $\mathbf{K}$, 2) a value matrix $\mathbf{V}$, and 3) a query vector $\mathbf{q}$. In this formulation, keys are a function of the value matrix:

| [math]\displaystyle{ \mathbf{K} = \tanh\left(\mathbf{VW}^a\right) }[/math] | (5) |

parameterized by $\mathbf{W}^a$. The importance of each timestep is determined by the magnitude of the dot product of each key vector with the query vector $\mathbf{q} \in \mathbb{R}^{L_a}$ for some attention dimension hyperparameter, $L_a$. These magnitudes determine the weights, $\mathbf{d}$, on the weighted sum of value vectors, $\mathbf{a}$:

| [math]\displaystyle{ \mathbf{d} = \mathrm{softmax}\left(\mathbf{qK}^T\right) }[/math] | (6) |

| [math]\displaystyle{ \mathbf{a} = \mathbf{dV} }[/math] | (7) |
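The computation in Equations (5) to (7) can be illustrated with a minimal sketch in plain Python. This is not the authors' implementation: the function names, the list-of-rows matrix representation, and the toy values for $\mathbf{V}$, $\mathbf{W}^a$, and $\mathbf{q}$ are all assumptions made for illustration. The shapes assumed are $\mathbf{V}$ as $T \times L$ (one row per timestep), $\mathbf{W}^a$ as $L \times L_a$, and $\mathbf{q}$ of length $L_a$.

```python
import math


def matmul(A, B):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot_product_attention(V, W_a, q):
    """Return (d, a) for values V (T x L), projection W_a (L x L_a), query q (L_a)."""
    # Eq. (5): keys are a tanh-squashed linear projection of the values.
    K = [[math.tanh(x) for x in row] for row in matmul(V, W_a)]
    # Eq. (6): one dot-product score per timestep, normalized into weights d.
    d = softmax([sum(qi * ki for qi, ki in zip(q, key)) for key in K])
    # Eq. (7): the attention vector is the d-weighted sum of the value rows.
    a = [sum(w * row[j] for w, row in zip(d, V)) for j in range(len(V[0]))]
    return d, a


# Toy example (made-up values): T=3 timesteps, L=2 hidden units, L_a=2.
V = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
W_a = [[1.0, 0.0], [0.0, 1.0]]
q = [1.0, 1.0]
d, a = dot_product_attention(V, W_a, q)
```

In this toy case the third timestep has the key most aligned with the query, so it receives the largest weight in `d`. Inspecting `d` per timestep is the kind of introspection the attention weights are meant to enable.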

3.4 Online Training

4. Experiments

4.1 Data

4.2 Experimental Setup

4.3 Metrics and Score Normalization

4.4 Results

5. Analysis

5.1 Global Behavior

5.2 Case Studies

6. Conclusions

Acknowledgments

References


| | Author | volume | Date Value | title | type | journal | titleUrl | doi | note | year |
|---|---|---|---|---|---|---|---|---|---|---|
| 2018 RecurrentNeuralNetworkAttention | Andy Brown, Aaron Tuor, Brian Hutchinson, Nicole Nichols | | | Recurrent Neural Network Attention Mechanisms for Interpretable System Log Anomaly Detection | | | | 10.1145/3217871.3217872 | | 2018 |