Locally Weighted Regression Algorithm

A Locally Weighted Regression (LWR) Algorithm is a machine learning regression algorithm that is based on locally weighted learning.

- Context:

- It can be classified as a Lazy Memory-based Regression Algorithm.

- Example(s):

- Counter-Example(s):

- See: Regression Algorithm, Locally Weighted Learning, Memory-based Learning, Least Commitment Learning, Forward Model, Inverse Model, Linear Quadratic Regulation, Shifting Setpoint Algorithm, Dynamic Programming.

References

2017a

- (Ting et al., 2017) ⇒ Jo-Anne Ting, Franziska Meier, Sethu Vijayakumar, and Stefan Schaal (2017) "Locally Weighted Regression for Control". In: Sammut & Webb. (2017).

- QUOTE: Locally weighted regression refers to supervised learning of continuous functions (otherwise known as function approximation or regression) by means of spatially localized algorithms, which are often discussed in the context of kernel regression, nearest neighbor methods, or lazy learning (Atkeson et al. 1997). Most regression algorithms are global learning systems (...).

In contrast, local learning systems conceptually split up the global learning problem into multiple simpler learning problems. Traditional locally weighted regression approaches achieve this by dividing up the cost function into multiple independent local cost functions, (...), resulting in K (independent) local model learning problems. A different strategy for local learning starts out with the global objective (...) and reformulates it to capture the idea of local models that cooperate to generate a (global) function fit. This is achieved by assuming there are K feature functions [math]\displaystyle{ \phi_k }[/math], such that the kth feature function [math]\displaystyle{ \phi_k(X_i)=w_{k,i}X_i }[/math] (...)

In this setting, local models are initially coupled and approximations are found to decouple the learning of the local models' parameters.

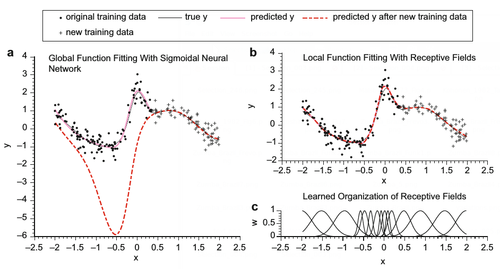

Figure 1 illustrates why locally weighted regression methods are often favored over global methods when it comes to learning from incrementally arriving data, especially when dealing with nonstationary input distributions. The figure shows the division of the training data into two sets: the “original training data” and the “new training data” (in dots and crosses, respectively).

Locally Weighted Regression for Control, Fig. 1. Function approximation results for the function [math]\displaystyle{ y = \sin(2x) + 2 \exp(-16x^2) + N(0, 0.16) }[/math] with (a) a sigmoidal neural network, (b) a locally weighted regression algorithm (note that the data traces “true y,” “predicted y,” and “predicted y after new training data” largely coincide), and (c) the organization of the (Gaussian) kernels of (b) after training. See Schaal and Atkeson 1998 for more details.
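The "K (independent) local model learning problems" described in the quote above can be illustrated with a minimal sketch. The quote elides the actual cost functions, so everything specific here is an assumption made for illustration: each local model is linear, its cost is a Gaussian-weighted squared error, the kernel centers and width are fixed in advance, and predictions are blended by normalized kernel weights. The names `fit_local_models`, `predict`, `centers`, and `width` are hypothetical.

```python
import numpy as np

def fit_local_models(x, y, centers, width):
    """Fit one weighted linear model per kernel center, each independently."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    A = np.column_stack([np.ones_like(x), x])  # bias and slope features
    betas = []
    for c in centers:
        # Assumed Gaussian weights w_{k,i} of sample i under local model k.
        w = np.exp(-0.5 * ((x - c) / width) ** 2)
        sw = np.sqrt(w)
        # Scaling rows by sqrt(w) makes ordinary least squares minimize
        # sum_i w_{k,i} * (y_i - A_i @ beta_k)^2 for this model only.
        beta, *_ = np.linalg.lstsq(A * sw[:, None], y * sw, rcond=None)
        betas.append(beta)
    return np.array(betas)  # shape (K, 2): intercept and slope per model

def predict(x_query, centers, width, betas):
    """Blend the K local predictions with normalized kernel weights."""
    x_query = np.atleast_1d(np.asarray(x_query, dtype=float))
    centers = np.asarray(centers, dtype=float)
    w = np.exp(-0.5 * ((x_query[:, None] - centers[None, :]) / width) ** 2)
    local = betas[:, 0][None, :] + betas[:, 1][None, :] * x_query[:, None]
    return (w * local).sum(axis=1) / w.sum(axis=1)
```

Because each local fit depends only on its own weighted data, new samples in one region mostly change the models whose kernels cover that region, which is one way to read the incremental-learning behavior the figure describes.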

2017b

- https://www.cs.cmu.edu/afs/cs/project/jair/pub/volume4/cohn96a-html/node7.html

- QUOTE: Model-based methods, such as neural networks and the mixture of Gaussians, use the data to build a parameterized model. After training, the model is used for predictions and the data are generally discarded. In contrast, "memory-based" methods are non-parametric approaches that explicitly retain the training data, and use it each time a prediction needs to be made. Locally weighted regression (LWR) is a memory-based method that performs a regression around a point of interest using only training data that are "local" to that point. One recent study demonstrated that LWR was suitable for real-time control by constructing an LWR-based system that learned a difficult juggling task [Schaal & Atkeson 1994]. ...

1997a

- (Atkeson et al., 1997) ⇒ Christopher G. Atkeson, Andrew W. Moore, and Stefan Schaal. (1997). "Locally weighted learning".

- QUOTE: In locally weighted regression (LWR) local models are fit to nearby data. As described later in this section, this can be derived by either weighting the training criterion for the local model (in the general case) or by directly weighting the data (in the case that the local model is linear in the unknown parameters). LWR is derived from standard regression procedures for global models.
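The case mentioned in the quote, where the local model is linear in its parameters and the data are weighted directly, can be sketched as follows. This is an illustrative sketch under stated assumptions, not the formulation from the cited paper: the Gaussian kernel, the `bandwidth` parameter, and the function name `lwr_predict` are all hypothetical choices.

```python
import numpy as np

def lwr_predict(X, y, x_query, bandwidth=0.3):
    """Predict the target at x_query with a locally weighted linear fit.

    X: (n, d) training inputs, y: (n,) targets, x_query: (d,) query point.
    `bandwidth` is an assumed Gaussian kernel width, not a value from the source.
    """
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    x_query = np.asarray(x_query, dtype=float)

    # Assumed Gaussian kernel: training points near the query get larger weights.
    sq_dist = np.sum((X - x_query) ** 2, axis=1)
    w = np.exp(-0.5 * sq_dist / bandwidth ** 2)

    # Weight the data directly: scaling each row by sqrt(w_i) turns ordinary
    # least squares into minimizing sum_i w_i * (y_i - [1, x_i] @ beta)^2.
    A = np.hstack([np.ones((X.shape[0], 1)), X])
    sw = np.sqrt(w)
    beta, *_ = np.linalg.lstsq(A * sw[:, None], y * sw, rcond=None)

    # Evaluate the local linear model at the query point.
    return float(np.concatenate([[1.0], x_query]) @ beta)
```

A new fit is computed for every query from the retained training data, which is what makes this a lazy, memory-based procedure; for example, `lwr_predict(X, y, [0.4], bandwidth=0.1)` would fit a line dominated by samples within roughly 0.1 of 0.4.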

1997b

- (Mitchell, 1997) ⇒ Tom M. Mitchell. (1997). "Machine Learning." McGraw-Hill.

- QUOTE: Section 8.6 Remarks on Lazy and Eager Learning: In this chapter we considered three lazy learning methods: the k-Nearest Neighbor algorithm, locally weighted regression, and case-based reasoning.