2019 BERTPreTrainingofDeepBidirectio

- (Devlin et al., 2019) ⇒ Jacob Devlin, Ming-Wei Chang, Kenton Lee, and Kristina Toutanova. (2019). “BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding.” In: Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (NAACL-HLT 2019). DOI:10.18653/v1/N19-1423. arXiv:1810.04805

Subject Headings: Text Data Encoding, Bi-Directional Language Model, BERT System, BERT Language Representation Model, BERT Model Instance, Transformer-based LM, Masked Language Modeling.

Notes

- BERT Source Code: http://goo.gl/language/bert

- Pre-print Version(s): Devlin et al., 2018.

Cited By

- Google Scholar: ~ 1392 Citations

- Semantic Scholar: ~ 1,367 Citations

2020

- (Diao et al., 2020) ⇒ Shizhe Diao, Jiaxin Bai, Yan Song, Tong Zhang, and Yonggang Wang. (2020). “ZEN: Pre-training Chinese Text Encoder Enhanced by N-gram Representations.” In: Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing: Findings.

- QUOTE: Pre-trained text encoders (Peters et al., 2018b; Devlin et al., 2018; Radford et al., 2018, 2019; Yang et al., 2019) have drawn much attention in natural language processing (NLP), because state-of-the-art performance can be obtained for many NLP tasks using such encoders. In general, these encoders are implemented by training a deep neural model on large unlabeled corpora.

Quotes

Abstract

We introduce a new language representation model called BERT, which stands for Bidirectional Encoder Representations from Transformers. Unlike recent language representation models, BERT is designed to pre-train deep bidirectional representations by jointly conditioning on both left and right context in all layers. As a result, the pre-trained BERT representations can be fine-tuned with just one additional output layer to create state-of-the-art models for a wide range of tasks, such as question answering and language inference, without substantial task-specific architecture modifications.

BERT is conceptually simple and empirically powerful. It obtains new state-of-the-art results on eleven natural language processing tasks, including pushing the GLUE benchmark to 80.4% (7.6% absolute improvement), MultiNLI accuracy to 86.7 (5.6% absolute improvement) and the SQuAD v1.1 question answering Test F1 to 93.2 (1.5% absolute improvement), outperforming human performance by 2.0%.

1 Introduction

Language model pre-training has been shown to be effective for improving many natural language processing tasks (Dai and Le, 2015; Peters et al., 2018a; Radford et al., 2018; Howard and Ruder, 2018). These include sentence-level tasks such as natural language inference (Bowman et al., 2015; Williams et al., 2018) and paraphrasing (Dolan and Brockett, 2005), which aim to predict the relationships between sentences by analyzing them holistically, as well as token-level tasks such as named entity recognition and question answering, where models are required to produce fine-grained output at the token level (Tjong Kim Sang and De Meulder, 2003; Rajpurkar et al., 2016).

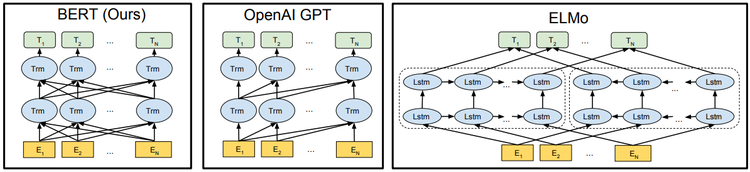

There are two existing strategies for applying pre-trained language representations to downstream tasks: feature-based and fine-tuning. The feature-based approach, such as ELMo (Peters et al., 2018a), uses task-specific architectures that include the pre-trained representations as additional features. The fine-tuning approach, such as the Generative Pre-trained Transformer (OpenAI GPT) (Radford et al., 2018), introduces minimal task-specific parameters, and is trained on the downstream tasks by simply fine-tuning the pretrained parameters.

The two approaches share the same objective function during pre-training, where they use unidirectional language models to learn general language representations.

We argue that current techniques restrict the power of the pre-trained representations, especially for the fine-tuning approaches. The major limitation is that standard language models are unidirectional, and this limits the choice of architectures that can be used during pre-training. For example, in OpenAI GPT, the authors use a left-to-right architecture, where every token can only attend to previous tokens in the self-attention layers of the Transformer (Vaswani et al., 2017). Such restrictions are sub-optimal for sentence-level tasks, and could be very harmful when applying fine-tuning based approaches to token-level tasks such as question answering, where it is crucial to incorporate context from both directions.

In this paper, we improve the fine-tuning based approaches by proposing BERT: Bidirectional Encoder Representations from Transformers. BERT alleviates the previously mentioned unidirectional constraint by using a “masked language model” (MLM) pre-training objective, inspired by the Cloze task (Taylor, 1953). The masked language model randomly masks some of the tokens from the input, and the objective is to predict the original vocabulary id of the masked word based only on its context. Unlike left-to-right language model pre-training, the MLM objective enables the representation to fuse the left and the right context, which allows us to pre-train a deep bidirectional Transformer. In addition to the masked language model, we also use a “next sentence prediction” task that jointly pre-trains text-pair representations. The contributions of our paper are as follows:

- We demonstrate the importance of bidirectional pre-training for language representations. Unlike Radford et al. (2018), which uses unidirectional language models for pretraining, BERT uses masked language models to enable pre-trained deep bidirectional representations. This is also in contrast to Peters et al. (2018a), which uses a shallow concatenation of independently trained left-to-right and right-to-left LMs.

- We show that pre-trained representations reduce the needs for many heavily-engineered task-specific architectures. BERT is the first fine-tuning based representation model that achieves state-of-the-art performance on a large suite of sentence-level and token-level tasks, outperforming many systems with task-specific architectures.

- BERT advances the state of the art for eleven NLP tasks. The code and pre-trained models are available at https://github.com/google-research/bert.

2 Related Work

There is a long history of pre-training general language representations, and we briefly review the most popular approaches in this section.

2.1 Feature-based Approaches

Learning widely applicable representations of words has been an active area of research for decades, including non-neural (Brown et al., 1992; Ando and Zhang, 2005; Blitzer et al., 2006) and neural (Mikolov et al., 2013; Pennington et al., 2014) methods. Pretrained word embeddings are an integral part of modern NLP systems, offering significant improvements over embeddings learned from scratch (Turian et al., 2010). To pretrain word embedding vectors, left-to-right language modeling objectives have been used (Mnih and Hinton, 2009), as well as objectives to discriminate correct from incorrect words in left and right context (Mikolov et al., 2013).

These approaches have been generalized to coarser granularities, such as sentence embeddings (Kiros et al., 2015; Logeswaran and Lee, 2018) or paragraph embeddings (Le and Mikolov, 2014). To train sentence representations, prior work has used objectives to rank candidate next sentences (Jernite et al., 2017; Logeswaran and Lee, 2018), left-to-right generation of next sentence words given a representation of the previous sentence (Kiros et al., 2015), or denoising autoencoder derived objectives (Hill et al., 2016).

ELMo and its predecessor (Peters et al., 2017, 2018a) generalize traditional word embedding research along a different dimension. They extract context-sensitive features from a left-to-right and a right-to-left language model. The contextual representation of each token is the concatenation of the left-to-right and right-to-left representations. When integrating contextual word embeddings with existing task-specific architectures, ELMo advances the state of the art for several major NLP benchmarks (Peters et al., 2018a) including question answering (Rajpurkar et al., 2016), sentiment analysis (Socher et al., 2013), and named entity recognition (Tjong Kim Sang and De Meulder, 2003). Melamud et al. (2016) proposed learning contextual representations through a task to predict a single word from both left and right context using LSTMs. Similar to ELMo, their model is feature-based and not deeply bidirectional. Fedus et al. (2018) shows that the cloze task can be used to improve the robustness of text generation models.

2.2 Fine-tuning Approaches

As with the feature-based approaches, the first works in this direction only pre-trained word embedding parameters from unlabeled text (Collobert and Weston, 2008).

More recently, sentence or document encoders which produce contextual token representations have been pre-trained from unlabeled text and fine-tuned for a supervised downstream task (Dai and Le, 2015; Howard and Ruder 2018; Radford et al., 2018). The advantage of these approaches is that few parameters need to be learned from scratch. At least partly due to this advantage, OpenAI GPT (Radford et al., 2018) achieved previously state-of-the-art results on many sentence-level tasks from the GLUE benchmark (Wang et al., 2018a). Left-to-right language modeling and auto-encoder objectives have been used for pre-training such models (Howard and Ruder, 2018; Radford et al., 2018; Dai and Le, 2015).

2.3 Transfer Learning from Supervised Data

There has also been work showing effective transfer from supervised tasks with large datasets, such as natural language inference (Conneau et al., 2017) and machine translation (McCann et al., 2017). Computer vision research has also demonstrated the importance of transfer learning from large pre-trained models, where an effective recipe is to fine-tune models pre-trained with ImageNet (Deng et al., 2009; Yosinski et al., 2014).

3 BERT

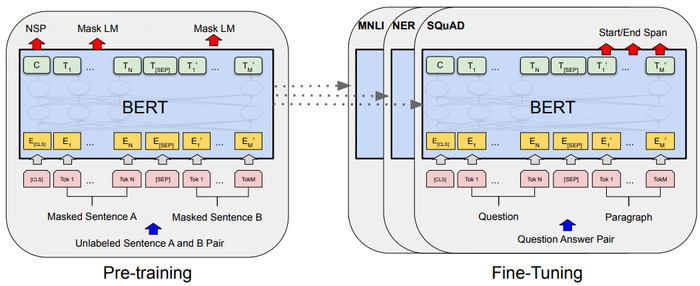

We introduce BERT and its detailed implementation in this section. There are two steps in our framework: pre-training and fine-tuning. During pre-training, the model is trained on unlabeled data over different pre-training tasks. For fine-tuning, the BERT model is first initialized with the pre-trained parameters, and all of the parameters are fine-tuned using labeled data from the downstream tasks. Each downstream task has separate fine-tuned models, even though they are initialized with the same pre-trained parameters. The question-answering example in Figure 1 will serve as a running example for this section.

A distinctive feature of BERT is its unified architecture across different tasks. There is minimal difference between the pre-trained architecture and the final downstream architecture.

Model Architecture: BERT’s model architecture is a multi-layer bidirectional Transformer encoder based on the original implementation described in Vaswani et al. (2017) and released in the tensor2tensor library[1]. Because the use of Transformers has become common and our implementation is almost identical to the original, we will omit an exhaustive background description of the model architecture and refer readers to Vaswani et al. (2017) as well as excellent guides such as “The Annotated Transformer”[2].

In this work, we denote the number of layers (i.e., Transformer blocks) as L, the hidden size as H, and the number of self-attention heads as A [3]. We primarily report results on two model sizes: BERTBASE (L=12, H=768, A=12, Total Parameters=110M) and BERTLARGE (L=24, H=1024, A=16, Total Parameters=340M).
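The quoted parameter totals can be roughly reproduced from L and H. The following is a back-of-the-envelope sketch, not the released implementation: it assumes a feed-forward size of 4H, 512 learned position embeddings, two segment embeddings, the 30,000-token vocabulary, and one dense pooling layer over [CLS]. These layout details are assumptions of the sketch rather than statements from the text above. The number of heads A does not change the count, since the heads split H between them.

```python
def approx_bert_params(num_layers, hidden, vocab=30_000, max_pos=512, segments=2):
    """Rough parameter count for a BERT-style encoder (assumed layout, see lead-in)."""
    ffn = 4 * hidden                 # assumed feed-forward (intermediate) size
    layer_norm = 2 * hidden          # scale and bias of one layer norm
    embeddings = (vocab + max_pos + segments) * hidden + layer_norm
    attention = 4 * (hidden * hidden + hidden)   # Q, K, V and output projections
    feed_forward = (hidden * ffn + ffn) + (ffn * hidden + hidden)
    per_layer = attention + feed_forward + 2 * layer_norm
    pooler = hidden * hidden + hidden            # assumed dense layer over [CLS]
    return embeddings + num_layers * per_layer + pooler


base = approx_bert_params(num_layers=12, hidden=768)
large = approx_bert_params(num_layers=24, hidden=1024)
print(f"BERT-Base  ~ {base / 1e6:.0f}M parameters")
print(f"BERT-Large ~ {large / 1e6:.0f}M parameters")
```

Under these assumptions the estimates land within a few percent of the reported 110M and 340M; the exact figures depend on details such as the precise vocabulary size.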

BERTBASE was chosen to have the same model size as OpenAI GPT for comparison purposes. Critically, however, the BERT Transformer uses bidirectional self-attention, while the GPT Transformer uses constrained self-attention where every token can only attend to context to its left [4].
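The difference between the two attention patterns can be illustrated with a toy mask. This is only a sketch; the function name and the convention that 1 means "may attend" are assumptions made for illustration.

```python
def attention_mask(seq_len, bidirectional):
    """Return mask[i][j] = 1 if position i may attend to position j, else 0."""
    return [
        # Left-to-right: position i sees itself and everything to its left.
        [1 if bidirectional or j <= i else 0 for j in range(seq_len)]
        for i in range(seq_len)
    ]


bert_mask = attention_mask(4, bidirectional=True)   # every token sees every token
gpt_mask = attention_mask(4, bidirectional=False)   # lower-triangular mask
for row in gpt_mask:
    print(row)
```

With the bidirectional mask, the representation of each token at every layer can depend on tokens on both sides; with the constrained mask, it can depend only on the token itself and those to its left.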

Input/Output Representations: To make BERT handle a variety of down-stream tasks, our input representation is able to unambiguously represent both a single sentence and a pair of sentences (e.g., [math]\displaystyle{ \langle \text{Question, Answer}\rangle }[/math]) in one token sequence. Throughout this work, a “sentence” can be an arbitrary span of contiguous text, rather than an actual linguistic sentence. A “sequence” refers to the input token sequence to BERT, which may be a single sentence or two sentences packed together.

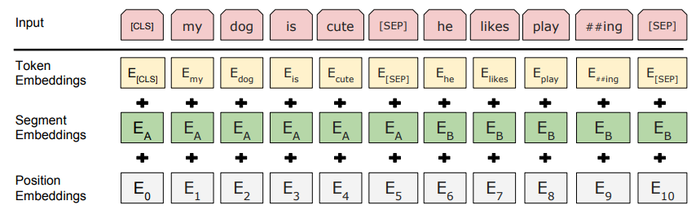

We use WordPiece embeddings (Wu et al., 2016) with a 30,000 token vocabulary. The first token of every sequence is always a special classification token ([CLS]). The final hidden state corresponding to this token is used as the aggregate sequence representation for classification tasks. Sentence pairs are packed together into a single sequence. We differentiate the sentences in two ways. First, we separate them with a special token ([SEP]). Second, we add a learned embedding to every token indicating whether it belongs to sentence A or sentence B. As shown in Figure 1, we denote input embedding as E, the final hidden vector of the special [CLS] token as [math]\displaystyle{ C \in \mathbb{R}^H }[/math], and the final hidden vector for the ith input token as [math]\displaystyle{ T_i \in \mathbb{R}^H }[/math].

For a given token, its input representation is constructed by summing the corresponding token, segment, and position embeddings. A visualization of this construction can be seen in Figure 2.
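The packing and summation described above can be sketched in a few lines. This is a toy illustration with random embedding tables and hidden size 4; the helper names are hypothetical, and placing a closing [SEP] after sentence B (assigned to segment B) is an assumption of the sketch rather than something stated in the text.

```python
import random


def pack_pair(tokens_a, tokens_b=None):
    """Build the token sequence and segment ids for one or two sentences."""
    tokens = ["[CLS]"] + tokens_a + ["[SEP]"]
    segment_ids = [0] * len(tokens)            # sentence A (and its [SEP])
    if tokens_b:
        tokens += tokens_b + ["[SEP]"]
        segment_ids += [1] * (len(tokens_b) + 1)   # sentence B (and its [SEP])
    return tokens, segment_ids


def input_representation(tokens, segment_ids, tok_emb, seg_emb, pos_emb):
    """Sum token, segment and position embeddings element-wise per position."""
    return [
        [t + s + p for t, s, p in zip(tok_emb[tok], seg_emb[seg], pos_emb[pos])]
        for pos, (tok, seg) in enumerate(zip(tokens, segment_ids))
    ]


# Toy embedding tables; in a real model these are learned, not random.
H = 4
rng = random.Random(0)


def random_vector():
    return [rng.uniform(-1, 1) for _ in range(H)]


tokens, segment_ids = pack_pair(["my", "dog"], ["he", "barks"])
tok_emb = {tok: random_vector() for tok in sorted(set(tokens))}
seg_emb = [random_vector(), random_vector()]
pos_emb = [random_vector() for _ in tokens]
E = input_representation(tokens, segment_ids, tok_emb, seg_emb, pos_emb)
```

Each row of `E` is one H-dimensional input vector, so a packed pair of sentences is presented to the encoder as a single sequence.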

3.1 Pre-training BERT

Unlike Peters et al. (2018a) and Radford et al. (2018), we do not use traditional left-to-right or right-to-left language models to pre-train BERT. Instead, we pre-train BERT using two unsupervised tasks, described in this section. This step is presented in the left part of Figure 1.

Task #1: Masked LM Intuitively, it is reasonable to believe that a deep bidirectional model is strictly more powerful than either a left-to-right model or the shallow concatenation of a left-to-right and a right-to-left model. Unfortunately, standard conditional language models can only be trained left-to-right or right-to-left, since bidirectional conditioning would allow each word to indirectly “see itself”, and the model could trivially predict the target word in a multi-layered context.

In order to train a deep bidirectional representation, we simply mask some percentage of the input tokens at random, and then predict those masked tokens. We refer to this procedure as a “masked LM” (MLM), although it is often referred to as a Cloze task in the literature (Taylor, 1953). In this case, the final hidden vectors corresponding to the mask tokens are fed into an output softmax over the vocabulary, as in a standard LM. In all of our experiments, we mask 15% of all WordPiece tokens in each sequence at random. In contrast to denoising auto-encoders (Vincent et al., 2008), we only predict the masked words rather than reconstructing the entire input.

Although this allows us to obtain a bidirectional pre-trained model, a downside is that we are creating a mismatch between pre-training and fine-tuning, since the [MASK] token does not appear during fine-tuning. To mitigate this, we do not always replace “masked” words with the actual [MASK] token. The training data generator chooses 15% of the token positions at random for prediction. If the i-th token is chosen, we replace the i-th token with (1) the [MASK] token 80% of the time, (2) a random token 10% of the time, or (3) the unchanged i-th token 10% of the time. Then, [math]\displaystyle{ T_i }[/math] will be used to predict the original token with cross entropy loss. We compare variations of this procedure in Appendix C.2.
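The 80%/10%/10% replacement rule can be sketched as follows. This is a simplified illustration, not the released data generator: it selects each position independently with probability 0.15 instead of choosing exactly 15% of positions, and it skips [CLS] and [SEP], which is an assumption of the sketch. The function name and toy vocabulary are hypothetical.

```python
import random


def mask_tokens(tokens, vocab, rng, select_prob=0.15):
    """Return (corrupted tokens, {position: original token}) for MLM training."""
    corrupted = list(tokens)
    targets = {}
    for i, token in enumerate(tokens):
        # Assumption: special tokens are never selected for prediction.
        if token in ("[CLS]", "[SEP]") or rng.random() >= select_prob:
            continue
        targets[i] = token                      # the model must predict the original
        roll = rng.random()
        if roll < 0.8:
            corrupted[i] = "[MASK]"             # 80%: replace with [MASK]
        elif roll < 0.9:
            corrupted[i] = rng.choice(vocab)    # 10%: replace with a random token
        # remaining 10%: keep the original token unchanged
    return corrupted, targets


rng = random.Random(0)
tokens = ["[CLS]", "my", "dog", "is", "hairy", "[SEP]"]
vocab = ["my", "dog", "is", "hairy", "apple", "run"]
corrupted, targets = mask_tokens(tokens, vocab, rng)
```

Only the positions recorded in `targets` contribute to the cross entropy loss; every other position is left intact and is not predicted.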

Task #2: Next Sentence Prediction (NSP) Many important downstream tasks such as Question Answering (QA) and Natural Language Inference (NLI) are based on understanding the relationship between two sentences, which is not directly captured by language modeling. In order to train a model that understands sentence relationships, we pre-train for a binarized next sentence prediction task that can be trivially generated from any monolingual corpus. Specifically, when choosing the sentences A and B for each pre-training example, 50% of the time B is the actual next sentence that follows A (labeled as IsNext), and 50% of the time it is a random sentence from the corpus (labeled as NotNext). As we show in Figure 1, C is used for next sentence prediction (NSP)[5]. Despite its simplicity, we demonstrate in Section 5.1 that pre-training towards this task is very beneficial to both QA and NLI [6].

The NSP task is closely related to representation-learning objectives used in Jernite et al. (2017) and Logeswaran and Lee (2018). However, in prior work, only sentence embeddings are transferred to down-stream tasks, where BERT transfers all parameters to initialize end-task model parameters.
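Generating NSP training examples from a monolingual corpus can be sketched as below. This is a minimal illustration under stated assumptions: the corpus is a list of documents, each a list of at least two sentences; there are at least two documents; and the random sentence is drawn from a different document so that it cannot accidentally be the true next sentence. The function name is hypothetical.

```python
import random


def make_nsp_example(documents, rng):
    """Sample one (sentence_a, sentence_b, label) triple from a list of documents."""
    doc_idx = rng.randrange(len(documents))
    doc = documents[doc_idx]
    i = rng.randrange(len(doc) - 1)        # leave room for a following sentence
    sentence_a = doc[i]
    if rng.random() < 0.5:
        return sentence_a, doc[i + 1], "IsNext"
    # Assumption: the negative sentence comes from a different document.
    other_idx = rng.choice([k for k in range(len(documents)) if k != doc_idx])
    return sentence_a, rng.choice(documents[other_idx]), "NotNext"


documents = [["a1", "a2", "a3"], ["b1", "b2"]]
rng = random.Random(0)
examples = [make_nsp_example(documents, rng) for _ in range(100)]
```

Because the labels come for free from sentence order, no manual annotation is needed to build this pre-training task.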

Pre-training data The pre-training procedure largely follows the existing literature on language model pre-training. For the pre-training corpus we use the BooksCorpus (800M words) (Zhu et al., 2015) and English Wikipedia (2,500M words). For Wikipedia we extract only the text passages and ignore lists, tables, and headers. It is critical to use a document-level corpus rather than a shuffled sentence-level corpus such as the Billion Word Benchmark (Chelba et al., 2013) in order to extract long contiguous sequences.

3.2 Fine-tuning BERT

Fine-tuning is straightforward since the self-attention mechanism in the Transformer allows BERT to model many downstream tasks -- whether they involve single text or text pairs -- by swapping out the appropriate inputs and outputs. For applications involving text pairs, a common pattern is to independently encode text pairs before applying bidirectional cross attention, such as Parikh et al. (2016); Seo et al. (2017). BERT instead uses the self-attention mechanism to unify these two stages, as encoding a concatenated text pair with self-attention effectively includes bidirectional cross attention between two sentences. For each task, we simply plug in the task-specific inputs and outputs into BERT and fine-tune all the parameters end-to-end. At the input, sentence A and sentence B from pre-training are analogous to (1) sentence pairs in paraphrasing, (2) hypothesis-premise pairs in entailment, (3) question-passage pairs in question answering, and (4) a degenerate text-∅ pair in text classification or sequence tagging. At the output, the token representations are fed into an output layer for token-level tasks, such as sequence tagging or question answering, and the [CLS] representation is fed into an output layer for classification, such as entailment or sentiment analysis.

Compared to pre-training, fine-tuning is relatively inexpensive. All of the results in the paper can be replicated in at most 1 hour on a single Cloud TPU, or a few hours on a GPU, starting from the exact same pre-trained model[7]. We describe the task-specific details in the corresponding subsections of Section 4. More details can be found in Appendix A.5.

4 Experiments

In this section, we present BERT fine-tuning results on 11 NLP tasks.

4.1 GLUE

The General Language Understanding Evaluation (GLUE) benchmark (Wang et al., 2018a) is a collection of diverse natural language understanding tasks. Detailed descriptions of GLUE datasets are included in Appendix B.1.

To fine-tune on GLUE, we represent the input sequence (for single sentence or sentence pairs) as described in Section 3, and use the final hidden vector [math]\displaystyle{ C \in \mathbb{R}^H }[/math] corresponding to the first input token ([CLS]) as the aggregate representation. The only new parameters introduced during fine-tuning are classification layer weights [math]\displaystyle{ W \in \mathbb{R}^{K\times H} }[/math], where [math]\displaystyle{ K }[/math] is the number of labels. We compute a standard classification loss with [math]\displaystyle{ C }[/math] and [math]\displaystyle{ W }[/math], i.e., [math]\displaystyle{ \log(softmax(CW^T)) }[/math].
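As a small worked example of this classification layer, the sketch below computes the logits CW^T and takes the loss to be the negative log-softmax at the gold label, which is the usual reading of a "standard classification loss". The toy values of C and W are made up for illustration.

```python
import math


def classification_loss(C, W, label):
    """Negative log-softmax of the logits C W^T, evaluated at the gold label."""
    logits = [sum(w * c for w, c in zip(row, C)) for row in W]   # one logit per label
    top = max(logits)                                            # for numerical stability
    log_norm = top + math.log(sum(math.exp(z - top) for z in logits))
    return log_norm - logits[label]


C = [0.5, -1.0, 2.0]        # toy [CLS] vector, H = 3
W = [[1.0, 0.0, 0.0],       # toy weights, K = 2 labels
     [0.0, 0.0, 1.0]]
loss = classification_loss(C, W, label=1)
print(f"loss = {loss:.4f}")
```

Here the logits are 0.5 and 2.0, so the gold label already has the higher score and the loss is small; W is the only parameter matrix that is not initialized from pre-training.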

We use a batch size of 32 and fine-tune for 3 epochs over the data for all GLUE tasks. For each task, we selected the best fine-tuning learning rate (among 5e-5, 4e-5, 3e-5, and 2e-5) on the Dev set. Additionally, for BERTLARGE we found that fine-tuning was sometimes unstable on small datasets, so we ran several random restarts and selected the best model on the Dev set. With random restarts, we use the same pre-trained checkpoint but perform different fine-tuning data shuffling and classifier layer initialization [8].

Results are presented in Table 1. Both BERTBASE and BERTLARGE outperform all systems on all tasks by a substantial margin, obtaining 4.5% and 7.0% respective average accuracy improvement over the prior state of the art. Note that BERTBASE and OpenAI GPT are nearly identical in terms of model architecture apart from the attention masking. For the largest and most widely reported GLUE task, MNLI, BERT obtains a 4.6% absolute accuracy improvement. On the official GLUE leaderboard [9], BERTLARGE obtains a score of 80.5, compared to OpenAI GPT, which obtains 72.8 as of the date of writing.

We find that BERTLARGE significantly outperforms BERTBASE across all tasks, especially those with very little training data. The effect of model size is explored more thoroughly in Section 5.2.

| System | MNLI-(m/mm) 392k | QQP 363k | QNLI 108k | SST-2 67k | CoLA 8.5k | STS-B 5.7k | MRPC 3.5k | RTE 2.5k | Average − |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Pre-OpenAI SOTA | 80.6/80.1 | 66.1 | 82.3 | 93.2 | 35.0 | 81.0 | 86.0 | 61.7 | 74.0 |
| BiLSTM+ELMo+Attn | 76.4/76.1 | 64.8 | 79.8 | 90.4 | 36.0 | 73.3 | 84.9 | 56.8 | 71.0 |
| OpenAI GPT | 82.1/81.4 | 70.3 | 87.4 | 91.3 | 45.4 | 80.0 | 82.3 | 56.0 | 75.1 |
| BERTBASE | 84.6/83.4 | 71.2 | 90.5 | 93.5 | 52.1 | 85.8 | 88.9 | 66.4 | 79.6 |
| BERTLARGE | 86.7/85.9 | 72.1 | 92.7 | 94.9 | 60.5 | 86.5 | 89.3 | 70.1 | 82.1 |

4.2 SQuAD v1.1

The Stanford Question Answering Dataset (SQuAD v1.1) is a collection of 100k crowdsourced question/answer pairs (Rajpurkar et al., 2016). Given a question and a passage from Wikipedia containing the answer, the task is to predict the answer text span in the passage.

As shown in Figure 1, in the question-answering task, we represent the input question and passage as a single packed sequence, with the question using the A embedding and the passage using the B embedding. We only introduce a start vector [math]\displaystyle{ S \in \mathbb{R}^H }[/math] and an end vector [math]\displaystyle{ E \in \mathbb{R}^H }[/math] during fine-tuning. The probability of word [math]\displaystyle{ i }[/math] being the start of the answer span is computed as a dot product between [math]\displaystyle{ T_i }[/math] and [math]\displaystyle{ S }[/math] followed by a softmax over all of the words in the paragraph: [math]\displaystyle{ P_i = \dfrac{e^{S\cdot T_i}}{\sum_j e^{S\cdot T_j}} }[/math]. The analogous formula is used for the end of the answer span. The score of a candidate span from position [math]\displaystyle{ i }[/math] to position [math]\displaystyle{ j }[/math] is defined as [math]\displaystyle{ S\cdot T_i + E \cdot T_j }[/math], and the maximum scoring span where [math]\displaystyle{ j \geq i }[/math] is used as a prediction. The training objective is the sum of the log-likelihoods of the correct start and end positions. We fine-tune for 3 epochs with a learning rate of 5e-5 and a batch size of 32.
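The start-probability formula and the span search can be illustrated on toy vectors. This is a brute-force sketch with hypothetical helper names; the values of T, S and E are made up, and a practical system would typically also cap the span length.

```python
import math


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def softmax(scores):
    top = max(scores)                               # for numerical stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def best_span(T, S, E):
    """Return (i, j, score) maximizing S.T_i + E.T_j subject to j >= i."""
    start = [dot(S, t) for t in T]
    end = [dot(E, t) for t in T]
    best = None
    for i in range(len(T)):
        for j in range(i, len(T)):                  # enforce j >= i
            score = start[i] + end[j]
            if best is None or score > best[2]:
                best = (i, j, score)
    return best


T = [[1.0, 0.0], [0.0, 2.0], [0.5, 1.0]]   # toy final hidden vectors, H = 2
S = [2.0, 0.0]                             # toy start vector
E = [0.0, 3.0]                             # toy end vector
P_start = softmax([dot(S, t) for t in T])  # P_i from the formula above
i, j, score = best_span(T, S, E)
```

In this toy case the start scores are 2, 0 and 1 and the end scores are 0, 6 and 3, so the best span runs from position 0 to position 1 with score 8.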

Table 2 shows top leaderboard entries as well as results from top published systems (Seo et al., 2017; Clark and Gardner, 2018; Peters et al., 2018a; Hu et al., 2018). The top results from the SQuAD leaderboard do not have up-to-date public system descriptions available [11], and are allowed to use any public data when training their systems. We therefore use modest data augmentation in our system by first fine-tuning on TriviaQA (Joshi et al., 2017) before fine-tuning on SQuAD.

Our best performing system outperforms the top leaderboard system by +1.5 F1 in ensembling and +1.3 F1 as a single system. In fact, our single BERT model outperforms the top ensemble system in terms of F1 score. Without TriviaQA fine-tuning data, we only lose 0.1-0.4 F1, still outperforming all existing systems by a wide margin[12].

| System | Dev EM | Dev F1 | Test EM | Test F1 |
| --- | --- | --- | --- | --- |
| Top Leaderboard Systems (Dec 10th, 2018) | | | | |
| Human | ─ | ─ | 82.3 | 91.2 |
| #1 Ensemble-nlnet | ─ | ─ | 86.0 | 91.7 |
| #2 Ensemble-QANet | ─ | ─ | 84.5 | 90.5 |
| Published | | | | |
| BiDAF+ELMo (Single) | ─ | 85.6 | ─ | 85.8 |
| R.M. Reader (Ensemble) | 81.2 | 87.9 | 82.3 | 88.5 |
| Ours | | | | |
| BERTBASE (Single) | 80.8 | 88.5 | ─ | ─ |
| BERTLARGE (Single) | 84.1 | 90.9 | ─ | ─ |
| BERTLARGE (Ensemble) | 85.8 | 91.8 | ─ | ─ |
| BERTLARGE (Sgl.+TriviaQA) | 84.2 | 91.1 | 85.1 | 91.8 |
| BERTLARGE (Ens.+TriviaQA) | 86.2 | 92.2 | 87.4 | 93.2 |

4.3 SQuAD v2.0

The SQuAD 2.0 task extends the SQuAD 1.1 problem definition by allowing for the possibility that no short answer exists in the provided paragraph, making the problem more realistic.

We use a simple approach to extend the SQuAD v1.1 BERT model for this task. We treat questions that do not have an answer as having an answer span with start and end at the [CLS] token. The probability space for the start and end answer span positions is extended to include the position of the [CLS] token. For prediction, we compare the score of the no-answer span: [math]\displaystyle{ s_{null} = S \cdot C + E \cdot C }[/math] to the score of the best non-null span [math]\displaystyle{ \hat{s}_{i,j} = \underset{j\geq i}{\max} \; S \cdot T_i + E \cdot T_j }[/math]. We predict a non-null answer when [math]\displaystyle{ \hat{s}_{i,j} > s_{null} + \tau }[/math], where the threshold [math]\displaystyle{ \tau }[/math] is selected on the dev set to maximize F1. We did not use TriviaQA data for this model. We fine-tuned for 2 epochs with a learning rate of 5e-5 and a batch size of 48.
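The no-answer decision can be sketched as a comparison between the null score and the best span score. The function name and toy values are hypothetical; in practice the threshold would be tuned on the dev set as described above.

```python
def predict_answer(T, C, S, E, tau):
    """Return (i, j) for the best non-null span, or None for 'no answer'."""
    def dot(u, v):
        return sum(a * b for a, b in zip(u, v))

    s_null = dot(S, C) + dot(E, C)                  # score of the [CLS] "span"
    best_score, best_span = max(
        (dot(S, T[i]) + dot(E, T[j]), (i, j))
        for i in range(len(T))
        for j in range(i, len(T))                   # enforce j >= i
    )
    # Predict a real span only if it beats the null score by more than tau.
    return best_span if best_score > s_null + tau else None


T = [[1.0, 0.0], [0.0, 2.0], [0.5, 1.0]]   # toy token vectors, H = 2
C = [1.0, 1.0]                             # toy [CLS] vector
S = [2.0, 0.0]
E = [0.0, 3.0]
lenient = predict_answer(T, C, S, E, tau=0.0)
strict = predict_answer(T, C, S, E, tau=4.0)
```

With these values the best span scores 8 and the null span scores 5, so a threshold of 0 yields the span (0, 1) while a threshold of 4 yields no answer.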

The results compared to prior leaderboard entries and top published work (Sun et al., 2018; Wang et al., 2018b) are shown in Table 3, excluding systems that use BERT as one of their components. We observe a +5.1 F1 improvement over the previous best system.

| System | Dev EM | Dev F1 | Test EM | Test F1 |
| --- | --- | --- | --- | --- |
| Top Leaderboard Systems (Dec 10th, 2018) | | | | |
| Human | 86.3 | 89.0 | 86.9 | 89.5 |
| #1 Single-MIR-MRC (F-Net) | ─ | ─ | 74.8 | 78.0 |
| #2 Single-nlnet | ─ | ─ | 74.2 | 77.1 |
| Published | | | | |
| unet (Ensemble) | ─ | ─ | 71.4 | 74.9 |
| SLQA+ (Single) | ─ | ─ | 71.4 | 74.4 |
| Ours | | | | |
| BERTLARGE (Single) | 78.7 | 81.9 | 80.0 | 83.1 |

4.4 SWAG

The Situations With Adversarial Generations (SWAG) dataset contains 113k sentence-pair completion examples that evaluate grounded common-sense inference (Zellers et al., 2018). Given a sentence, the task is to choose the most plausible continuation among four choices.

When fine-tuning on the SWAG dataset, we construct four input sequences, each containing the concatenation of the given sentence (sentence A) and a possible continuation (sentence B). The only task-specific parameter introduced is a vector whose dot product with the [CLS] token representation [math]\displaystyle{ C }[/math] denotes a score for each choice, which is normalized with a softmax layer.
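The multiple-choice scoring can be sketched as a softmax over one dot product per candidate. The four [CLS] vectors and the task vector below are made-up toy values, and the function name is hypothetical.

```python
import math


def choice_probabilities(cls_vectors, v):
    """Softmax over the scores v . C_k, one per candidate continuation."""
    scores = [sum(a * b for a, b in zip(v, c)) for c in cls_vectors]
    top = max(scores)                               # for numerical stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# One toy [CLS] vector per (sentence A, continuation) input sequence, H = 2.
cls_vectors = [[0.1, 0.2], [1.0, 0.5], [-0.3, 0.0], [0.4, 0.4]]
v = [1.0, 2.0]                                      # the single task-specific vector
probs = choice_probabilities(cls_vectors, v)
predicted = max(range(len(probs)), key=probs.__getitem__)
```

The same vector scores every candidate, so the four sequences are encoded independently and compared only through the final softmax.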

We fine-tune the model for 3 epochs with a learning rate of 2e-5 and a batch size of 16. Results are presented in Table 4. BERTLARGE outperforms the authors’ baseline ESIM+ELMo system by +27.1% and OpenAI GPT by 8.3%.

| System | Dev | Test |
| --- | --- | --- |
| ESIM+GloVe | 51.9 | 52.7 |
| ESIM+ELMo | 59.1 | 59.2 |
| OpenAI GPT | ─ | 78.0 |
| BERTBASE | 81.6 | ─ |
| BERTLARGE | 86.6 | 86.3 |
| Human (expert)☨ | ─ | 85.0 |
| Human (5 annotations)☨ | ─ | 88.0 |

5 Ablation Studies

In this section, we perform ablation experiments over a number of facets of BERT in order to better understand their relative importance. Additional ablation studies can be found in Appendix C.

5.1 Effect of Pre-training Tasks

We demonstrate the importance of the deep bidirectionality of BERT by evaluating two pre-training objectives using exactly the same pre-training data, fine-tuning scheme, and hyperparameters as BERTBASE:

No NSP: A bidirectional model which is trained using the “masked LM” (MLM) but without the “next sentence prediction” (NSP) task.

LTR & No NSP: A left-context-only model which is trained using a standard Left-to-Right (LTR) LM, rather than an MLM. The left-only constraint was also applied at fine-tuning, because removing it introduced a pre-train/fine-tune mismatch that degraded downstream performance. Additionally, this model was pre-trained without the NSP task. This is directly comparable to OpenAI GPT, but using our larger training dataset, our input representation, and our fine-tuning scheme.

We first examine the impact brought by the NSP task. In Table 5, we show that removing NSP hurts performance significantly on QNLI, MNLI, and SQuAD 1.1. Next, we evaluate the impact of training bidirectional representations by comparing “No NSP” to “LTR & No NSP”. The LTR model performs worse than the MLM model on all tasks, with large drops on MRPC and SQuAD.

For SQuAD it is intuitively clear that a LTR model will perform poorly at token predictions, since the token-level hidden states have no right-side context. In order to make a good faith attempt at strengthening the LTR system, we added a randomly initialized BiLSTM on top. This does significantly improve results on SQuAD, but the results are still far worse than those of the pre-trained bidirectional models. The BiLSTM hurts performance on the GLUE tasks.

We recognize that it would also be possible to train separate LTR and RTL models and represent each token as the concatenation of the two models, as ELMo does. However: (a) this is twice as expensive as a single bidirectional model; (b) this is non-intuitive for tasks like QA, since the RTL model would not be able to condition the answer on the question; (c) this is strictly less powerful than a deep bidirectional model, since the latter can use both left and right context at every layer.

| Tasks (Dev Set) | MNLI-m (Acc) | QNLI (Acc) | MRPC (Acc) | SST-2 (Acc) | SQuAD (F1) |
| --- | --- | --- | --- | --- | --- |
| BERTBASE | 84.4 | 88.4 | 86.7 | 92.7 | 88.5 |
| No NSP | 83.9 | 84.9 | 86.5 | 92.6 | 87.9 |
| LTR & No NSP | 82.1 | 84.3 | 77.5 | 92.1 | 77.8 |
| +BiLSTM | 82.1 | 84.1 | 75.7 | 91.6 | 84.9 |

5.2 Effect of Model Size

In this section, we explore the effect of model size on fine-tuning task accuracy. We trained a number of BERT models with a differing number of layers, hidden units, and attention heads, while otherwise using the same hyperparameters and training procedure as described previously.

Results on selected GLUE tasks are shown in Table 6. In this table, we report the average Dev Set accuracy from 5 random restarts of fine-tuning. We can see that larger models lead to a strict accuracy improvement across all four datasets, even for MRPC which only has 3,600 labeled training examples, and is substantially different from the pre-training tasks. It is also perhaps surprising that we are able to achieve such significant improvements on top of models which are already quite large relative to the existing literature. For example, the largest Transformer explored in Vaswani et al. (2017) is (L=6, H=1024, A=16) with 100M parameters for the encoder, and the largest Transformer we have found in the literature is (L=64, H=512, A=2) with 235M parameters (Al-Rfou et al., 2018). By contrast, BERTBASE contains 110M parameters and BERTLARGE contains 340M parameters.

It has long been known that increasing the model size will lead to continual improvements on large-scale tasks such as machine translation and language modeling, which is demonstrated by the LM perplexity of held-out training data shown in Table 6. However, we believe that this is the first work to demonstrate convincingly that scaling to extreme model sizes also leads to large improvements on very small scale tasks, provided that the model has been sufficiently pre-trained. Peters et al. (2018b) presented mixed results on the downstream task impact of increasing the pre-trained bi-LM size from two to four layers, and Melamud et al. (2016) mentioned in passing that increasing hidden dimension size from 200 to 600 helped, but increasing further to 1,000 did not bring further improvements. Both of these prior works used a feature-based approach. We hypothesize that when the model is fine-tuned directly on the downstream tasks and uses only a very small number of randomly initialized additional parameters, the task-specific models can benefit from the larger, more expressive pre-trained representations even when downstream task data is very small.

| Hyperparams | | | | Dev Set Accuracy | | |
|---|---|---|---|---|---|---|
| #L | #H | #A | LM (ppl) | MNLI-m | MRPC | SST-2 |
| 3 | 768 | 12 | 5.84 | 77.9 | 79.8 | 88.4 |
| 6 | 768 | 3 | 5.24 | 80.6 | 82.2 | 90.7 |
| 6 | 768 | 12 | 4.68 | 81.9 | 84.8 | 91.3 |
| 12 | 768 | 12 | 3.99 | 84.4 | 86.7 | 92.9 |
| 12 | 1024 | 16 | 3.54 | 85.7 | 86.9 | 93.3 |
| 24 | 1024 | 16 | 3.23 | 86.6 | 87.8 | 93.7 |

5.3 Feature-based Approach with BERT

All of the BERT results presented so far have used the fine-tuning approach, where a simple classification layer is added to the pre-trained model, and all parameters are jointly fine-tuned on a downstream task. However, the feature-based approach, where fixed features are extracted from the pre-trained model, has certain advantages. First, not all tasks can be easily represented by a Transformer encoder architecture, and therefore require a task-specific model architecture to be added. Second, there are major computational benefits to pre-computing an expensive representation of the training data once and then running many experiments with cheaper models on top of this representation.

In this section, we compare the two approaches by applying BERT to the CoNLL-2003 Named Entity Recognition (NER) task (Tjong Kim Sang and De Meulder, 2003). In the input to BERT, we use a case-preserving WordPiece model, and we include the maximal document context provided by the data. Following standard practice, we formulate this as a tagging task but do not use a CRF layer in the output. We use the representation of the first sub-token as the input to the token-level classifier over the NER label set.
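Selecting the first sub-token of each word can be sketched as follows. This is an illustrative example, not the authors' code: the helper name `first_subtoken_indices` is hypothetical, the sample sentence and labels are made up for the illustration, and the sketch assumes the common WordPiece convention that continuation pieces carry a `##` prefix. Special tokens such as [CLS] and [SEP] are omitted for brevity.

```python
def first_subtoken_indices(wordpieces):
    """Return the index of the first piece of every word.

    Assumes continuation pieces start with '##' (WordPiece convention).
    """
    return [i for i, piece in enumerate(wordpieces) if not piece.startswith("##")]


# Illustrative tokenization: "Henson" is split into Hen + ##son, "puppeteer" into puppet + ##eer.
pieces = ["Jim", "Hen", "##son", "was", "a", "puppet", "##eer"]
word_labels = ["I-PER", "I-PER", "O", "O", "O"]  # one NER label per original word

indices = first_subtoken_indices(pieces)
for idx, label in zip(indices, word_labels):
    # Only the hidden state at position idx would be fed to the token-level classifier.
    print(idx, pieces[idx], label)
```

Continuation pieces receive no prediction of their own, so the number of classifier inputs equals the number of words rather than the number of WordPiece tokens.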

To ablate the fine-tuning approach, we apply the feature-based approach by extracting the activations from one or more layers without fine-tuning any parameters of BERT. These contextual embeddings are used as input to a randomly initialized two-layer 768-dimensional BiLSTM before the classification layer.

Results are presented in Table 7. BERTLARGE performs competitively with state-of-the-art methods. The best performing method concatenates the token representations from the top four hidden layers of the pre-trained Transformer, which is only 0.3 F1 behind fine-tuning the entire model. This demonstrates that BERT is effective for both fine-tuning and feature-based approaches.
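Two of the layer-combination strategies compared in Table 7 can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the paper's implementation: `layers` is a hypothetical list of per-layer activations (one vector per token), and the softmax normalization of the layer weights is an assumption, since the text does not specify how the weighted sum is parameterized.

```python
import math


def concat_last_four(layers, token):
    """Concatenate one token's vectors from the top four layers."""
    features = []
    for layer in layers[-4:]:
        features.extend(layer[token])
    return features


def weighted_sum(layers, token, scores):
    """Softmax-normalize one score per layer, then sum the token's vectors."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    dim = len(layers[0][token])
    return [sum(w * layer[token][d] for w, layer in zip(weights, layers))
            for d in range(dim)]


# Toy activations: 12 layers, 3 tokens, 2 dimensions per token.
layers = [[[float(l), float(l) + 0.5] for _ in range(3)] for l in range(12)]

print(len(concat_last_four(layers, 0)))                      # 8 (4 layers x 2 dims)
print(weighted_sum(layers[-4:], 0, [0.0, 0.0, 0.0, 0.0]))    # equal weights: [9.5, 10.0]
```

With H = 768, concatenating four layers yields a 3072-dimensional feature per token, while the weighted sum keeps the dimension at 768.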

| System | Dev F1 | Test F1 |
|---|---|---|
| ELMo (Peters et al., 2018a) | 95.7 | 92.2 |
| CVT (Clark et al., 2018) | - | 92.6 |
| CSE (Akbik et al., 2018) | - | 93.1 |
| Fine-tuning approach | | |
| BERTLARGE | 96.6 | 92.8 |
| BERTBASE | 96.4 | 92.4 |
| Feature-based approach (BERTBASE) | | |
| Embeddings | 91.0 | - |
| Second-to-Last Hidden | 95.6 | - |
| Last Hidden | 94.9 | - |
| Weighted Sum Last Four Hidden | 95.9 | - |
| Concat Last Four Hidden | 96.1 | - |
| Weighted Sum All 12 Layers | 95.5 | - |

6 Conclusion

Recent empirical improvements due to transfer learning with language models have demonstrated that rich, unsupervised pre-training is an integral part of many language understanding systems. In particular, these results enable even low-resource tasks to benefit from deep unidirectional architectures. Our major contribution is further generalizing these findings to deep bidirectional architectures, allowing the same pre-trained model to successfully tackle a broad set of NLP tasks.

Appendices

We organize the appendix into three sections:

- Additional implementation details for BERT are presented in Appendix A;

- Additional details for our experiments are presented in Appendix B; and

- Additional ablation studies are presented in Appendix C.

We present additional ablation studies for BERT including:

- Effect of Number of Training Steps; and

- Ablation for Different Masking Procedures.

A Additional Details for BERT

A.1 Illustration of the Pre-training Tasks

…

A.4 Comparison of BERT, ELMo, and OpenAI GPT

Here we study the differences in recent popular representation learning models including ELMo, OpenAI GPT and BERT. The comparisons between the model architectures are shown visually in Figure 3. Note that in addition to the architecture differences, BERT and OpenAI GPT are fine-tuning approaches, while ELMo is a feature-based approach.

…

…

Footnotes

- ↑ https://github.com/tensorflow/tensor2tensor

- ↑ http://nlp.seas.harvard.edu/2018/04/03/attention.html

- ↑ In all cases we set the feed-forward/filter size to be 4H, i.e., 3072 for H = 768 and 4096 for H = 1024.

- ↑ We note that in the literature the bidirectional Transformer is often referred to as a “Transformer encoder” while the left-context-only version is referred to as a “Transformer decoder” since it can be used for text generation.

- ↑ The final model achieves 97%-98% accuracy on NSP.

- ↑ The vector C is not a meaningful sentence representation without fine-tuning, since it was trained with NSP.

- ↑ For example, the BERT SQuAD model can be trained in around 30 minutes on a single Cloud TPU to achieve a Dev F1 score of 91.0%.

- ↑ The GLUE data set distribution does not include the Test labels, and we only made a single GLUE evaluation server submission for each of BERTBASE and BERTLARGE.

- ↑ https://gluebenchmark.com/leaderboard

- ↑ See (10) in https://gluebenchmark.com/faq.

- ↑ QANet is described in Yu et al. (2018), but the system has improved substantially after publication.

- ↑ The TriviaQA data we used consists of paragraphs from TriviaQA-Wiki formed of the first 400 tokens in documents, that contain at least one of the provided possible answers.

References

- (Al-Rfou et al., 2018) ⇒ Rami Al-Rfou, Dokook Choe, Noah Constant, Mandy Guo, and Llion Jones. 2018. Character-level language modeling with deeper self-attention. arXiv preprint arXiv:1808.04444.

- Rie Kubota Ando and Tong Zhang. 2005. A framework for learning predictive structures from multiple tasks and unlabeled data. Journal of Machine Learning Research, 6(Nov):1817–1853.

- Luisa Bentivogli, Bernardo Magnini, Ido Dagan, Hoa Trang Dang, and Danilo Giampiccolo. 2009. The fifth PASCAL recognizing textual entailment challenge. In TAC. NIST.

- John Blitzer, Ryan McDonald, and Fernando Pereira. 2006. Domain adaptation with structural correspondence learning. In: Proceedings of the 2006 conference on empirical methods in natural language processing, pages 120–128. Association for Computational Linguistics.

- Samuel R. Bowman, Gabor Angeli, Christopher Potts, and Christopher D. Manning. 2015. A large annotated corpus for learning natural language inference. In EMNLP. Association for Computational Linguistics.

- Peter F Brown, Peter V Desouza, Robert L. Mercer, Vincent J Della Pietra, and Jenifer C Lai. 1992. Class-based n-gram models of natural language. Computational linguistics, 18(4):467–479.

- Daniel Cer, Mona Diab, Eneko Agirre, Inigo Lopez-Gazpio, and Lucia Specia. 2017. Semeval-2017 task 1: Semantic textual similarity-multilingual and cross-lingual focused evaluation. arXiv preprint arXiv:1708.00055.

- Ciprian Chelba, Tomas Mikolov, Mike Schuster, Qi Ge, Thorsten Brants, Phillipp Koehn, and Tony Robinson. 2013. One billion word benchmark for measuring progress in statistical language modeling. arXiv preprint arXiv:1312.3005.

- Z. Chen, H. Zhang, X. Zhang, and L. Zhao. 2018. Quora question pairs.

- Kevin Clark, Minh-Thang Luong, Christopher D Manning, and Quoc V Le. 2018. Semi-supervised sequence modeling with cross-view training. arXiv preprint arXiv:1809.08370.

- Ronan Collobert and Jason Weston. 2008. A unified architecture for natural language processing: Deep neural networks with multitask learning. In: Proceedings of the 25th International Conference on Machine Learning, ICML ’08.

- Alexis Conneau, Douwe Kiela, Holger Schwenk, Loïc Barrault, and Antoine Bordes. 2017. Supervised learning of universal sentence representations from natural language inference data. In: Proceedings of the 2017 Conference on Empirical Methods in Natural Language Processing, pages 670–680, Copenhagen, Denmark. Association for Computational Linguistics.

- Andrew M. Dai and Quoc V Le. 2015. Semi-supervised sequence learning. In Advances in Neural Information Processing Systems, pages 3079–3087.

- J. Deng, W. Dong, R. Socher, L.-J. Li, K. Li, and L. Fei-Fei. 2009. ImageNet: A Large-Scale Hierarchical Image Database. In CVPR09.

- William B Dolan and Chris Brockett. 2005. Automatically constructing a corpus of sentential paraphrases. In: Proceedings of the Third International Workshop on Paraphrasing (IWP2005).

- Dan Hendrycks and Kevin Gimpel. 2016. Bridging nonlinearities and stochastic regularizers with gaussian error linear units. CoRR, abs/1606.08415.

- Jeremy Howard and Sebastian Ruder. 2018. Universal language model fine-tuning for text classification. In ACL. Association for Computational Linguistics.

- Mandar Joshi, Eunsol Choi, Daniel S Weld, and Luke Zettlemoyer. 2017. Triviaqa: A large scale distantly supervised challenge dataset for reading comprehension. In ACL.

- Ryan Kiros, Yukun Zhu, Ruslan R Salakhutdinov, Richard Zemel, Raquel Urtasun, Antonio Torralba, and Sanja Fidler. 2015. Skip-thought vectors. In Advances in Neural Information Processing Systems, pages 3294–3302.

- Quoc Le and Tomas Mikolov. 2014. Distributed representations of sentences and documents. In: Proceedings of The International Conference on Machine Learning, pages 1188–1196.

- Hector J Levesque, Ernest Davis, and Leora Morgenstern. 2011. The winograd schema challenge. In Aaai spring symposium: Logical formalizations of commonsense reasoning, volume 46, page 47.

- Lajanugen Logeswaran and Honglak Lee. 2018. An efficient framework for learning sentence representations. In: Proceedings of The International Conference on Learning Representations.

- Bryan McCann, James Bradbury, Caiming Xiong, and Richard Socher. 2017. Learned in translation: Contextualized word vectors. In NIPS.

- Tomas Mikolov, Ilya Sutskever, Kai Chen, Greg S Corrado, and Jeff Dean. 2013. Distributed representations of words and phrases and their compositionality. In Advances in Neural Information Processing Systems 26, pages 3111–3119. Curran Associates, Inc.

- Jeffrey Pennington, Richard Socher, and Christopher D. Manning. 2014. Glove: Global vectors for word representation. In Empirical Methods in Natural Language Processing (EMNLP), pages 1532–1543.

- Matthew Peters, Waleed Ammar, Chandra Bhagavatula, and Russell Power. 2017. Semi-supervised sequence tagging with bidirectional language models. In ACL.

- Matthew Peters, Mark Neumann, Mohit Iyyer, Matt Gardner, Christopher Clark, Kenton Lee, and Luke Zettlemoyer. 2018. Deep contextualized word representations. In NAACL.

- Alec Radford, Karthik Narasimhan, Tim Salimans, and Ilya Sutskever. 2018. Improving language understanding with unsupervised learning. Technical report, OpenAI.

- Pranav Rajpurkar, Jian Zhang, Konstantin Lopyrev, and Percy Liang. 2016. Squad: 100,000+ questions for machine comprehension of text. arXiv preprint arXiv:1606.05250.

- Richard Socher, Alex Perelygin, Jean Wu, Jason Chuang, Christopher D Manning, Andrew Ng, and Christopher Potts. 2013. Recursive deep models for semantic compositionality over a sentiment treebank. In: Proceedings of the 2013 conference on empirical methods in natural language processing, pages 1631–1642.

- Wilson L Taylor. 1953. Cloze procedure: A new tool for measuring readability. Journalism Bulletin, 30(4):415–433.

- Erik F Tjong Kim Sang and Fien De Meulder. 2003. Introduction to the conll-2003 shared task: Language-independent named entity recognition. In: Proceedings of the seventh conference on Natural language learning at HLT-NAACL 2003-Volume 4, pages 142–147. Association for Computational Linguistics.

- Joseph Turian, Lev Ratinov, and Yoshua Bengio. 2010. Word representations: A simple and general method for semi-supervised learning. In: Proceedings of the 48th Annual Meeting of the Association for Computational Linguistics, ACL ’10, pages 384–394.

- Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N Gomez, Lukasz Kaiser, and Illia Polosukhin. 2017. Attention is all you need. In Advances in Neural Information Processing Systems, pages 6000–6010.

- Pascal Vincent, Hugo Larochelle, Yoshua Bengio, and Pierre-Antoine Manzagol. 2008. Extracting and composing robust features with denoising autoencoders. In: Proceedings of the 25th International Conference on Machine learning, pages 1096–1103. ACM.

- Alex Wang, Amanpreet Singh, Julian Michael, Felix Hill, Omer Levy, and Samuel R Bowman. 2018. Glue: A multi-task benchmark and analysis platform for natural language understanding. arXiv preprint arXiv:1804.07461.

- A. Warstadt, A. Singh, and S. R. Bowman. 2018. Corpus of linguistic acceptability.

- Adina Williams, Nikita Nangia, and Samuel R Bowman. 2018. A broad-coverage challenge corpus for sentence understanding through inference. In NAACL.

- Yonghui Wu, Mike Schuster, Zhifeng Chen, Quoc V Le, Mohammad Norouzi, Wolfgang Macherey, Maxim Krikun, Yuan Cao, Qin Gao, Klaus Macherey, et al. 2016. Google’s neural machine translation system: Bridging the gap between human and machine translation. arXiv preprint arXiv:1609.08144.

- Jason Yosinski, Jeff Clune, Yoshua Bengio, and Hod Lipson. 2014. How transferable are features in deep neural networks? In Advances in Neural Information Processing Systems, pages 3320–3328.

- Rowan Zellers, Yonatan Bisk, Roy Schwartz, and Yejin Choi. 2018. Swag: A large-scale adversarial dataset for grounded commonsense inference. In: Proceedings of the 2018 Conference on Empirical Methods in Natural Language Processing (EMNLP).

- Yukun Zhu, Ryan Kiros, Rich Zemel, Ruslan Salakhutdinov, Raquel Urtasun, Antonio Torralba, and Sanja Fidler. 2015. Aligning books and movies: Towards story-like visual explanations by watching movies and reading books. In: Proceedings of the IEEE International Conference on computer vision, pages 19–27.

| | Author | volume | Date Value | title | type | journal | titleUrl | doi | note | year |
|---|---|---|---|---|---|---|---|---|---|---|
| 2019 BERTPreTrainingofDeepBidirectio | Ming-Wei Chang, Kristina Toutanova, Jacob Devlin, Kenton Lee | | | BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding | | | | | | 2019 |