2019 LanguageModelsAreUnsupervisedMu

- (Radford et al., 2019) ⇒ Alec Radford, Jeffrey Wu, Rewon Child, David Luan, Dario Amodei, and Ilya Sutskever. (2019). “Language Models are Unsupervised Multitask Learners.” In: OpenAI Blog Journal, 1(8).

Subject Headings: GPT-2, WebText Dataset, GPT-2 Web Scraper, Byte Pair Encoding (BPE), Byte-level Language Model, Multi-Task Learning, In-Context Learning, Emergent LLM Property.

Notes

- It introduces the GPT-2 Transformer Model, a significant advancement in the field of Natural Language Processing (NLP).

- It explores a new approach to training models for a variety of NLP tasks, such as Translation, Inference, Classification, and Question-Answering, across diverse domains like news and encyclopedia text.

- It advocates for a combination of Transfer Learning (pre-training plus supervised fine-tuning) and Zero-Shot Learning using language models exclusively.

- It reports that this approach enabled GPT-2 to achieve commendable results in several NLP tasks and datasets in a zero-shot setting, including areas like Inference, Reading Comprehension, Common-Sense Reasoning, Question-Answering, Summarization, and Translation.

- It details the use of Byte Pair Encoding (BPE) for tokenizing the corpus, which interpolates between word-level inputs for frequent symbol sequences and character-level inputs for infrequent ones, and which operates on bytes rather than Unicode code points so that the base vocabulary needs only 256 symbols.

- It describes modifications to the Transformer Decoder Architecture used in GPT-1, such as moving Layer Normalization to the input of each sub-block and adding an extra layer norm after the final self-attention block.

- It demonstrates significant improvements on small datasets like Penn Treebank and WikiText-2 and achieves state-of-the-art results on tasks such as the Children’s Book Test (CBT) and LAMBADA, while acknowledging challenges with larger datasets like the One Billion Word Benchmark.

- It notes that in summarization tasks, GPT-2's performance was modest, marginally outperforming a method that selected three random sentences from an article.

- It discusses ethical concerns regarding the release of a highly capable model like GPT-2, especially in light of its potential for misuse in generating realistic but potentially misleading text.

- It emphasizes the potential of unsupervised learning in language models, showcasing their ability to start learning various tasks without explicit supervision.

- Its code repository is at https://github.com/openai/gpt-2


Cited By

- Google Scholar ~ 459 Citations (Retrieved: 2019-10-27).

- Semantic Scholar ~ 1,053 Citations (Retrieved: 2019-10-27).

2020

- (Diao et al., 2020) ⇒ Shizhe Diao, Jiaxin Bai, Yan Song, Tong Zhang, and Yonggang Wang. (2020). “ZEN: Pre-training Chinese Text Encoder Enhanced by N-gram Representations.” In: Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing: Findings.

- QUOTE: Pre-trained text encoders (Peters et al., 2018b; Devlin et al., 2018; Radford et al., 2018, 2019; Yang et al., 2019) have drawn much attention in natural language processing (NLP), because state-of-the-art performance can be obtained for many NLP tasks using such encoders. In general, these encoders are implemented by training a deep neural model on large unlabeled corpora.

2020

- (Brown et al., 2020) ⇒ Tom B. Brown, Benjamin Mann, Nick Ryder, Melanie Subbiah, Jared Kaplan, Prafulla Dhariwal, Arvind Neelakantan, Pranav Shyam, Girish Sastry, Amanda Askell, Sandhini Agarwal, Ariel Herbert-Voss, Gretchen Krueger, Tom Henighan, Rewon Child, Aditya Ramesh, Daniel M. Ziegler, Jeffrey Wu, Clemens Winter, Christopher Hesse, Mark Chen, Eric Sigler, Mateusz Litwin, Scott Gray, Benjamin Chess, Jack Clark, Christopher Berner, Sam McCandlish, Alec Radford, Ilya Sutskever, and Dario Amodei. (2020). “Language Models Are Few-Shot Learners.” In: Advances in Neural Information Processing Systems 33 (NeurIPS 2020).

- QUOTE: ... There are many approaches to building multi-task models. Giving task instructions in natural language was first formalized in a supervised setting with [ MKXS18 ] and used in [ RWC+19 ] for in-context learning and in [ RSR+19 ] for multi-task fine-tuning. ...

Quotes

Abstract

Natural language processing tasks, such as question answering, machine translation, reading comprehension, and summarization, are typically approached with supervised learning on task specific datasets. We demonstrate that language models begin to learn these tasks without any explicit supervision when trained on a new dataset of millions of webpages called WebText. When conditioned on a document plus questions, the answers generated by the language model reach 55 F1 on the CoQA dataset, matching or exceeding the performance of 3 out of 4 baseline systems without using the 127,000+ training examples. The capacity of the language model is essential to the success of zero-shot task transfer and increasing it improves performance in a log-linear fashion across tasks. Our largest model, GPT-2, is a 1.5B parameter Transformer that achieves state of the art results on 7 out of 8 tested language modeling datasets in a zero-shot setting but still underfits WebText. Samples from the model reflect these improvements and contain coherent paragraphs of text. These findings suggest a promising path towards building language processing systems which learn to perform tasks from their naturally occurring demonstrations.

1. Introduction

Machine learning systems now excel (in expectation) at tasks they are trained for by using a combination of large datasets, high-capacity models, and supervised learning (Krizhevsky et al., 2012, Sutskever et al., 2014, Amodei et al., 2016). Yet these systems are brittle and sensitive to slight changes in the data distribution (Recht et al., 2018) and task specification (Kirkpatrick et al., 2017). Current systems are better characterized as narrow experts rather than competent generalists. We would like to move towards more general systems which can perform many tasks, eventually without the need to manually create and label a training dataset for each one.

The dominant approach to creating ML systems is to collect a dataset of training examples demonstrating correct behavior for a desired task, train a system to imitate these behaviors, and then test its performance on independent and identically distributed (IID) held-out examples. This has served well to make progress on narrow experts. But the often erratic behavior of captioning models (Lake et al., 2017), reading comprehension systems (Jia & Liang, 2017), and image classifiers (Alcorn et al., 2018) on the diversity and variety of possible inputs highlights some of the shortcomings of this approach.

Our suspicion is that the prevalence of single task training on single domain datasets is a major contributor to the lack of generalization observed in current systems. Progress towards robust systems with current architectures is likely to require training and measuring performance on a wide range of domains and tasks. Recently, several benchmarks have been proposed such as GLUE (Wang et al., 2018) and decaNLP (McCann et al., 2018) to begin studying this. Multitask learning (Caruana, 1997) is a promising framework for improving general performance. However, multitask training in NLP is still nascent. Recent work reports modest performance improvements (Yogatama et al., 2019) and the two most ambitious efforts to date have trained on a total of 10 and 17 (dataset, objective) pairs respectively (McCann et al., 2018) (Bowman et al., 2018). From a meta-learning perspective, each (dataset, objective) pair is a single training example sampled from the distribution of datasets and objectives. Current ML systems need hundreds to thousands of examples to induce functions which generalize well. This suggests that multitask training may need just as many effective training pairs to realize its promise with current approaches. It will be very difficult to continue to scale the creation of datasets and the design of objectives to the degree that may be required to brute force our way there with current techniques. This motivates exploring additional setups for performing multitask learning.

The current best performing systems on language tasks utilize a combination of pre-training and supervised fine-tuning. This approach has a long history with a trend towards more flexible forms of transfer. First, word vectors were learned and used as inputs to task-specific architectures (Mikolov et al., 2013, Collobert et al., 2011), then the contextual representations of recurrent networks were transferred (Dai & Le, 2015, Peters et al., 2018), and recent work suggests that task-specific architectures are no longer necessary and transferring many self-attention blocks is sufficient (Radford et al., 2018, Devlin et al., 2018).

These methods still require supervised training in order to perform a task. When only minimal or no supervised data is available, another line of work has demonstrated the promise of language models to perform specific tasks, such as commonsense reasoning (Schwartz et al., 2017) and sentiment analysis (Radford et al., 2017).

In this paper, we connect these two lines of work and continue the trend of more general methods of transfer. We demonstrate language models can perform down-stream tasks in a zero-shot setting - without any parameter or architecture modification. We demonstrate this approach shows potential by highlighting the ability of language models to perform a wide range of tasks in a zero-shot setting. We achieve promising, competitive, and state-of-the-art results depending on the task.

2. Approach

At the core of our approach is language modeling. Language modeling is usually framed as unsupervised distribution estimation from a set of examples [math]\displaystyle{ (x_1, x_2,\cdots ,x_n) }[/math] each composed of variable length sequences of symbols [math]\displaystyle{ (s_1, s_2, \cdots, s_n) }[/math]. Since language has a natural sequential ordering, it is common to factorize the joint probabilities over symbols as the product of conditional probabilities (Jelinek & Mercer, 1980, Bengio et al., 2003):

[math]\displaystyle{ p(x)=\prod_{i=1}^n p\left(s_i|s_1,\cdots,s_{i-1}\right) \quad \quad (1) }[/math]

This approach allows for tractable sampling from and estimation of [math]\displaystyle{ p(x) }[/math] as well as any conditionals of the form [math]\displaystyle{ p(s_{n-k},\cdots, s_n|s_1, \cdots, s_{n-k-1}) }[/math]. In recent years, there have been significant improvements in the expressiveness of models that can compute these conditional probabilities, such as self-attention architectures like the Transformer (Vaswani et al., 2017).
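The factorization above can be made concrete with a small sketch. The bigram table and the `cond_prob` helper below are hypothetical stand-ins for a trained model (a real LM would condition on the whole prefix, not just the previous symbol); the point is only that the sequence probability is accumulated one conditional at a time.

```python
import math

# Hypothetical toy model: p(next symbol | previous symbol only).
# "<s>" is an assumed start-of-sequence marker.
BIGRAM = {
    ("<s>", "a"): 0.5, ("<s>", "b"): 0.5,
    ("a", "a"): 0.25, ("a", "b"): 0.75,
    ("b", "a"): 0.5, ("b", "b"): 0.5,
}

def cond_prob(symbol, prefix):
    """Return p(symbol | prefix) under the toy model."""
    prev = prefix[-1] if prefix else "<s>"
    return BIGRAM[(prev, symbol)]

def sequence_log_prob(symbols):
    """Sum log p(s_i | s_1..s_{i-1}) over all positions (chain rule in log space)."""
    total = 0.0
    for i, s in enumerate(symbols):
        total += math.log(cond_prob(s, symbols[:i]))
    return total

log_p = sequence_log_prob(["a", "b", "b"])  # log(0.5 * 0.75 * 0.5)
```

Working in log space avoids numerical underflow on long sequences, which is why benchmark metrics are usually derived from summed log-probabilities.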

Learning to perform a single task can be expressed in a probabilistic framework as estimating a conditional distribution p(output|input). Since a general system should be able to perform many different tasks, even for the same input, it should condition not only on the input but also on the task to be performed. That is, it should model p(output|input, task). This has been variously formalized in multitask and meta-learning settings. Task conditioning is often implemented at an architectural level, such as the task specific encoders and decoders in (Kaiser et al., 2017) or at an algorithmic level such as the inner and outer loop optimization framework of MAML (Finn et al., 2017). But as exemplified in McCann et al. (2018), language provides a flexible way to specify tasks, inputs, and outputs all as a sequence of symbols. For example, a translation training example can be written as the sequence (translate to french, english text, french text). Likewise, a reading comprehension training example can be written as (answer the question, document, question, answer). McCann et al. (2018) demonstrated it was possible to train a single model, the MQAN, to infer and perform many different tasks on examples with this type of format.

Language modeling is also able to, in principle, learn the tasks of McCann et al. (2018) without the need for explicit supervision of which symbols are the outputs to be predicted. Since the supervised objective is the same as the unsupervised objective but only evaluated on a subset of the sequence, the global minimum of the unsupervised objective is also the global minimum of the supervised objective. In this slightly toy setting, the concerns with density estimation as a principled training objective discussed in (Sutskever et al., 2015) are sidestepped. The problem instead becomes whether we are able to, in practice, optimize the unsupervised objective to convergence. Preliminary experiments confirmed that sufficiently large language models are able to perform multitask learning in this toy-ish setup but learning is much slower than in explicitly supervised approaches.

While it is a large step from the well-posed setup described above to the messiness of “language in the wild”, Weston (2016) argues, in the context of dialog, for the need to develop systems capable of learning from natural language directly and demonstrated a proof of concept: learning a QA task without a reward signal by using forward prediction of a teacher’s outputs. While dialog is an attractive approach, we worry it is overly restrictive. The internet contains a vast amount of information that is passively available without the need for interactive communication. Our speculation is that a language model with sufficient capacity will begin to learn to infer and perform the tasks demonstrated in natural language sequences in order to better predict them, regardless of their method of procurement. If a language model is able to do this it will be, in effect, performing unsupervised multitask learning. We test whether this is the case by analyzing the performance of language models in a zero-shot setting on a wide variety of tasks.

2.1. Training Dataset

Most prior work trained language models on a single domain of text, such as news articles (Jozefowicz et al., 2016), Wikipedia (Merity et al., 2016), or fiction books (Kiros et al., 2015). Our approach motivates building as large and diverse a dataset as possible in order to collect natural language demonstrations of tasks in as varied of domains and contexts as possible.

A promising source of diverse and nearly unlimited text is web scrapes such as Common Crawl. While these archives are many orders of magnitude larger than current language modeling datasets, they have significant data quality issues. Trinh & Le (2018) used Common Crawl in their work on commonsense reasoning but noted a large amount of documents “whose content are mostly unintelligible”. We observed similar data issues in our initial experiments with Common Crawl. Trinh & Le (2018)’s best results were achieved using a small subsample of Common Crawl which included only documents most similar to their target dataset, the Winograd Schema Challenge. While this is a pragmatic approach to improve performance on a specific task, we want to avoid making assumptions about the tasks to be performed ahead of time.

Instead, we created a new web scrape which emphasizes document quality. To do this we only scraped web pages which have been curated/filtered by humans. Manually filtering a full web scrape would be exceptionally expensive so as a starting point, we scraped all outbound links from Reddit, a social media platform, which received at least 3 karma. This can be thought of as a heuristic indicator for whether other users found the link interesting, educational, or just funny.

The resulting dataset, WebText, contains the text subset of these 45 million links. To extract the text from HTML responses we use a combination of the Dragnet (Peters & Lecocq, 2013) and Newspaper[1] content extractors. All results presented in this paper use a preliminary version of WebText which does not include links created after Dec 2017 and which after de-duplication and some heuristic based cleaning contains slightly over 8 million documents for a total of 40 GB of text. We removed all Wikipedia documents from WebText since it is a common data source for other datasets and could complicate analysis due to overlapping training data with test evaluation tasks.
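The link-selection heuristic described above can be sketched as a simple filter. This is only an illustration under stated assumptions: the `Link` record, the `select_links` helper, and the hostname test for Wikipedia are hypothetical, and the quoted text does not specify the actual pipeline at this level of detail (for instance, its de-duplication operated on documents, whereas this sketch de-duplicates URLs).

```python
from dataclasses import dataclass
from urllib.parse import urlparse

@dataclass(frozen=True)
class Link:
    url: str
    karma: int  # score of the submission that carried the link

def select_links(links, min_karma=3):
    """Keep each URL once if a submission of it reached min_karma; skip Wikipedia."""
    seen = set()
    kept = []
    for link in links:
        host = urlparse(link.url).netloc
        if link.karma < min_karma:
            continue  # not enough evidence that readers found it worthwhile
        if host.endswith("wikipedia.org"):
            continue  # excluded to limit overlap with evaluation datasets
        if link.url in seen:
            continue  # crude URL-level de-duplication (assumption)
        seen.add(link.url)
        kept.append(link.url)
    return kept
```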

| “I’m not the cleverest man in the world, but like they say in French: Je ne suis pas un imbecile [I’m not a fool]. |

| In a now-deleted post from Aug. 16, Soheil Eid, Tory candidate in the riding of Joliette, wrote in French: “Mentez mentez, il en restera toujours quelque chose”, which translates as, “Lie lie and something will always remain”. |

| “I hate the word “perfume”, Burr says. ‘It’s somewhat better in French: “parfum”. |

| If listened carefully at 29:55, a conversation can be heard between two guys in French: “-Comment on fait pour aller de l’autre coté? -Quel autre coté?”, which means “- How do you get to the other side? - What side?”. |

| If this sounds like a bit of a stretch, consider this question in French: As-tu aller au cinéma?, or Did you go to the movies?, which literally translates as Have-you to go to movies/theater? |

| “Brevet Sans Garantie Du Gouvernement”, translated to English: “Patented without government warranty”. |

2.2. Input Representation

A general language model (LM) should be able to compute the probability of (and also generate) any string. Current large scale LMs include pre-processing steps such as lowercasing, tokenization, and out-of-vocabulary tokens which restrict the space of modelable strings. While processing Unicode strings as a sequence of UTF-8 bytes elegantly fulfills this requirement as exemplified in work such as Gillick et al. (2015), current byte-level LMs are not competitive with word-level LMs on large scale datasets such as the One Billion Word Benchmark (Al-Rfou et al., 2018). We observed a similar performance gap in our own attempts to train standard byte-level LMs on WebText.

Byte Pair Encoding (BPE) (Sennrich et al., 2015) is a practical middle ground between character and word level language modeling which effectively interpolates between word level inputs for frequent symbol sequences and character level inputs for infrequent symbol sequences. Despite its name, reference BPE implementations often operate on Unicode code points and not byte sequences. These implementations would require including the full space of Unicode symbols in order to model all Unicode strings. This would result in a base vocabulary of over 130,000 before any multi-symbol tokens are added. This is prohibitively large compared to the 32,000 to 64,000 token vocabularies often used with BPE. In contrast, a byte-level version of BPE only requires a base vocabulary of size 256. However, directly applying BPE to the byte sequence results in suboptimal merges due to BPE using a greedy frequency based heuristic for building the token vocabulary. We observed BPE including many versions of common words like dog since they occur in many variations such as dog., dog!, dog?. This results in a suboptimal allocation of limited vocabulary slots and model capacity. To avoid this, we prevent BPE from merging across character categories for any byte sequence. We add an exception for spaces which significantly improves the compression efficiency while adding only minimal fragmentation of words across multiple vocab tokens.
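One way to picture the restriction on merges is to pre-split text into chunks of a single character category, attach an optional leading space to each chunk (the exception for spaces), and only count byte pairs inside a chunk. The sketch below is an assumption-laden approximation using the standard library: the `CHUNK_PATTERN` regex and both helper names are illustrative and are not the pattern used by the released tokenizer.

```python
import re
from collections import Counter

# Illustrative pattern (assumption): letters, digits, or other symbols,
# each optionally preceded by one space; remaining whitespace is its own chunk.
CHUNK_PATTERN = re.compile(r" ?[^\W\d_]+| ?\d+| ?(?:[^\s\w]|_)+|\s+")

def split_into_chunks(text):
    """Split text so that no chunk mixes character categories."""
    return CHUNK_PATTERN.findall(text)

def byte_pair_counts(text):
    """Count adjacent byte pairs, never pairing bytes from different chunks."""
    counts = Counter()
    for chunk in split_into_chunks(text):
        data = chunk.encode("utf-8")  # base vocabulary: the 256 byte values
        counts.update(zip(data, data[1:]))
    return counts

chunks = split_into_chunks("dog. dog! dog?")
pairs = byte_pair_counts("dog. dog! dog?")
```

Because the pair `g` followed by `.` is never counted, a greedy merge procedure driven by these counts cannot create separate tokens for `dog.`, `dog!`, and `dog?`, while ` dog` with its leading space remains mergeable.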

This input representation allows us to combine the empirical benefits of word-level LMs with the generality of byte-level approaches. Since our approach can assign a probability to any Unicode string, this allows us to evaluate our LMs on any dataset regardless of pre-processing, tokenization, or vocab size.

2.3. Model

We use a Transformer (Vaswani et al., 2017) based architecture for our LMs. The model largely follows the details of the OpenAI GPT model (Radford et al., 2018) with a few modifications. Layer normalization (Ba et al., 2016) was moved to the input of each sub-block, similar to a pre-activation residual network (He et al., 2016) and an additional layer normalization was added after the final self-attention block. A modified initialization which accounts for the accumulation on the residual path with model depth is used. We scale the weights of residual layers at initialization by a factor of [math]\displaystyle{ 1 /\sqrt{N} }[/math] where [math]\displaystyle{ N }[/math] is the number of residual layers. The vocabulary is expanded to 50,257. We also increase the context size from 512 to 1024 tokens and a larger batch size of 512 is used.
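The two architectural changes described above, layer normalization at the input of each sub-block and residual-path weights scaled by 1/√N at initialization, can be sketched in plain Python. The base standard deviation of 0.02, the absence of learned gain and bias in `layer_norm`, and all function names are assumptions made for illustration only.

```python
import math
import random

def layer_norm(x, eps=1e-5):
    """Normalize a vector to zero mean and unit variance (no learned gain/bias here)."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]

def pre_ln_residual(x, sublayer):
    """Pre-normalization ordering: x + sublayer(layer_norm(x))."""
    y = sublayer(layer_norm(x))
    return [xi + yi for xi, yi in zip(x, y)]

def init_residual_projection(d_in, d_out, n_residual_layers, base_std=0.02, seed=0):
    """Gaussian init for a residual-path projection, scaled by 1/sqrt(N)."""
    rng = random.Random(seed)
    scale = 1.0 / math.sqrt(n_residual_layers)
    return [[rng.gauss(0.0, base_std) * scale for _ in range(d_out)]
            for _ in range(d_in)]
```

The scaling keeps the variance contributed by the sum of N residual branches roughly independent of depth at initialization, since each branch starts out about √N times smaller.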

3. Experiments

We trained and benchmarked four LMs with approximately log-uniformly spaced sizes. The architectures are summarized in Table 2. The smallest model is equivalent to the original GPT, and the second smallest equivalent to the largest model from BERT (Devlin et al., 2018). Our largest model, which we call GPT-2, has over an order of magnitude more parameters than GPT. The learning rate of each model was manually tuned for the best perplexity on a 5% held-out sample of WebText. All models still underfit WebText and held-out perplexity has as of yet improved given more training time.

| Parameters | Layers | d_model |
|---|---|---|
| 117M | 12 | 768 |
| 345M | 24 | 1024 |
| 762M | 36 | 1280 |
| 1542M | 48 | 1600 |
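As a rough sanity check on the table, a decoder-only Transformer's parameter count can be estimated from the layer count and model width. The sketch below assumes a feed-forward hidden width of four times the model width (so about 12·d² weights per layer) and ignores biases and layer-norm parameters; neither assumption is stated in the quoted text, and the estimates land near, not exactly on, the reported figures.

```python
def approx_params(n_layers, d_model, vocab_size=50257, context=1024):
    """Rough parameter estimate; ignores biases and layer-norm parameters."""
    per_layer = 12 * d_model ** 2  # ~4*d^2 attention + ~8*d^2 feed-forward (assumed 4x width)
    embeddings = (vocab_size + context) * d_model  # token + position embeddings
    return n_layers * per_layer + embeddings

# (reported size in millions, layers, model width) from the table above
table = [(117, 12, 768), (345, 24, 1024), (762, 36, 1280), (1542, 48, 1600)]
estimates = {reported: approx_params(layers, d) / 1e6 for reported, layers, d in table}
```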

3.1. Language Modeling

As an initial step towards zero-shot task transfer, we are interested in understanding how WebText LMs perform at zero-shot domain transfer on the primary task they are trained for: language modeling. Since our model operates on a byte level and does not require lossy pre-processing or tokenization, we can evaluate it on any language model benchmark. Results on language modeling datasets are commonly reported in a quantity which is a scaled or exponentiated version of the average negative log probability per canonical prediction unit, usually a character, a byte, or a word. We evaluate the same quantity by computing the log-probability of a dataset according to a WebText LM and dividing by the number of canonical units. For many of these datasets, WebText LMs would be tested significantly out-of-distribution, having to predict aggressively standardized text, tokenization artifacts such as disconnected punctuation and contractions, shuffled sentences, and even the string <UNK> which is extremely rare in WebText, occurring only 26 times in 40 billion bytes. We report our main results in Table 3 using invertible de-tokenizers which remove as many of these tokenization/pre-processing artifacts as possible. Since these de-tokenizers are invertible, we can still calculate the log probability of a dataset and they can be thought of as a simple form of domain adaptation. We observe gains of 2.5 to 5 perplexity for GPT-2 with these de-tokenizers.
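The "scaled or exponentiated" quantities mentioned above can be written out directly. In this minimal sketch, `total_log_prob` is assumed to be the natural-log probability the model assigns to the whole dataset (summed over its own tokens), and `n_units` is the number of canonical units the benchmark counts (words for perplexity, bytes or characters for bits-per-unit); both function names are illustrative.

```python
import math

def perplexity(total_log_prob, n_units):
    """Exponentiated average negative log-probability per canonical unit."""
    return math.exp(-total_log_prob / n_units)

def bits_per_unit(total_log_prob, n_units):
    """Average negative log-probability per unit, rescaled from nats to bits."""
    return -total_log_prob / (n_units * math.log(2))

# A model that spreads probability uniformly over 4 options for each of 10 units:
uniform_log_prob = 10 * math.log(0.25)
ppl = perplexity(uniform_log_prob, 10)      # about 4
bits = bits_per_unit(uniform_log_prob, 10)  # about 2
```

Dividing by the benchmark's unit count rather than the model's own token count is what makes results comparable across models with different tokenizations.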

| | LAMBADA (PPL) | LAMBADA (ACC) | CBT-CN (ACC) | CBT-NE (ACC) | WikiText2 (PPL) | PTB (PPL) | enwik8 (BPB) | text8 (BPC) | WikiText103 (PPL) | 1BW (PPL) |
|---|---|---|---|---|---|---|---|---|---|---|
| SOTA | 99.8 | 59.23 | 85.7 | 82.3 | 39.14 | 46.54 | 0.99 | 1.08 | 18.3 | 21.8 |
| 117M | 35.13 | 45.99 | 87.65 | 83.4 | 29.41 | 65.85 | 1.16 | 1.17 | 37.50 | 75.20 |
| 345M | 15.60 | 55.48 | 92.35 | 87.1 | 22.76 | 47.33 | 1.01 | 1.06 | 26.37 | 55.72 |
| 762M | 10.87 | 60.12 | 93.45 | 88.0 | 19.93 | 40.31 | 0.97 | 1.02 | 22.05 | 44.575 |
| 1542M | 8.63 | 63.24 | 93.30 | 89.05 | 18.34 | 35.76 | 0.93 | 0.98 | 17.48 | 42.16 |

WebText LMs transfer well across domains and datasets, improving the state of the art on 7 out of the 8 datasets in a zero-shot setting. Large improvements are noticed on small datasets such as Penn Treebank and WikiText-2 which have only 1 to 2 million training tokens. Large improvements are also noticed on datasets created to measure long-term dependencies like LAMBADA (Paperno et al., 2016) and the Children’s Book Test (Hill et al., 2015). Our model is still significantly worse than prior work on the One Billion Word Benchmark (Chelba et al., 2013). This is likely due to a combination of it being both the largest dataset and having some of the most destructive pre-processing - 1BW’s sentence level shuffling removes all long-range structure.

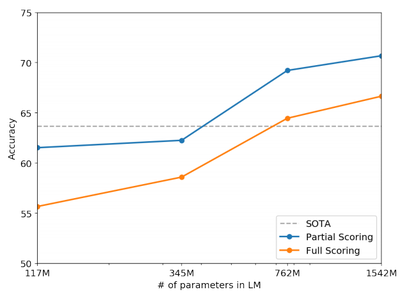

3.2. Children’s Book Test

The Children’s Book Test (CBT) (Hill et al., 2015) was created to examine the performance of LMs on different categories of words: named entities, nouns, verbs, and prepositions. Rather than reporting perplexity as an evaluation metric, CBT reports accuracy on an automatically constructed cloze test where the task is to predict which of 10 possible choices for an omitted word is correct. Following the LM approach introduced in the original paper, we compute the probability of each choice and the rest of the sentence conditioned on this choice according to the LM, and predict the one with the highest probability. As seen in Figure 2 performance steadily improves as model size is increased and closes the majority of the gap to human performance on this test. Data overlap analysis showed one of the CBT test set books, The Jungle Book by Rudyard Kipling, is in WebText, so we report results on the validation set which has no significant overlap. GPT-2 achieves new state of the art results of 93.3% on common nouns and 89.1% on named entities. A de-tokenizer was applied to remove PTB style tokenization artifacts from CBT.
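The cloze procedure described above amounts to filling the blank with each candidate, scoring the completed text with the LM, and taking the highest-scoring candidate. In the sketch below, `log_prob_fn` is a hypothetical stand-in for a model's sequence scorer and the `XXXXX` blank marker is an assumption about the input format.

```python
def predict_cloze(text_with_blank, choices, log_prob_fn, blank="XXXXX"):
    """Return the choice whose filled-in text gets the highest LM log-probability."""
    def score(choice):
        # Scores the choice together with the rest of the sentence that follows it.
        return log_prob_fn(text_with_blank.replace(blank, choice))
    return max(choices, key=score)

# Toy scorer for demonstration only; a real one would query a language model.
toy_scores = {"the cat sat": -1.0, "the dog sat": -2.0}
toy_log_prob = lambda s: toy_scores.get(s, float("-inf"))
answer = predict_cloze("the XXXXX sat", ["dog", "cat", "car"], toy_log_prob)
```

Scoring the full filled-in text is equivalent to scoring the choice plus the remainder given the shared prefix, because the prefix contributes the same factor to every candidate.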

3.3. LAMBADA

The LAMBADA dataset (Paperno et al., 2016) tests the ability of systems to model long-range dependencies in text. The task is to predict the final word of sentences which require at least 50 tokens of context for a human to successfully predict. GPT-2 improves the state of the art from 99.8 (Grave et al., 2016) to 8.6 perplexity and increases the accuracy of LMs on this test from 19% (Dehghani et al., 2018) to 52.66%. Investigating GPT-2’s errors showed most predictions are valid continuations of the sentence, but are not valid final words. This suggests that the LM is not using the additional useful constraint that the word must be the final of the sentence. Adding a stop-word filter as an approximation to this further increases accuracy to 63.24%, improving the overall state of the art on this task by 4%. The previous state of the art (Hoang et al., 2018) used a different restricted prediction setting where the outputs of the model were constrained to only words that appeared in the context. For GPT-2, this restriction is harmful rather than helpful since 19% of answers are not in context. We use a version of the dataset without preprocessing.
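A stop-word filter of the kind mentioned above could look like the following sketch. The quoted text does not say which stop-word list was used or how the filter was applied during decoding, so the tiny `STOP_WORDS` set and the `predict_final_word` helper are purely illustrative assumptions.

```python
STOP_WORDS = {"the", "a", "an", "and", "of", "to", "in"}  # illustrative, not the paper's list

def predict_final_word(candidates, stop_words=STOP_WORDS):
    """Pick the most probable candidate that is not a stop word.

    candidates: list of (word, probability) pairs in any order.
    Returns None if every candidate is a stop word.
    """
    ranked = sorted(candidates, key=lambda wp: wp[1], reverse=True)
    for word, _ in ranked:
        if word.lower() not in stop_words:
            return word
    return None

prediction = predict_final_word([("the", 0.4), ("door", 0.3), ("and", 0.2)])
```

The intuition is that stop words are frequently plausible continuations but rarely end a sentence, so excluding them approximates the constraint that the answer must be a final word.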

3.4. Winograd Schema Challenge

The Winograd Schema challenge (Levesque et al., 2012) was constructed to measure the capability of a system to perform commonsense reasoning by measuring its ability to resolve ambiguities in text. Recently Trinh & Le (2018) demonstrated significant progress on this challenge using LMs, by predicting the resolution of the ambiguity with higher probability. We follow their problem formulation and visualize the performance of our models with both full and partial scoring techniques in Figure 3. GPT-2 improves state of the art accuracy by 7%, achieving 70.70%. The dataset is quite small with only 273 examples so we recommend reading Trichelair et al. (2018) to help contextualize this result.

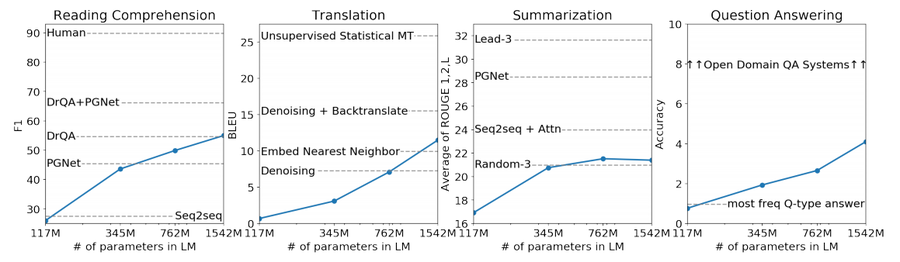

3.5. Reading Comprehension

The Conversation Question Answering dataset (CoQA) Reddy et al. (2018) consists of documents from 7 different domains paired with natural language dialogues between a question asker and a question answerer about the document. CoQA tests reading comprehension capabilities and also the ability of models to answer questions that depend on conversation history (such as “Why?”).

Greedy decoding from GPT-2 when conditioned on a document, the history of the associated conversation, and a final token A: achieves 55 F1 on the development set. This matches or exceeds the performance of 3 out of 4 baseline systems without using the 127,000+ manually collected question answer pairs those baselines were trained on. The supervised SOTA, a BERT based system (Devlin et al., 2018), is nearing the 89 F1 performance of humans. While GPT-2’s performance is exciting for a system without any supervised training, some inspection of its answers and errors suggests GPT-2 often uses simple retrieval based heuristics such as answer with a name from the document in response to a who question.

3.6. Summarization

We test GPT-2’s ability to perform summarization on the CNN and Daily Mail dataset (Nallapati et al., 2016). To induce summarization behavior we add the text TL;DR: after the article and generate 100 tokens with Top-k random sampling (Fan et al., 2018) with k = 2 which reduces repetition and encourages more abstractive summaries than greedy decoding. We use the first 3 generated sentences in these 100 tokens as the summary. While qualitatively the generations resemble summaries, as shown in Table 14, they often focus on recent content from the article or confuse specific details such as how many cars were involved in a crash or whether a logo was on a hat or shirt. On the commonly reported ROUGE 1, 2, L metrics the generated summaries only begin to approach the performance of classic neural baselines and just barely outperform selecting 3 random sentences from the article. GPT-2’s performance drops by 6.4 points on the aggregate metric when the task hint is removed which demonstrates the ability to invoke task specific behavior in a language model with natural language.
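Top-k random sampling, as referenced above, restricts each sampling step to the k highest-scoring tokens and samples among them in proportion to their renormalized probabilities. The sketch below works on a plain list of logits; the function name and the example logits are hypothetical, and a real decoder would call this once per generated token.

```python
import math
import random

def top_k_sample(logits, k, rng):
    """Sample an index from the k highest logits, weighted by softmax over those k."""
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    peak = max(logits[i] for i in top)
    weights = [math.exp(logits[i] - peak) for i in top]  # subtract peak for stability
    return rng.choices(top, weights=weights, k=1)[0]

rng = random.Random(0)
logits = [2.0, 1.0, 0.5, -1.0]
samples = [top_k_sample(logits, 2, rng) for _ in range(200)]
```

With k = 2 only the two most likely tokens can ever be chosen, which leaves some randomness while excluding the low-probability tail; k = 1 reduces to greedy decoding.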

| | R-1 | R-2 | R-L | R-AVG |
|---|---|---|---|---|
| Bottom-Up Sum | 41.22 | 18.68 | 38.34 | 32.75 |
| Lede-3 | 40.38 | 17.66 | 36.62 | 31.55 |
| Seq2Seq + Attn | 31.33 | 11.81 | 28.83 | 23.99 |
| GPT-2 TL;DR: | 29.34 | 8.27 | 26.58 | 21.40 |
| Random-3 | 28.78 | 8.63 | 25.52 | 20.98 |
| GPT-2 no hint | 21.58 | 4.03 | 19.47 | 15.03 |
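The aggregate column in the table appears to be the unweighted mean of the three ROUGE scores, and the 6.4-point drop quoted in the text matches the difference between the two GPT-2 rows. The short check below recomputes both from the table values; the interpretation of R-AVG as a simple mean is inferred from the numbers rather than stated in the quoted text.

```python
# (R-1, R-2, R-L, reported R-AVG) copied from the table above
rows = {
    "Bottom-Up Sum": (41.22, 18.68, 38.34, 32.75),
    "Lede-3": (40.38, 17.66, 36.62, 31.55),
    "Seq2Seq + Attn": (31.33, 11.81, 28.83, 23.99),
    "GPT-2 TL;DR:": (29.34, 8.27, 26.58, 21.40),
    "Random-3": (28.78, 8.63, 25.52, 20.98),
    "GPT-2 no hint": (21.58, 4.03, 19.47, 15.03),
}

# Recompute the aggregate as a plain mean of the three metrics.
recomputed = {name: (r1 + r2 + rl) / 3 for name, (r1, r2, rl, _) in rows.items()}

# Drop in the aggregate metric when the task hint is removed.
hint_drop = rows["GPT-2 TL;DR:"][3] - rows["GPT-2 no hint"][3]
```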

3.7. Translation

We test whether GPT-2 has begun to learn how to translate from one language to another. In order to help it infer that this is the desired task, we condition the language model on a context of example pairs of the format english sentence = french sentence and then after a final prompt of english sentence = we sample from the model with greedy decoding and use the first generated sentence as the translation. On the WMT-14 English-French test set, GPT-2 gets 5 BLEU, which is slightly worse than a word-by-word substitution with a bilingual lexicon inferred in previous work on unsupervised word translation (Conneau et al., 2017b). On the WMT-14 French-English test set, GPT-2 is able to leverage its very strong English language model to perform significantly better, achieving 11.5 BLEU. This outperforms several unsupervised machine translation baselines from (Artetxe et al., 2017) and (Lample et al., 2017) but is still much worse than the 33.5 BLEU of the current best unsupervised machine translation approach (Artetxe et al., 2019). Performance on this task was surprising to us, since we deliberately removed non-English webpages from WebText as a filtering step. In order to confirm this, we ran a byte-level language detector[2] on WebText which detected only 10MB of data in the French language which is approximately 500x smaller than the monolingual French corpus common in prior unsupervised machine translation research.
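The prompting scheme described above can be sketched as plain string construction. The sketch assumes that example pairs are separated by newlines and that the final prompt ends with the same `=` separator as the example pairs; both helper names are hypothetical, and the sentence splitter is a deliberately crude stand-in for whatever rule selected "the first generated sentence".

```python
import re

def build_translation_prompt(example_pairs, source_sentence):
    """Format in-context examples as 'english = french' lines, then the open prompt."""
    lines = [f"{en} = {fr}" for en, fr in example_pairs]
    lines.append(f"{source_sentence} =")
    return "\n".join(lines)  # newline separator is an assumption

def first_sentence(generated):
    """Keep text up to the first sentence-ending mark on the first line."""
    text = generated.strip().split("\n", 1)[0]
    match = re.search(r"[.!?]", text)
    return text[: match.end()] if match else text

prompt = build_translation_prompt(
    [("good morning", "bonjour"), ("thank you", "merci")],
    "the cat sleeps",
)
translation = first_sentence(" Le chat dort. Il pleut.\nautre ligne")
```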

3.8. Question Answering

A potential way to test what information is contained within a language model is to evaluate how often it generates the correct answer to factoid-style questions. Previous showcasing of this behavior in neural systems where all information is stored in parameters such as A Neural Conversational Model (Vinyals & Le, 2015) reported qualitative results due to the lack of high-quality evaluation datasets. The recently introduced Natural Questions dataset (Kwiatkowski et al., 2019) is a promising resource to test this more quantitatively. Similar to translation, the context of the language model is seeded with example question answer pairs which helps the model infer the short answer style of the dataset. GPT-2 answers 4.1% of questions correctly when evaluated by the exact match metric commonly used on reading comprehension datasets like SQUAD[3]. As a comparison point, the smallest model does not exceed the 1.0% accuracy of an incredibly simple baseline which returns the most common answer for each question type (who, what, where, etc...). GPT-2 answers 5.3 times more questions correctly, suggesting that model capacity has been a major factor in the poor performance of neural systems on this kind of task as of yet. The probability GPT-2 assigns to its generated answers is well calibrated and GPT-2 has an accuracy of 63.1% on the 1% of questions it is most confident in. The 30 most confident answers generated by GPT-2 on development set questions are shown in Table 5. The performance of GPT-2 is still much, much, worse than the 30 to 50% range of open domain question answering systems which hybridize information retrieval with extractive document question answering (Alberti et al., 2019).

| Question | Generated Answer | Correct | Probability |
|---|---|---|---|
| Who wrote the book the origin of species? | Charles Darwin | ✔ | 83.4% |
| Who is the founder of the ubuntu project? | Mark Shuttleworth | ✔ | 82.0% |
| Who is the quarterback for the green bay packers? | Aaron Rodgers | ✔ | 81.1% |
| Panda is a national animal of which country? | China | ✔ | 76.8% |
| Who came up with the theory of relativity? | Albert Einstein | ✔ | 76.4% |
| When was the first star wars film released? | 1977 | ✔ | 71.4% |
| What is the most common blood type in sweden? | A | ❌ | 70.6% |
| Who is regarded as the founder of psychoanalysis? | Sigmund Freud | ✔ | 69.3% |
| Who took the first steps on the moon in 1969? | Neil Armstrong | ✔ | 66.8% |
| Who is the largest supermarket chain in the uk? | Tesco | ✔ | 65.3% |
| What is the meaning of shalom in english? | peace | ✔ | 64.0% |
| Who was the author of the art of war? | Sun Tzu | ✔ | 59.6% |
| Largest state in the us by land mass? | California | ❌ | 59.2% |
| Green algae is an example of which type of reproduction? | parthenogenesis | ❌ | 56.5% |
| Vikram samvat calender is official in which country? | India | ✔ | 55.6% |
| Who is mostly responsible for writing the declaration of independence? | Thomas Jefferson | ✔ | 53.3% |
| What us state forms the western boundary of montana? | Montana | ❌ | 52.3% |
| Who plays ser davos in game of thrones? | Peter Dinklage | ❌ | 52.1% |
| Who appoints the chair of the federal reserve system? | Janet Yellen | ❌ | 51.5% |
| State the process that divides one nucleus into two genetically identical nuclei? | mitosis | ✔ | 50.7% |
| Who won the most mvp awards in the nba? | Michael Jordan | ❌ | 50.2% |
| What river is associated with the city of rome? | the Tiber | ✔ | 48.6% |
| Who is the first president to be impeached? | Andrew Johnson | ✔ | 48.3% |
| Who is the head of the department of homeland security 2017? | John Kelly | ✔ | 47.0% |
| What is the name given to the common currency to the european union? | Euro | ✔ | 46.8% |
| What was the emperor name in star wars? | Palpatine | ✔ | 46.5% |
| Do you have to have a gun permit to shoot at a range? | No | ✔ | 46.4% |
| Who proposed evolution in 1859 as the basis of biological development? | Charles Darwin | ✔ | 45.7% |
| Nuclear power plant that blew up in russia? | Chernobyl | ✔ | 45.7% |
| Who played john connor in the original terminator? | Arnold Schwarzenegger | ❌ | 45.2% |
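The exact-match scoring and the accuracy on the most confident answers discussed in this section can be sketched as below. The normalization follows the common reading-comprehension convention (lower-casing, dropping punctuation and English articles); whether the paper used exactly these steps is an assumption, and the function names and record layout are hypothetical.

```python
import re
import string


def normalize_answer(text):
    """Lower-case, drop punctuation and English articles, collapse whitespace."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in string.punctuation)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())


def exact_match(prediction, gold_answers):
    """True if the normalized prediction equals any normalized gold answer."""
    pred = normalize_answer(prediction)
    return any(pred == normalize_answer(gold) for gold in gold_answers)


def accuracy_at_top_confidence(records, fraction):
    """Accuracy on the `fraction` of answers with the highest model probability.

    records: list of (probability, is_correct) pairs (hypothetical layout).
    """
    ranked = sorted(records, key=lambda record: record[0], reverse=True)
    k = max(1, int(len(ranked) * fraction))
    return sum(1 for _, correct in ranked[:k] if correct) / k
```

With `fraction=0.01` the last function corresponds to the "1% of questions it is most confident in" statistic, provided each record carries the probability the model assigned to its generated answer.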

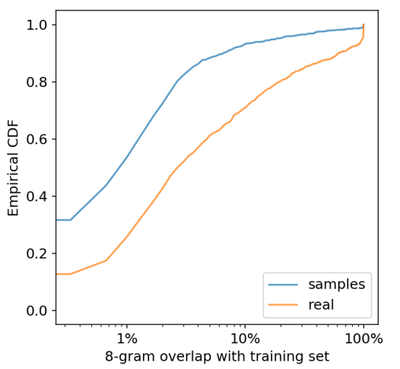

4. Generalization vs Memorization

Recent work in computer vision has shown that common image datasets contain a non-trivial amount of near-duplicate images. For instance CIFAR-10 has 3.3% overlap between train and test images (Barz & Denzler, 2019). This results in an over-reporting of the generalization performance of machine learning systems. As the size of datasets increases this issue becomes increasingly likely, which suggests a similar phenomenon could be happening with WebText. Therefore it is important to analyze how much test data also shows up in the training data.

To study this we created Bloom filters containing 8-grams of WebText training set tokens. To improve recall, strings were normalized to contain only lower-cased alphanumeric words with a single space as a delimiter. The Bloom filters were constructed such that the false positive rate is upper bounded by $\frac{1}{10^8}$. We further verified the low false positive rate by generating 1M strings, of which zero were found by the filter.
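A minimal sketch of this procedure is given below, assuming ASCII-only normalization, SHA-256 based double hashing, and the textbook Bloom filter sizing formulas. None of these implementation choices come from the paper, and the class and function names are hypothetical.

```python
import hashlib
import math
import re


def normalize(text):
    """Lower-cased alphanumeric words separated by single spaces (ASCII only)."""
    return " ".join(re.findall(r"[a-z0-9]+", text.lower()))


def ngrams(text, n=8):
    """All word-level n-grams of the normalized text."""
    words = normalize(text).split()
    return [" ".join(words[i:i + n]) for i in range(len(words) - n + 1)]


class BloomFilter:
    def __init__(self, expected_items, false_positive_rate):
        # Textbook sizing: m = -n ln(p) / (ln 2)^2 bits, k = (m / n) ln 2 hashes.
        bits = -expected_items * math.log(false_positive_rate) / math.log(2) ** 2
        self.num_bits = max(8, math.ceil(bits))
        self.num_hashes = max(1, round(self.num_bits / expected_items * math.log(2)))
        self.bits = bytearray((self.num_bits + 7) // 8)

    def _positions(self, item):
        # Double hashing: derive k bit positions from two 64-bit values.
        digest = hashlib.sha256(item.encode("utf-8")).digest()
        h1 = int.from_bytes(digest[:8], "big")
        h2 = int.from_bytes(digest[8:16], "big") | 1
        return [(h1 + i * h2) % self.num_bits for i in range(self.num_hashes)]

    def add(self, item):
        for pos in self._positions(item):
            self.bits[pos // 8] |= 1 << (pos % 8)

    def __contains__(self, item):
        return all(self.bits[pos // 8] & (1 << (pos % 8)) for pos in self._positions(item))


def overlap_percentage(test_text, train_filter, n=8):
    """Percentage of the test text's n-grams that the training filter reports."""
    grams = ngrams(test_text, n)
    if not grams:
        return 0.0
    return 100.0 * sum(1 for gram in grams if gram in train_filter) / len(grams)
```

A Bloom filter never misses an 8-gram that was inserted, so the reported overlap can only over-estimate the true value, by an amount bounded by the false positive rate.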

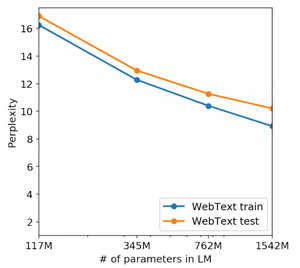

These Bloom filters let us calculate, given a dataset, the percentage of 8-grams from that dataset that are also found in the WebText training set. Table 6 shows this overlap analysis for the test sets of common LM benchmarks. Common LM datasets' test sets have between 1-6% overlap with WebText train, with an average overlap of 3.2%. Somewhat surprisingly, many datasets have larger overlaps with their own training splits, with an average of 5.9% overlap.

| | PTB | WikiText-2 | enwik8 | text8 | Wikitext-103 | 1BW |
|---|---|---|---|---|---|---|
| Dataset train | 2.67% | 0.66% | 7.50% | 2.34% | 9.09% | 13.19% |
| WebText train | 0.88% | 1.63% | 6.31% | 3.94% | 2.42% | 3.75% |
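As a quick arithmetic check, the two averages quoted for this overlap analysis (3.2% and 5.9%) can be recomputed from the table rows. The variable names are illustrative only.

```python
# 8-gram overlap (%) of each benchmark's test set, copied from the table above.
dataset_train = {"PTB": 2.67, "WikiText-2": 0.66, "enwik8": 7.50,
                 "text8": 2.34, "Wikitext-103": 9.09, "1BW": 13.19}
webtext_train = {"PTB": 0.88, "WikiText-2": 1.63, "enwik8": 6.31,
                 "text8": 3.94, "Wikitext-103": 2.42, "1BW": 3.75}


def mean(values):
    values = list(values)
    return sum(values) / len(values)


avg_dataset = mean(dataset_train.values())  # overlap with each dataset's own train split
avg_webtext = mean(webtext_train.values())  # overlap with WebText train

print(f"WebText train: {avg_webtext:.1f}%, dataset train: {avg_dataset:.1f}%")
```

The row sums are 18.93 and 35.45, so the means over six datasets are about 3.16% and 5.91%, consistent with the rounded figures in the text.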

Our approach optimizes for recall, and while manual inspection of the overlaps shows many common phrases, there are many longer matches that are due to duplicated data. This is not unique to WebText. For instance, we discovered that the test set of WikiText-103 has an article which is also in the training dataset. Since there are only 60 articles in the test set there is at least an overlap of 1.6%[5]. Potentially more worryingly, 1BW has an overlap of nearly 13.2% with its own training set according to our procedure.

For the Winograd Schema Challenge, we found only 10 schemata which had any 8-gram overlaps with the WebText training set. Of these, 2 were spurious matches. Of the remaining 8, only 1 schema appeared in any contexts that gave away the answer.

For CoQA, about 15% of documents in the news domain are already in WebText and the model performs about 3 F1 better on these. CoQA’s development set metric reports the average performance over 5 different domains and we measure a gain of about 0.5-1.0 F1 due to overlap across the various domains. However, no actual training questions or answers are in WebText since CoQA was released after the cutoff date for links in WebText.

On LAMBADA, the average overlap is 1.2%. GPT-2 performs about 2 perplexity better on examples with greater than 15% overlap. Recalculating metrics when excluding all examples with any overlap shifts results from 8.6 to 8.7 perplexity and reduces accuracy from 63.2% to 62.9%. This very small change in overall results is likely due to only 1 in 200 examples having significant overlap.
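The recalculation described above amounts to filtering out overlapping examples and recomputing both metrics. The sketch below assumes each example carries a negative log-likelihood for its target word (in nats), a correctness flag, and an 8-gram overlap fraction. That record layout, and perplexity taken as the exponential of the mean negative log-likelihood, are assumptions rather than details from the paper.

```python
import math


def summarize(examples):
    """Return (perplexity, accuracy) over a list of example records.

    Each record is a dict with 'nll' (float, nats) and 'correct' (bool).
    """
    count = len(examples)
    perplexity = math.exp(sum(ex["nll"] for ex in examples) / count)
    accuracy = sum(1 for ex in examples if ex["correct"]) / count
    return perplexity, accuracy


def without_overlap(examples):
    """Keep only examples with no 8-gram overlap with the training set."""
    return [ex for ex in examples if ex["overlap"] == 0.0]


if __name__ == "__main__":
    # Toy records, not real LAMBADA data.
    toy = [
        {"nll": 0.0, "correct": True, "overlap": 0.2},
        {"nll": math.log(4), "correct": False, "overlap": 0.0},
    ]
    print(summarize(toy))
    print(summarize(without_overlap(toy)))
```

Comparing the two summaries gives the shift attributable to overlap, analogous to the 8.6 to 8.7 perplexity change reported above.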

Overall, our analysis suggests that data overlap between WebText training data and specific evaluation datasets provides a small but consistent benefit to reported results. However, for most datasets we do not notice significantly larger overlaps than those already existing between standard training and test sets, as Table 6 highlights.

Understanding and quantifying how highly similar text impacts performance is an important research question. Better de-duplication techniques such as scalable fuzzy matching could also help better answer these questions. For now, we recommend the use of n-gram overlap based de-duplication as an important verification step and sanity check during the creation of training and test splits for new NLP datasets.

Another potential way of determining whether the performance of WebText LMs is attributable to memorization is inspecting their performance on their own held-out set. As shown in Figure 4, performance on the training and test sets of WebText is similar and improves together as model size is increased. This suggests even GPT-2 is still underfitting on WebText in many ways.

GPT-2 is also able to write news articles about the discovery of talking unicorns. An example is provided in Table 13.

5. Related Work

A significant portion of this work measured the performance of larger language models trained on larger datasets. This is similar to the work of Jozefowicz et al. (2016) which scaled RNN based language models on the 1 Billion Word Benchmark. Bajgar et al. (2016) also previously improved results on the Children’s Book Test by creating a much larger training dataset out of Project Gutenberg to supplement the standard training dataset. Hestness et al. (2017) conducted a thorough analysis of how the performance of various deep learning models changes as a function of both model capacity and dataset size. Our experiments, while much noisier across tasks, suggest similar trends hold for sub-tasks of an objective and continue into the 1B+ parameter regime.

Interesting learned functionality in generative models has been documented before, such as the cells in an RNN language model performing line-width tracking and quote/comment detection (Karpathy et al., 2015). More inspirational to our work was the observation of Liu et al. (2018) that a model trained to generate Wikipedia articles also learned to translate names between languages.

Previous work has explored alternative approaches to filtering and constructing a large text corpus of web pages, such as the iWeb Corpus (Davies, 2018).

There has been extensive work on pre-training methods for language tasks. In addition to those mentioned in the introduction, GloVe (Pennington et al., 2014) scaled word vector representation learning to all of Common Crawl. An influential early work on deep representation learning for text was Skip-thought Vectors (Kiros et al., 2015). McCann et al. (2017) explored the use of representations derived from machine translation models and Howard & Ruder (2018) improved the RNN based fine-tuning approaches of Dai & Le (2015). Conneau et al. (2017a) studied the transfer performance of representations learned by natural language inference models and Subramanian et al. (2018) explored large-scale multitask training.

Ramachandran et al. (2016) demonstrated that seq2seq models benefit from being initialized with pre-trained language models as encoders and decoders. More recent work has shown that LM pre-training is helpful when fine-tuned for difficult generation tasks like chit-chat dialog and dialog based question answering systems as well (Wolf et al., 2019) (Dinan et al., 2018).

6. Discussion

Much research has been dedicated to learning (Hill et al., 2016), understanding (Levy & Goldberg, 2014), and critically evaluating (Wieting & Kiela, 2019) the representations of both supervised and unsupervised pre-training methods. Our results suggest that unsupervised task learning is an additional promising area of research to explore. These findings potentially help explain the widespread success of pre-training techniques for down-stream NLP tasks as we show that, in the limit, one of these pre-training techniques begins to learn to perform tasks directly without the need for supervised adaptation or modification.

On reading comprehension the performance of GPT-2 is competitive with supervised baselines in a zero-shot setting. However, on other tasks such as summarization, while it is qualitatively performing the task, its performance is still only rudimentary according to quantitative metrics. While suggestive as a research result, in terms of practical applications, the zero-shot performance of GPT-2 is still far from usable.

We have studied the zero-shot performance of WebText LMs on many canonical NLP tasks, but there are many additional tasks that could be evaluated. There are undoubtedly many practical tasks where the performance of GPT-2 is still no better than random. Even on common tasks that we evaluated on, such as question answering and translation, language models only begin to outperform trivial baselines when they have sufficient capacity.

While zero-shot performance establishes a baseline of the potential performance of GPT-2 on many tasks, it is not clear where the ceiling is with fine-tuning. On some tasks, GPT-2’s fully abstractive output is a significant departure from the extractive pointer network (Vinyals et al., 2015) based outputs which are currently state of the art on many question answering and reading comprehension datasets. Given the prior success of fine-tuning GPT, we plan to investigate fine-tuning on benchmarks such as decaNLP and GLUE, especially since it is unclear whether the additional training data and capacity of GPT-2 is sufficient to overcome the inefficiencies of uni-directional representations demonstrated by BERT (Devlin et al., 2018).

7. Conclusion

When a large language model is trained on a sufficiently large and diverse dataset it is able to perform well across many domains and datasets. GPT-2 zero-shots to state-of-the-art performance on 7 out of 8 tested language modeling datasets. The diversity of tasks the model is able to perform in a zero-shot setting suggests that high-capacity models trained to maximize the likelihood of a sufficiently varied text corpus begin to learn how to perform a surprising amount of tasks without the need for explicit supervision[6].

Acknowledgements

Thanks to everyone who wrote the text, shared the links, and upvoted the content in WebText. Many millions of people were involved in creating the data that GPT-2 was trained on. Also thanks to all the Googlers who helped us with training infrastructure, including Zak Stone, J S Riehl, Jonathan Hseu, Russell Power, Youlong Cheng, Noam Shazeer, Solomon Boulos, Michael Banfield, Aman Gupta, Daniel Sohn, and many more. Finally thanks to the people who gave feedback on drafts of the paper: Jacob Steinhardt, Sam Bowman, Geoffrey Irving, and Madison May.

8. Appendix A: Samples

8.1. Model capacity

To complement the reported perplexity gains of bigger LMs on WebText shown in Figure 4, Tables 7 through 11 show side-by-side completions of the smallest WebText LM and GPT-2 on random unseen WebText test set articles.

8.2. Text Memorization

We observe some memorizing behavior in GPT-2 on longer strings that are repeated many times in the dataset such as famous quotes or speeches. For example, when conditioned on the first sentence and a half of the Gettysburg Address (which occurs approximately 40 times throughout WebText), an argmax decode from GPT-2 recovers the speech. Even when sampling without truncation, we find that the model copies the speech for a while before drifting, albeit in a similar style. It typically drifts within 100-200 tokens, and displays widening diversity once it drifts.

To quantify how often exact memorization shows up in samples, we generated samples from GPT-2 conditioned on WebText test set articles and compared the overlap rates of GPT-2’s generations to the overlap rates of the ground-truth completions. The results of this analysis are shown below and suggest that GPT-2 repeats text from the training set less often than the baseline rate of held-out articles.

8.3. Diversity

Table 12 shows multiple completions of the same random WebText test set context, showing the diversity of completions with standard sampling settings.

8.4. Robustness

Table 13 shows the previously mentioned talking unicorns news article. We find the model to be capable of handling out-of-distribution contexts, but the quality of these samples is generally lower.

| Context (WebText test) | |
|---|---|
| Outfit 1: Typical | |
| Small model completion | GPT-2 completion |
| . | for me! This is the final outfit I wore the ASICS Tiger Killshots. This version of the ASICS is a much more modern and streamlined version. The shoe has the most modern and minimal design that I can think of! With a lot of black, these sneakers look very cool. They’ll definitely be very comfortable for summer. |

| Context (WebText test) | |

|---|---|

| Some of the most glorious historical attractions in Spain date from the period of Muslim rule, including The Mezquita, built as the Great Mosque of Cordoba and the Medina Azahara, also in Cordoba and now in ruins but still visitable as such and built as the Madinat al-Zahra, the Palace of al-Andalus; and the Alhambra in Granada, a splendid, intact palace. There are also two synagogues still standing that were built during the era of Muslim Spain: Santa Maria la Blanca in Toledo and the Synagogue of Cordoba, in the Old City. Reconquista and Imperial era

Reconquista and Imperial era [ edit] | |

| Small model completion | GPT-2 completion |

| The era of Muslim Spain is very different from that of other parts of the world, with a rather extensive medieval history. While the Spanish Empire existed during the second half of the 9th century BC it was quite slowly transformed to a small, secularized empire by 1150 AD, in a much more prosperous and diverse state. In 1050 CE the emperor of Naples, at least partially in characterised by a series of wars and invasions from the north-western coast to the western coast of the region (e.g., on the Danube River which extended all the way from Gibraltar into the south of Spain, but was quickly destroyed on the same day by the Roman army), assumed the title of Emperor of Naples. He made a large contribution over the last few decades to restore and maintain the empire, the first of which to the west. His successors were Ferdinand, who took power from the Papal Throne in 1148, while the first imperial successor was King Alfred II of Sweden. The empire was again made one of the few independent states in the European Continent and although no more than 200 million people lived on its territory during this period the people had much to say to the Emperor. From 1720 on the Muslims made up half the population of Spain. | The Reconquista (literally, ”The return to the country”) of 1092 and 1095 saw the establishment in the Iberian peninsula of a united kingdom of Castile and Aragon in which a Muslim dynasty would exist. This was the last time in Europe where Muslim rule would be considered ”native” and the last occasion where Christian-inflamed and organized conflicts would make themselves known in Europe.

|

| Context (WebText test) | |
|---|---|
| Pinky | |
| Small model completion | GPT-2 completion |
| was coming to the left, they would get the ”correct” direction. | was about to cross the intersection, Pac-Man would be able to dodge the ghosts’ projectiles and return to the safe location of the safe house. |

| Context (WebText test) | |

|---|---|

| Corporal Michael P. Goeldin was an unskilled laborer from Ireland when he enlisted in Company A in November 1860. Goldein survived the war. Corporal Patrick O’Neal, also from Ireland, first enlisted in 1854 and served with Company L, 3d U.S. Artillery, in Oregon. He returned to the East Coast and enlisted in the company in 1860. O’Neal served until 1874, when he was named superintendent of the National Cemetery at Willets Point, New York. Corporal Benjamin Browne was a shoemaker from Orange County, New York. In August 1862, he enlisted in the newly formed 124th New York Volunteers, and was one of sixty-one men who transferred into Company A that October. Browne reenlisted in the company in February 1864 while it was camped at Brandy Station. He returned to civilian life after completing his enlistment in 1867.

| |

| Small model completion | GPT-2 completion |
|---|---|
| on military charges and was discharged from the US. Military. He returned to the company, and was assigned to Division of Internal Affairs during the Civil War. | in June of that year. Sentenced to be shot for desertion, he remained on active duty. |

| Context (WebText test) | |
|---|---|
| Xmonad, | |
| Small model completion | GPT-2 completion |
| was set to | , making these files read-only. I tried just starting ssh and using a program like |

| Context (WebText test) | | |
|---|---|---|
| Aaron loves mint chocolate cake, but he requires that it be paired with mini chocolate chips, so I threw some of those in between the layers. I also had a few Peppermint Jo Jos on hand so I crushed them up and threw some of those in along with some crushed meringue cookies because, why not? It’s a total smorgasbord of minty chocolate chippy cookie crunchy goodness. I didn’t measure how much of each topping I used, but after I tasted the finished product, I wish I had added more. You can add anything you want - crushed candy canes, peppermint bark, etc. And don’t be afraid to use a heavy hand. Texture = good. | | |
| GPT-2 Completion 1 | GPT-2 Completion 2 | GPT-2 Completion 3 |
| recipe. I made the longer version, then used the pan for a layer of buttercream frosting and stuck 1/3 of it into the fridge for a frosting topper. Just make sure you have all of this frosting prepared earlier than you make this cake and you won’t have to wait on it as your egg whites sit on the bottom of your mixing bowl. | recipe. If you do, and you know how to make that cake, then your life will never be the same. | recipe, which will likely yield about the same amount of cake. 1 1/2 cup granulated sugar 3 large eggs plus 1 egg yolk For the chocolate glaze 1/3 cup cocoa powder 1 3/4 cups powdered sugar 6 ounces semis |
| GPT-2 Completion 4 | GPT-2 Completion 5 | GPT-2 Completion 6 |
| recipe. 1 cup sugar 1 egg yolk 3 T. heavy cream 3 1/2-4 cups flour pinch of salt Peppermint-Jojo Topping 1 1/4 cups powdered sugar 1 cup chopped pecans 1/2 cup finely chopped mint leaves 1/2 cup chopped fresh mint (about 1/2 sheet) 1 tsp. peppermint extract 1/4 tsp. sea salt pinch of sea | recipe. | recipe, which will give you about 6-9 servings of delicious chocolate cake. To prevent your layers from sticking together, I used my 8-inch round tart pan, lined it with parchment paper, then cut up 4 7-inch round cakes from it and sandwiched the layers between them (this also worked well for the 1-layer chocolate cake as well). When you’re ready to bake this cake, remove the parchment paper, preheat oven to 350 degrees and bake for about 30-40 minutes, until the top is golden and a tester comes out clean. |

| Context (human-written): In a shocking finding, scientist discovered a herd of unicorns living in a remote, previously unexplored valley, in the Andes Mountains. Even more surprising to the researchers was the fact that the unicorns spoke perfect English. |
|---|
| GPT-2: The scientist named the population, after their distinctive horn, Ovid’s Unicorn. These four-horned, silver-white unicorns were previously unknown to science. |

| Article: Prehistoric man sketched an incredible array of prehistoric beasts on the rough limestone walls of a cave in modern day France 36,000 years ago. Now, with the help of cutting-edge technology, those works of art in the Chauvet-Pont-d’Arc Cave have been reproduced to create the biggest replica cave in the world. The manmade cavern named the Caverne du Pont-d’Arc has been built a few miles from the original site in Vallon-Pont-D’arc in Southern France and contains 1,000 painstakingly-reproduced drawings as well as around 450 bones and other features... Cavemen and women sketched an incredible array of prehistoric beasts on the rough limestone walls of a cave 36,000 years ago and now a replica has been created (pictured) ... | |
|---|---|
| GPT-2: The original site in Vallon-Pont-D’arc in Southern France is a Unesco World Heritage site and is the oldest known and the best preserved cave decorated by man. The replica cave was built a few miles from the original site in Vallon-Pont-D’Arc in Southern France. The cave contains images of 14 different species of animals including woolly rhinoceros, mammoths, and big cats. | Reference: Cave mimics famous Caverne du Pont-d’Arc in France, the oldest cave decorated by man and the best preserved. The replica contains all 1,000 paintings which include 425 such as a woolly rhinoceros and mammoths. Minute details were copied using 3D modelling and anamorphic techniques, often used to shoot widescreen images. The modern cave also includes replica paw prints of bears, bones and details preserved in the original cave. |

| Article: ‘If you can find my submarine, it’s yours,’ Russian oil billionaire Roman Abramovich once said. And it seems the oligarch and Chelsea FC owner, whose submarine is just one of the extras that came with his £300million superyacht Eclipse (perfect for getting to shore undetected), is not the only wealthy businessman splashing out on underwater exploration. Dubbed Earth’s real ‘final frontier’, the oceans are still so little-explored that billionaires are queuing up to buy vessels that give them a glimpse of the dramatic seascapes and incredible wildlife of the world’s oceans. So if you have a spare few million in the bank and want some holiday snaps and Instagram posts that will really trump everyone else, you may want to snap up one of these... Whale of a time: The OrcaSub takes you 2000 feet beneath the surface in two pressurised Perspex viewing domes for optimum exploration ... | |
|---|---|
| GPT-2: The U-Boat Worx submarine can dive to 984 feet (300 metres) and is capable of diving to the bottom of the ocean. It can be used for diving, snorkelling and even snorkel diving. The submarine is also equipped with a snorkelling platform. | Reference: Feel like a James Bond Villain with the most advanced submerged ocean vehicles. Some convert from a yacht into a submarine, and others detached off for a speedy exploration. Oliver’s Travels offers a Mile Low package on its submarine, complete with chef and butler for £175,000 a night. |

| Article: Amina Ali Qassim is sitting with her youngest grandchild on her lap, wiping away tears with her headscarf. Only a few months old, this is the baby girl whose ears she desperately tried to cover the night the aerial bombardment started. She lay awake, she says, in a village mosque on the Yemeni island of Birim, counting explosions as the baby cried... It could have been worse though. They could have still been in their house when the first missile landed. ”Our neighbor shouted to my husband ’you have to leave, they’re coming.’ And we just ran. As soon as we left the house, the first missile fell right by it and then a second on it. It burned everything to the ground,” Qassim tells us ... | |
|---|---|
| GPT-2: Yemen is in the middle of a civil war. Saudi Arabia is leading the coalition bombing campaign. It’s been bombing Yemen for more than two months now. | Reference: Amina Ali Qassim’s family sought shelter in a mosque before fleeing Yemen. Thousands like them are boarding boats to sail to Djibouti. Saudi Arabia has been pounding Yemen in a bid to defeat Houthi rebels. |

| English reference | GPT-2 French translation | |

|---|---|---|

This re-release, titled The Next Day Extra, was presented in the form of three disks: the original album, unpublished studio sessions and remixes, plus a DVD containing the four clips that have already been unveiled. |style="width:50%; vertical-align:top"| Les nouvelles re-releases, tout en premier disc, nécessaire de l'album, un studio session et remixes, plus une DVD de l'écran de quelques clips qui ont été déja échappés. |- |

French reference | GPT-2 English translation |

Un homme expliquait que le fonctionnement de la hernia fonctionnelle qu'il avait reconnait avant de faire, le fonctionnement de la hernia fonctionnelle que j'ai reussi, j'ai réussi. |

This release, originally released on three discs: the original album, sessions studio inedites and remixes, a DVD containing the four clips that were released on the previous day. |- |

|

| English reference | GPT-2 French translation |
|---|---|
| One man explained that the free hernia surgery he'd received will allow him to work again. | Un homme expliquait que le fonctionnement de la hernia fonctionnelle qu'il avait reconnait avant de faire, le fonctionnement de la hernia fonctionnelle que j'ai réussi, j'ai réussi. |

| French reference | GPT-2 English translation |
|---|---|
| Un homme a expliqué que l'opération gratuite qu'il avait subie pour soigner une hernie lui permettrait de travailler à nouveau. | A man told me that the operation gratuity he had been promised would not allow him to travel. |

| English reference | GPT-2 French translation |
|---|---|
| Speaking to an open government conference in London via video link, Mr Kerry said: ”There is no question that the President and I and others in government have actually learned of some things that had been happening on an automatic pilot because the ability has been there, going back to World War Two and to the very difficult years of the Cold War, and then, of course, 9/11.” | Le premier conference de l'édition internationale en ligne sur la Vide, le ministre de l'Edition internationale a ete Vide, lui dit : ”Il n'y a qu'une question que le president et moi et tous les autres personnes d'une administration qui a été evident par la fois, par la part de la guerre, par la part de la guer |

| French reference | GPT-2 English translation |
|---|---|
| S'exprimant lors d'une conférence inter-gouvernementale à Londres par liaison vidéo, M. Kerry a déclaré: ”Il est indéniable que le Président, moi-même et d'autres membres du gouvernement avons pris connaissance de certaines choses en mode pilote automatique parce que nous en avions la possibilité, dès la Seconde guerre mondiale et jusqu'aux années difficiles de la Guerre froide, puis bien sûr le 11 septembre.” | In a conference between the United States and London, Secretary of State John Kerry said: ”It is indeniable that the President, myself and others of the government have been aware of certain certain choices that have been made in the past in order to be able to do certain things in a more automated way.” |

| Context (passage and previous question/answer pairs) | ||

|---|---|---|

The 2008 Summer Olympics torch relay was run from March 24 until August 8, 2008, prior to the 2008 Summer Olympics, with the theme of “one world, one dream”. Plans for the relay were announced on April 26, 2007, in Beijing, China. The relay, also called by the organizers as the “Journey of Harmony”, lasted 129 days and carried the torch 137,000 km (85,000 mi) - the longest distance of any Olympic torch relay since the tradition was started ahead of the 1936 Summer Olympics.

|- |

Q: What was the theme A: “one world, one dream”.

Q: What was the length of the race? A: 137,000 km

Q: Was it larger than previous ones? A: No

Q: Where did the race begin? A: Olympia, Greece

Q: Is there anything notable about that place? A: birthplace of Olympic Games

Q: Where did they go after? A: Athens

Q: How many days was the race? A: seven

Q: Did they visit any notable landmarks? A: Panathinaiko Stadium

Q: And did they climb any mountains? A: |- |

Model answer: Everest Turker answers: unknown, yes, Yes, yes |- |

| Context (passage and previous question/answer pairs) | |

|---|---|

Tom goes everywhere with Catherine Green, a 54-year-old secretary. He moves around her office at work and goes shopping with her. ”Most people don’t seem to mind Tom,” says Catherine, who thinks he is wonderful. ”He’s my fourth child,” she says. She may think of him and treat him that way as her son. He moves around buying his food, paying his health bills and his taxes, but in fact Tom is a dog.

| |

Q: How old is Catherine? A: 54

Q: where does she live? A: |- |

Model answer: Stockholm Turker answers: Sweden, Sweden, in Sweden, Sweden |- |

Footnotes

- ↑ https://github.com/codelucas/newspaper

- ↑ https://github.com/CLD2Owners/cld2

- ↑ Alec, who previously thought of himself as good at random trivia, answered 17 of 100 randomly sampled examples correctly when tested in the same setting as GPT-2. He actually only got 14 right but he should have gotten those other 3.

- ↑ A significant portion of additional overlap is due to editors reusing some paragraphs across multiple articles with a shared theme such as various battles in the Korean War.

- ↑ Preliminary code for downloading and using the small model is available at https://github.com/openai/gpt-2

References

- (Alberti et al., 2019) ⇒ Alberti, C., Lee, K., and Collins, M. A bert baseline for the natural questions. arXiv preprint arXiv:1901.08634, 2019.

- (Artetxe et al., 2019) ⇒ Artetxe, M., Labaka, G., and Agirre, E. An effective approach to unsupervised machine translation. arXiv preprint arXiv:1902.01313, 2019.

- (Dai et al., 2019) ⇒ Dai, Z., Yang, Z., Yang, Y., Cohen, W. W., Carbonell, J., Le, Q. V., and Salakhutdinov, R. Transformer-xl: Attentive language models beyond a fixed-length context. arXiv preprint arXiv:1901.02860, 2019.

- (Barz & Denzler, 2019) ⇒ Barz, B. and Denzler, J. Do we train on test data? purging cifar of near-duplicates. arXiv preprint arXiv:1902.00423, 2019.

- (Kwiatkowski et al., 2019) ⇒ Tom Kwiatkowski, Jennimaria Palomaki, Olivia Redfield, Michael Collins, Ankur P. Parikh, Chris Alberti, Danielle Epstein, Illia Polosukhin, Jacob Devlin, Kenton Lee, Kristina Toutanova, Llion Jones, Matthew Kelcey, Ming-Wei Chang, Andrew M. Dai, Jakob Uszkoreit, Quoc Le, and Slav Petrov. (2019). “Natural Questions: A Benchmark for Question Answering Research.” In: Transactions of the Association for Computational Linguistics, 7.

- (Wieting et al., 2019) ⇒ Wieting, J. and Kiela, D. No training required: Exploring random encoders for sentence classification. arXiv preprint arXiv:1901.10444, 2019.

- (Wolf et al., 2019) ⇒ Wolf, T., Sanh, V., Chaumond, J., and Delangue, C. Transfertransfo: A transfer learning approach for neural network based conversational agents. arXiv preprint arXiv:1901.08149, 2019.

- (Yogatama et al., 2019) ⇒ Yogatama, D., d’Autume, C. d. M., Connor, J., Kocisky, T., Chrzanowski, M., Kong, L., Lazaridou, A., Ling, W., Yu, L., Dyer, C., et al. Learning and evaluating general linguistic intelligence. arXiv preprint arXiv:1901.11373, 2019.

2018a

- (Alcorn et al., 2018) ⇒ Alcorn, M. A., Li, Q., Gong, Z., Wang, C., Mai, L., Ku, W.-S., and Nguyen, A. Strike (with) a pose: Neural networks are easily fooled by strange poses of familiar objects. arXiv preprint arXiv:1811.11553, 2018.

2018b

- (Al-Rfou et al., 2019) ⇒ Rami Al-Rfou, Dokook Choe, Noah Constant, Mandy Guo, and Llion Jones. (2019). “Character-level Language Modeling with Deeper Self-attention.” In: Proceedings of the AAAI Conference on Artificial Intelligence.

2018c

- (Bowman et al., 2018) ⇒ Bowman, S. R., Pavlick, E., Grave, E., Van Durme, B., Wang, A., Hula, J., Xia, P., Pappagari, R., McCoy, R. T., Patel, R., et al. Looking for elmo’s friends: Sentence-level pretraining beyond language modeling. arXiv preprint arXiv:1812.10860, 2018.

2018d

- (Radford et al., 2018b) ⇒ Radford, A., Narasimhan, K., Salimans, T., and Sutskever, I. Improving language understanding by generative pre-training. 2018.

2018e

- (Davies, 2018) ⇒ Davies, M. The 14 billion word iweb corpus. https://corpus.byu.edu/iWeb/, 2018.

2018f

- (Dehghani et al., 2018) ⇒ Dehghani, M., Gouws, S., Vinyals, O., Uszkoreit, J., and Kaiser, L. Universal transformers. arXiv preprint arXiv:1807.03819, 2018.

2018g

- (Devlin et al., 2018) ⇒ Jacob Devlin, Ming-Wei Chang, Kenton Lee, and Kristina Toutanova. (2019). “BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding.” In: Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (NAACL-HLT 2019), Volume 1 (Long and Short Papers). DOI:10.18653/v1/N19-1423. arXiv:1810.04805

2018h

- (Dinan et al., 2018) ⇒ Dinan, E., Roller, S., Shuster, K., Fan, A., Auli, M., and Weston, J. Wizard of Wikipedia: Knowledge-powered conversational agents. arXiv preprint arXiv:1811.01241, 2018.

2018i

- (Fan et al., 2018) ⇒ Fan, A., Lewis, M., and Dauphin, Y. Hierarchical neural story generation. arXiv preprint arXiv:1805.04833, 2018.

2018j

- (Gehrmann et al., 2018) ⇒ Gehrmann, S., Deng, Y., and Rush, A. M. Bottom-up abstractive summarization. arXiv preprint arXiv:1808.10792, 2018.

2018k

- (Gong et al., 2018) ⇒ Gong, C., He, D., Tan, X., Qin, T., Wang, L., and Liu, T.-Y. Frage: frequency-agnostic word representation. In Advances in Neural Information Processing Systems, pp. 1341-1352, 2018.

2018l

- (Hoang et al., 2018) ⇒ Hoang, L., Wiseman, S., and Rush, A. M. Entity tracking improves cloze-style reading comprehension. arXiv preprint arXiv:1810.02891, 2018.

2018m

- (Howard & Ruder, 2018) ⇒ Howard, J. and Ruder, S. Universal language model fine-tuning for text classification. In: Proceedings of the 56th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), volume 1, pp. 328-339, 2018.

2018n

- (Liu et al., 2018) ⇒ Peter J. Liu, Mohammad Saleh, Etienne Pot, Ben Goodrich, Ryan Sepassi, Lukasz Kaiser, and Noam Shazeer. (2018). “Generating Wikipedia by Summarizing Long Sequences.” In: Proceedings of the Sixth International Conference on Learning Representations (ICLR-2018).

2018o

- (McCann et al., 2018) ⇒ McCann, B., Keskar, N. S., Xiong, C., and Socher, R. The natural language decathlon: Multitask learning as question answering. arXiv preprint arXiv:1806.08730, 2018.

2018p

- (Peters et al., 2018) ⇒ Peters, M. E., Neumann, M., Iyyer, M., Gardner, M., Clark, C., Lee, K., and Zettlemoyer, L. Deep contextualized word representations. arXiv preprint arXiv:1802.05365, 2018.

2018q

- (Ramachandran et al., 2018) ⇒ Ramachandran, P., Liu, P. J., and Le, Q. V. Unsupervised pretraining for sequence to sequence learning. arXiv preprint arXiv:1611.02683, 2016.

2018r

- (Recht et al., 2018) ⇒ Recht, B., Roelofs, R., Schmidt, L., and Shankar, V. Do cifar-10 classifiers generalize to cifar-10? arXiv preprint arXiv:1806.00451, 2018.

2018s

- (Reddy et al., 2018) ⇒ Reddy, S., Chen, D., and Manning, C. D. Coqa: A conversational question answering challenge. arXiv preprint arXiv:1808.07042, 2018.

2018u

- (Subramanian et al., 2018) ⇒ Subramanian, S., Trischler, A., Bengio, Y., and Pal, C. J. Learning general purpose distributed sentence representations via large scale multi-task learning. arXiv preprint arXiv:1804.00079, 2018.

2018t

- (Trichelair et al., 2018) ⇒ Trichelair, P., Emami, A., Cheung, J. C. K., Trischler, A., Suleman, K., and Diaz, F. On the evaluation of common-sense reasoning in natural language understanding. arXiv preprint arXiv:1811.01778, 2018.

2018v

- (Trinh & Le, 2018) ⇒ Trinh, T. H. and Le, Q. V. A simple method for commonsense reasoning. arXiv preprint arXiv:1806.02847, 2018.

2018w

- (Wang et al., 2018) ⇒ Alex Wang, Amanpreet Singh, Julian Michael, Felix Hill, Omer Levy, and Samuel R. Bowman. (2018). “GLUE: A Multi-Task Benchmark and Analysis Platform for Natural Language Understanding.” In: Proceedings of the 7th International Conference on Learning Representations (ICLR 2019).

2017a

- (Conneau et al., 2017a) ⇒ Conneau, A., Kiela, D., Schwenk, H., Barrault, L., and Bordes, A. Supervised learning of universal sentence representations from natural language inference data. arXiv preprint arXiv:1705.02364, 2017a.

2017b

- (Artetxe et al., 2017) ⇒ Mikel Artetxe, Gorka Labaka, Eneko Agirre, and Kyunghyun Cho. (2017). “Unsupervised Neural Machine Translation.” In: ePrint arXiv:1710.11041.

2017c

- (Conneau et al., 2017b) ⇒ Conneau, A., Lample, G., Ranzato, M., Denoyer, L., and Jegou, H. Word translation without parallel data. arXiv preprint arXiv:1710.04087, 2017b.

2017d

- (Finn et al., 2017) ⇒ Finn, C., Abbeel, P., and Levine, S. Model-agnostic meta-learning for fast adaptation of deep networks. arXiv preprint arXiv:1703.03400, 2017.

2017e

- (Hestness et al., 2017) ⇒ Hestness, J., Narang, S., Ardalani, N., Diamos, G., Jun, H., Kianinejad, H., Patwary, M., Ali, M., Yang, Y., and Zhou, Y. Deep learning scaling is predictable, empirically. arXiv preprint arXiv:1712.00409, 2017.

2017f

- (Jia & Liang, 2017) ⇒ Jia, R. and Liang, P. Adversarial examples for evaluating reading comprehension systems. arXiv preprint arXiv:1707.07328, 2017.

2017g

- (Kaiser et al., 2017) ⇒ Kaiser, L., Gomez, A. N., Shazeer, N., Vaswani, A., Parmar, N., Jones, L., and Uszkoreit, J. One model to learn them all. arXiv preprint arXiv:1706.05137, 2017.

2017h

- (Kirkpatrick et al., 2017) ⇒ Kirkpatrick, J., Pascanu, R., Rabinowitz, N., Veness, J., Desjardins, G., Rusu, A. A., Milan, K., Quan, J., Ramalho, T., Grabska-Barwinska, A., et al. Overcoming catastrophic forgetting in neural networks. Proceedings of the national academy of sciences, pp. 201611835, 2017.

2017i

- (Lake et al., 2017) ⇒ Lake, B. M., Ullman, T. D., Tenenbaum, J. B., and Gershman, S. J. Building machines that learn and think like people. Behavioral and Brain Sciences, 40, 2017.

2017j

- (Lample et al., 2017) ⇒ Lample, G., Conneau, A., Denoyer, L., and Ranzato, M. Unsupervised machine translation using monolingual corpora only. arXiv preprint arXiv:1711.00043, 2017.

2017k

- (McCann et al., 2017) ⇒ McCann, B., Bradbury, J., Xiong, C., and Socher, R. Learned in translation: Contextualized word vectors. In Advances in Neural Information Processing Systems, pp. 6294-6305, 2017.

2017l

- (Radford et al., 2017) ⇒ Radford, A., Jozefowicz, R., and Sutskever, I. Learning to generate reviews and discovering sentiment. arXiv preprint arXiv:1704.01444, 2017.

2017m

- (Schwartz et al., 2017) ⇒ Schwartz, R., Sap, M., Konstas, I., Zilles, L., Choi, Y., and Smith, N. A. Story cloze task: Uw nlp system. In: Proceedings of the 2nd Workshop on Linking Models of Lexical, Sentential and Discourse-level Semantics, pp. 52-55, 2017.

2017n

- (See et al., 2017) ⇒ See, A., Liu, P. J., and Manning, C. D. Get to the point: Summarization with pointer-generator networks. arXiv preprint arXiv:1704.04368, 2017.

2017o

- (Vaswani et al., 2017) ⇒ Vaswani, A., Shazeer, N., Parmar, N., Uszkoreit, J., Jones, L., Gomez, A. N., Kaiser, L., and Polosukhin, I. Attention is all you need. In Advances in Neural Information Processing Systems, pp. 5998-6008, 2017.

2016a

- (Amodei et al., 2016) ⇒ Amodei, D., Ananthanarayanan, S., Anubhai, R., Bai, J., Battenberg, E., Case, C., Casper, J., Catanzaro, B., Cheng, Q., Chen, G., et al. Deep speech 2: End-to-end speech recognition in english and mandarin. In: Proceedings of The International Conference on Machine Learning, pp. 173-182, 2016.

2016b

- (Ba et al., 2016) ⇒ Lei Jimmy Ba, Jamie Ryan Kiros, and Geoffrey E. Hinton. (2016). “Layer Normalization.” In: arXiv eprint. abs/1607.06450.

2016c